As organizations move beyond single-model deployments toward agentic AI systems, traditional cybersecurity approaches are being pushed to their limits. Autonomous AI agents can reason, plan, call APIs, interact with other agents, and execute workflows in real time—dramatically expanding the attack surface. This shift makes agentic AI threat modeling a critical discipline for security teams, AI engineers, and governance leaders.

This article provides an in-depth guide to agentic AI threat modeling: what it is, why it matters, how agentic AI changes threat modeling strategies, and how organizations can effectively integrate autonomous agents into existing security and risk management processes.

What Is Agentic AI Threat Modeling?

Agentic AI threat modeling is the structured process of identifying, analyzing, and mitigating security risks introduced by autonomous AI agents across their full lifecycle. It extends traditional threat modeling frameworks—such as STRIDE, MITRE ATT&CK, and OWASP—to account for AI-specific threats, including dynamic decision-making, agent interactions, and emergent behaviors.

Unlike conventional software systems, agentic AI systems are not limited to deterministic logic. They rely on:

- Large language models (LLMs) and foundation models

- Tool and API execution

- Multi-agent collaboration

- Continuous learning and adaptation

- Real-time decision-making

Threat modeling in this context focuses not only on static vulnerabilities, but also on how agent behavior, permissions, training data, and outputs can be exploited by adversaries.

At its core, agentic AI threat modeling aims to answer four questions:

- What can this agent do?

- What could go wrong?

- How could it be exploited in real-world conditions?

- What mitigations and security controls are required?

How Does Agentic AI Influence Threat Modeling Strategies?

Agentic AI fundamentally reshapes how threat modeling must be performed. Traditional models assume predictable execution paths and tightly scoped permissions. Agentic systems break both assumptions.

Expanding the Attack Surface

Agentic AI dramatically increases the attack surface by introducing:

- API access across internal and external systems

- Autonomous execution of workflows

- Chained agent interactions

- Integration with open-source tools and agent frameworks

Each additional capability introduces new dependencies, trust boundaries, and failure modes that must be modeled explicitly.

From Static Flows to Dynamic Behaviors

Traditional threat modeling focuses on static data flows and application boundaries. Agentic AI requires modeling dynamic decision trees, where agents select actions based on context, memory, and model reasoning.

Security teams must consider:

- How agents decide which tools to use

- How prompts and intermediate outputs influence decisions

- How agents react to unexpected or adversarial inputs

This makes explainability and observability essential components of modern AI security.

How Can Agentic AI Systems Pose Unique Challenges in Threat Modeling?

Agentic AI introduces a set of specific risks that do not map cleanly to legacy application security models.

1. Prompt Injection and Adversarial Attacks

Prompt injection remains one of the most significant vulnerabilities in agentic AI systems. Malicious prompts can manipulate agent behavior, override guardrails, or trigger unauthorized actions—especially when agents are connected to APIs or automation tools.

In agentic workflows, prompt injection risks are amplified by:

- Tool invocation

- Agent-to-agent communication

- Long-running sessions with memory

2. Goal Misalignment and Emergent Behavior

Autonomous agents may pursue objectives in unintended ways, leading to goal misalignment. Even without malicious input, agents can:

- Exceed intended permissions

- Generate unsafe outputs

- Automate harmful decisions

Threat modeling must account for non-adversarial failures as part of risk management.

3. Supply Chain and Dependency Risks

Agentic AI systems rely heavily on:

- Open-source libraries

- Foundation models from providers like OpenAI

- External APIs and plugins

These dependencies introduce supply chain risks, including tampering, data poisoning, and impersonation attacks.

4. Authorization and Access Control Failures

Many AI agents operate with broad permissions to enable automation. Without strict access controls and least-privilege design, attackers can exploit agents for:

- Data exfiltration

- Privilege escalation

- Denial of service

How Can Threat Modeling Help Mitigate Risks Associated With Agentic AI?

Effective threat modeling provides a structured way to identify vulnerabilities early and apply targeted mitigations before agents are deployed into production environments.

Applying Established Threat Modeling Frameworks

While agentic AI introduces new challenges, existing frameworks remain valuable when adapted correctly.

- STRIDE helps identify spoofing, tampering, repudiation, information disclosure, denial of service, and elevation of privilege in agent workflows.

- OWASP provides guidance on AI-specific vulnerabilities, including model abuse and insecure integrations.

- MITRE techniques help map adversarial behaviors and attack paths in real-world scenarios.

Security engineers should extend these frameworks to include AI-specific threats such as prompt manipulation, model inversion, and agent impersonation.

Enabling Defense-in-Depth for AI Agents

Threat modeling supports a defense-in-depth strategy by identifying where layered security controls are required, including:

- Input validation and output filtering

- Permission boundaries at the API level

- Guardrails for model behavior

- Runtime monitoring and continuous monitoring

These controls reduce the likelihood that a single failure leads to systemic compromise.

Supporting Red Teaming and Validation

Threat modeling informs AI red teaming efforts by defining realistic attack scenarios against agentic systems. Red teams can simulate:

- Adversarial prompts

- Data poisoning attempts

- Abuse of agent interactions

- Multi-agent exploitation paths

The results feed back into improved validation, policy enforcement, and security controls.

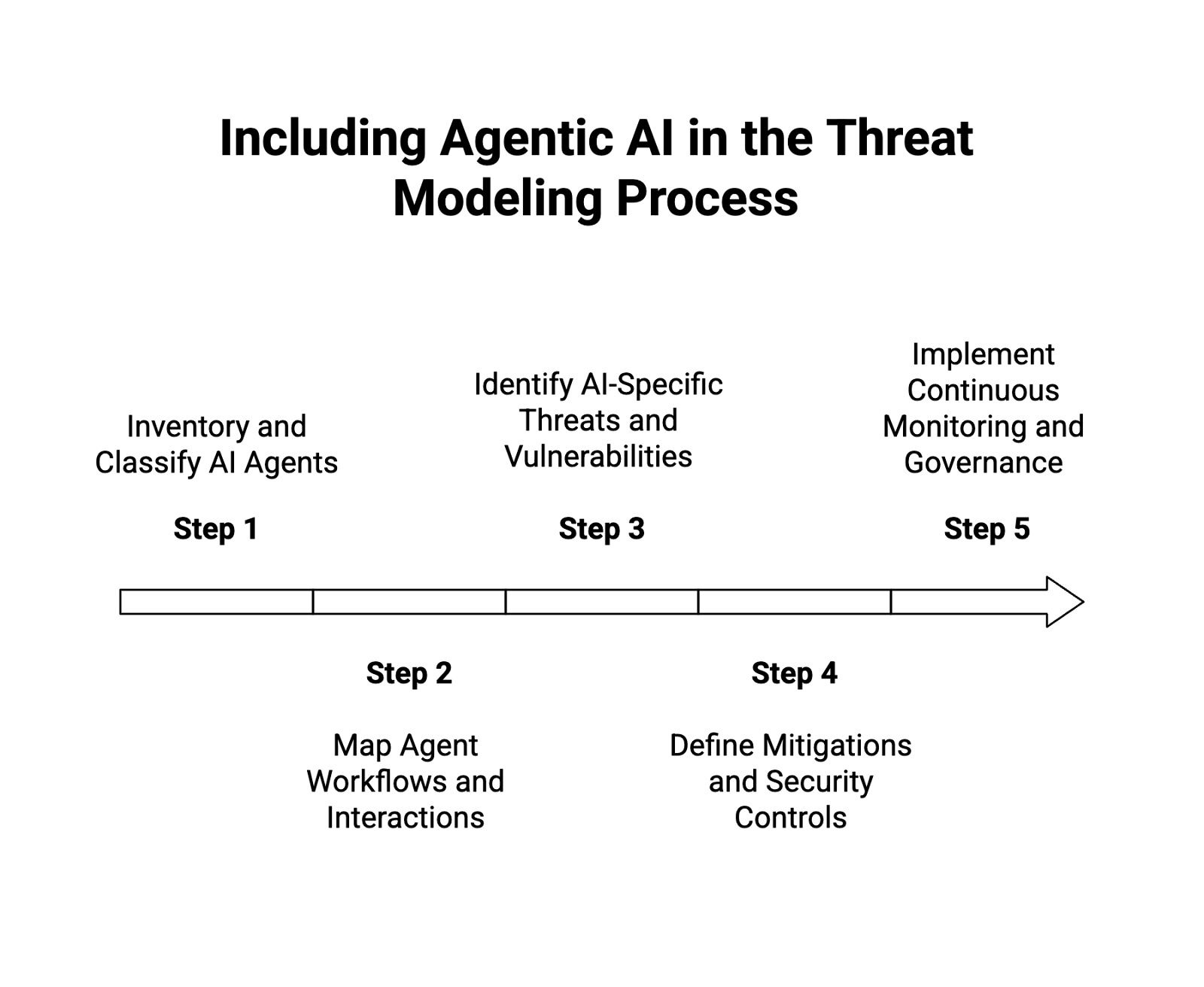

How Can Agentic AI Systems Be Effectively Included in Threat Modeling Processes?

To be effective, agentic AI threat modeling must be integrated into existing security and development lifecycles—not treated as a one-time exercise.

Step 1: Inventory and Classify AI Agents

Start by identifying all AI agents in use across the organization, including:

- Autonomous agents

- Multi-agent systems

- Embedded GenAI capabilities in applications

For each agent, document:

- Use cases

- Decision-making authority

- Access to data and APIs

- Dependencies and agent frameworks

This inventory forms the foundation for risk analysis.

Step 2: Map Agent Workflows and Interactions

Threat modeling must account for agent interactions, not just individual agents. Map:

- Inputs and outputs

- Tool and API calls

- Agent-to-agent communication

- Human-in-the-loop touchpoints

This step reveals trust boundaries and highlights where adversarial attacks could be introduced.

Step 3: Identify AI-Specific Threats and Vulnerabilities

Using the mapped workflows, security teams should identify:

- Prompt injection paths

- Data poisoning risks

- Model abuse scenarios

- Impersonation and spoofing threats

- Denial of service risks from uncontrolled automation

This analysis should consider both malicious actors and unintended behaviors.

Step 4: Define Mitigations and Security Controls

For each identified risk, define actionable mitigations, such as:

- Strict permission scoping for APIs

- Role-based access controls for agent actions

- Guardrails on model outputs

- Rate limiting and anomaly detection

- Auditability and logging for agent decisions

Mitigations should be tied directly to the AI agent lifecycle, from development to runtime.

Step 5: Implement Continuous Monitoring and Governance

Agentic AI systems evolve over time, making continuous monitoring essential. Effective programs include:

- Real-time observability into agent behavior

- Logging of prompts, decisions, and outputs

- Alerts for policy violations or anomalous actions

- Periodic reassessment of threat models

This ensures that threat modeling remains relevant as agents and use cases change.

Case Study: Threat Modeling a Multi-Agent Workflow

Consider a real-world case study involving a multi-agent system used for automated customer support and internal ticket triage.

Identified Risks

- Prompt injection via customer inputs

- Unauthorized API calls to internal systems

- Data leakage through agent-generated summaries

- Supply chain risks from open-source agent frameworks

Applied Mitigations

- Input sanitization and contextual validation

- Scoped API permissions per agent role

- Output filtering for sensitive data

- Continuous monitoring of agent behavior

By applying agentic AI threat modeling early, the organization reduced its exposure to adversarial attacks while maintaining automation benefits.

The Role of Security Teams in Agentic AI Threat Modeling

Agentic AI threat modeling is not solely an AI engineering responsibility. Security teams play a central role by:

- Defining threat modeling standards

- Selecting appropriate frameworks

- Conducting red teaming exercises

- Enforcing governance and policy controls

Collaboration between AI developers, security engineers, and risk management teams is essential for scalable, secure adoption of autonomous AI.

Conclusion: Why Agentic AI Threat Modeling Is Now Essential

As autonomous AI becomes embedded in enterprise workflows, agentic AI threat modeling is no longer optional. It provides the structure needed to understand AI-specific threats, reduce vulnerabilities, and implement effective security controls across complex agent ecosystems.

Organizations that invest in rigorous threat modeling will be better positioned to:

- Secure AI agents at scale

- Reduce real-world security incidents

- Maintain compliance and auditability

- Build trust in autonomous AI systems

In an era of rapidly evolving AI capabilities, proactive threat modeling is the foundation of responsible and resilient AI security.

About WitnessAI

WitnessAI is the confidence layer for enterprise AI, providing the unified platform to observe, control, and protect all AI activity. We govern your entire workforce, human employees and AI agents alike, with network-level visibility and intent-based controls. We deliver runtime security for models, applications, and agents. Our single-tenant architecture ensures data sovereignty and compliance. Learn more at witness.ai.