What is Auditing in AI?

AI auditing refers to the systematic process of assessing, verifying, and validating artificial intelligence (AI) systems to ensure they operate in compliance with ethical, regulatory, and organizational standards. Similar to traditional financial or operational audits, AI auditing provides an independent evaluation of how algorithms and machine learning models make decisions, manage data, and impact stakeholders.

An AI audit examines various components of the AI lifecycle, from data collection and model training to deployment and monitoring, to ensure transparency, accountability, and trustworthiness. This includes evaluating datasets, algorithms, and outputs for accuracy, fairness, explainability, and compliance with regulations such as the GDPR (data protection) and the EU AI Act (AI-specific obligations).

As organizations increasingly deploy AI-powered tools such as chatbots, predictive models, and generative AI applications, auditing plays a crucial role in identifying hidden biases, security vulnerabilities, and data protection issues that could expose organizations to reputational or legal risks.

Why is AI Auditing Important?

The growing integration of AI systems across sectors such as healthcare, financial services, and government brings immense potential—but also heightened risks. These risks include algorithmic bias, lack of transparency in decision-making, and exposure of personal or sensitive data. AI auditing helps mitigate these threats through independent oversight and structured risk assessment.

Key reasons AI auditing is essential include:

- Regulatory Compliance – With regulations like the EU AI Act and national privacy laws tightening, organizations must demonstrate responsible AI practices through verifiable audit trails.

- Ethical Accountability – Auditing reinforces ethical AI by ensuring systems adhere to fairness, explainability, and human oversight principles.

- Operational Assurance – Regular audits help maintain system reliability and performance, preventing degradation in machine learning models over time.

- Risk Management – Auditing identifies risks early in the AI lifecycle, from data quality issues to cybersecurity vulnerabilities, enabling timely mitigation.

- Trust and Transparency – Independent auditing enhances stakeholder trust in AI outputs by providing clear documentation and interpretability of decision-making processes.

Ultimately, AI auditing bridges the gap between technical innovation and governance, ensuring that AI technologies are deployed responsibly, safely, and in alignment with societal values.

What Are the Key Steps in an AI Auditing Process?

Conducting an effective AI audit requires a structured and repeatable methodology. While the specific scope varies by industry and use case, most AI audits include the following key steps:

- Define the Scope and Objectives

The audit begins by defining what will be examined, such as a chatbot, risk model, or generative AI system, and identifying relevant stakeholders, risks, and compliance requirements.

- Map the AI Lifecycle

Document the full AI lifecycle from data collection and model design to deployment and post-market monitoring. This ensures comprehensive visibility into all operational and ethical touchpoints.

- Data Assessment

Evaluate training data and datasets for quality, completeness, and potential bias. Data provenance and preprocessing methods are reviewed for compliance with data protection and privacy laws.

- Model Evaluation

Analyze the AI model’s algorithms for accuracy, robustness, and explainability. This includes examining model documentation, validation metrics, and results reproducibility.

- Governance and Risk Controls

Review organizational governance frameworks, access controls, and internal policies governing AI use. Assess alignment with risk management frameworks such as NIST AI RMF or ISO/IEC 42001.

- Compliance Review

Verify compliance with relevant auditing standards, legal frameworks (e.g., GDPR, AI Act), and sector-specific guidelines (e.g., financial services or healthcare).

- Reporting and Recommendations

Summarize findings in a detailed audit report, providing clear insights into risks, deficiencies, and corrective actions. Recommendations often include updates to data handling, model governance, and documentation practices.

- Follow-Up and Continuous Monitoring

AI auditing is not a one-time exercise. Continuous or periodic audits help ensure sustained compliance and adaptability as models evolve through retraining and real-world use.
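To make the bias check in the Data Assessment and Model Evaluation steps more concrete, the following is a minimal Python sketch that compares favourable-outcome rates across groups. The field names (`group`, `approved`), the sample records, and the 0.8 cutoff are all illustrative assumptions. The cutoff echoes the "four-fifths" rule of thumb from US employment-selection guidance and is not a threshold prescribed by the steps above.

```python
from collections import defaultdict


def selection_rates(records, group_key, outcome_key):
    """Return the share of favourable outcomes (outcome == 1) per group."""
    totals = defaultdict(int)
    favourable = defaultdict(int)
    for row in records:
        group = row[group_key]
        totals[group] += 1
        if row[outcome_key] == 1:
            favourable[group] += 1
    return {group: favourable[group] / totals[group] for group in totals}


def disparate_impact_ratio(rates):
    """Ratio of the lowest group selection rate to the highest one."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # no group received a favourable outcome
    return min(rates.values()) / highest


# Hypothetical audit sample: model decisions joined with a protected attribute.
sample = [
    {"group": "A", "approved": 1},
    {"group": "A", "approved": 1},
    {"group": "A", "approved": 1},
    {"group": "A", "approved": 0},
    {"group": "B", "approved": 1},
    {"group": "B", "approved": 0},
    {"group": "B", "approved": 0},
    {"group": "B", "approved": 0},
]

rates = selection_rates(sample, "group", "approved")
ratio = disparate_impact_ratio(rates)
flagged = ratio < 0.8  # assumed, illustrative threshold
print(rates, round(ratio, 3), "flag for review" if flagged else "within threshold")
```

A single ratio like this is only a screening signal. An audit would normally pair it with other fairness metrics and a review of how the groups and outcomes were defined.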

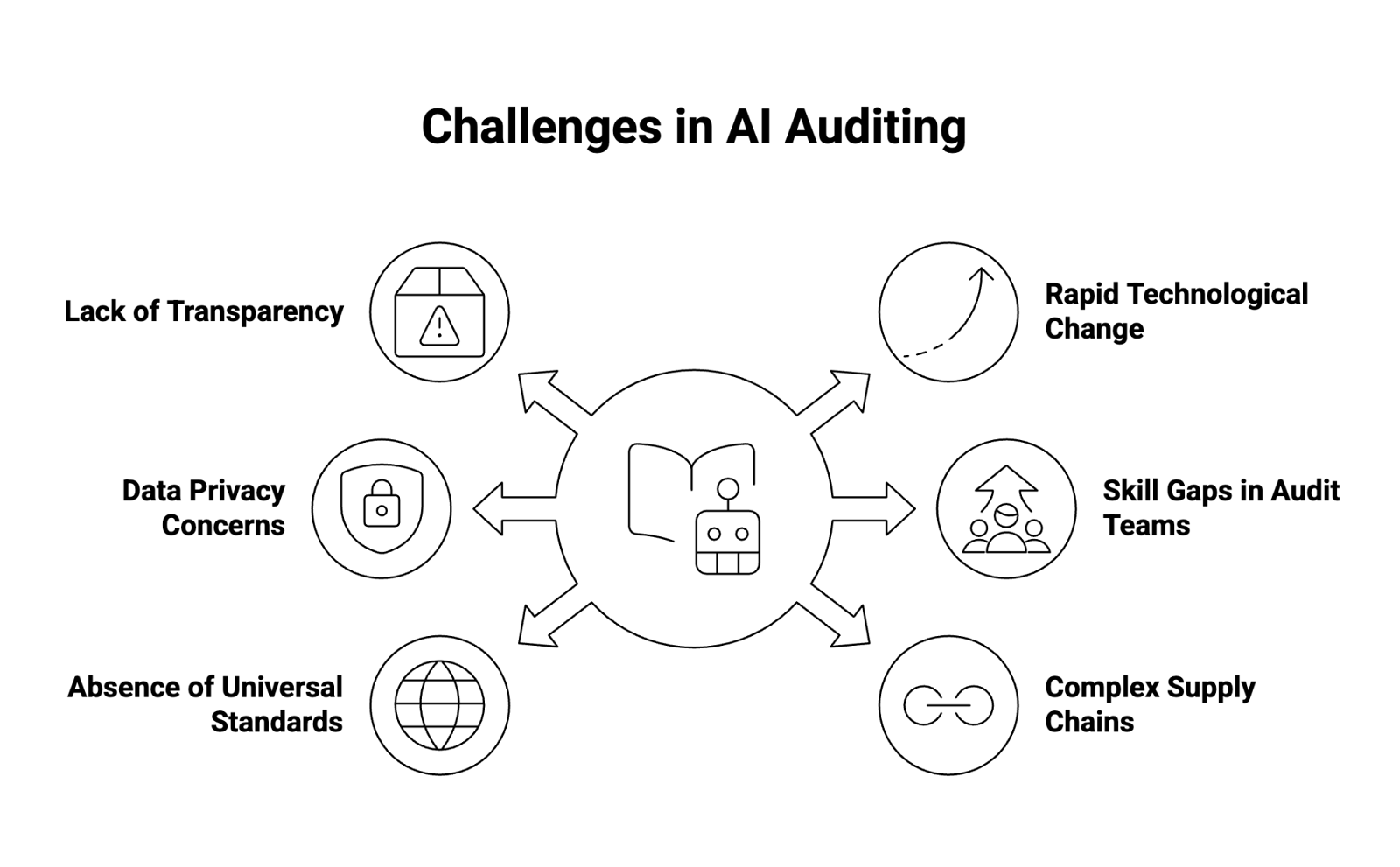

What Are the Key Challenges in Conducting an AI Audit?

AI auditing is complex, often requiring interdisciplinary expertise across data science, ethics, cybersecurity, and law. Some of the most common challenges include:

- Lack of Transparency – Many AI models, particularly deep learning or generative AI, function as “black boxes,” making it difficult for auditors to interpret internal decision logic.

- Rapid Technological Change – The pace of AI innovation often outstrips the development of standardized auditing methodologies.

- Data Privacy Concerns – Accessing training data or proprietary datasets for audit purposes can raise data protection and confidentiality issues.

- Skill Gaps in Audit Teams – Traditional internal auditors may lack technical expertise in AI or machine learning model evaluation.

- Absence of Universal Standards – While several frameworks exist, there is no globally accepted standard for AI auditing, leading to inconsistencies across industries.

- Complex Supply Chains – Many AI systems rely on third-party data or model providers, complicating accountability and traceability across the AI lifecycle.

Addressing these challenges requires a combination of upskilling, governance reforms, and alignment with established frameworks to bring structure and consistency to the audit process.

What Are AI Auditing Frameworks?

AI auditing frameworks are structured methodologies that guide organizations in evaluating AI systems through defined principles, controls, and processes. They help ensure consistency, completeness, and repeatability in the auditing process.

Why Use a Framework?

Using an established auditing framework ensures that audits are not only comprehensive but also aligned with recognized governance frameworks and risk management standards. Benefits include:

- Improved comparability across AI systems and organizations

- Consistent evaluation criteria for auditors and regulators

- Easier identification of compliance gaps and risk exposure

- Clear alignment with global best practices in cybersecurity, data privacy, and AI governance

Frameworks serve as both methodology and benchmark, guiding audit teams through each step of the evaluation process.

Top AI Auditing Frameworks for Internal Audit

Several international and national frameworks have emerged to help internal auditors and organizations evaluate and govern their AI initiatives. Below are five leading frameworks used in AI auditing:

1. COBIT Framework

The COBIT (Control Objectives for Information and Related Technologies) framework, developed by ISACA, provides a comprehensive governance model for IT systems, now extended to AI oversight. COBIT helps auditors assess AI risk, control environments, and data governance practices. It emphasizes accountability, data integrity, and compliance alignment across enterprise systems—critical elements when integrating AI-powered automation.

2. COSO ERM Framework

The Committee of Sponsoring Organizations of the Treadway Commission (COSO) Enterprise Risk Management framework offers a structured approach to identifying and managing risks within AI initiatives. COSO’s model is widely adopted in financial services and other regulated sectors for its integration of risk assessment, governance, and performance metrics. When applied to AI, COSO supports evaluating algorithmic risks, ethical considerations, and decision-making transparency.

3. U.S. Government Accountability Office (GAO) AI Framework

The GAO AI Accountability Framework (2021) was designed to guide government agencies in the responsible use of AI. It outlines four key principles: governance, data, performance, and monitoring. The framework provides a practical checklist for audit teams to assess AI models’ fairness, privacy protections, and explainability—making it one of the most detailed public-sector tools for algorithmic auditing.

4. IIA Artificial Intelligence Auditing Framework

Developed by the Institute of Internal Auditors (IIA), this framework helps organizations embed AI auditing within their broader internal audit function. It offers a risk-based approach to evaluating AI initiatives, emphasizing continuous monitoring, stakeholder engagement, and cross-disciplinary collaboration. The IIA framework also highlights the importance of aligning AI audits with corporate governance and compliance obligations.

5. Singapore PDPC Model AI Governance Framework

Created by Singapore’s Personal Data Protection Commission (PDPC), this Model AI Governance Framework provides practical guidance on implementing responsible AI principles. It addresses data quality, human oversight, and personal data protection, making it particularly relevant for high-risk AI applications and cross-border compliance. The PDPC framework has become a regional benchmark for balancing innovation with accountability.

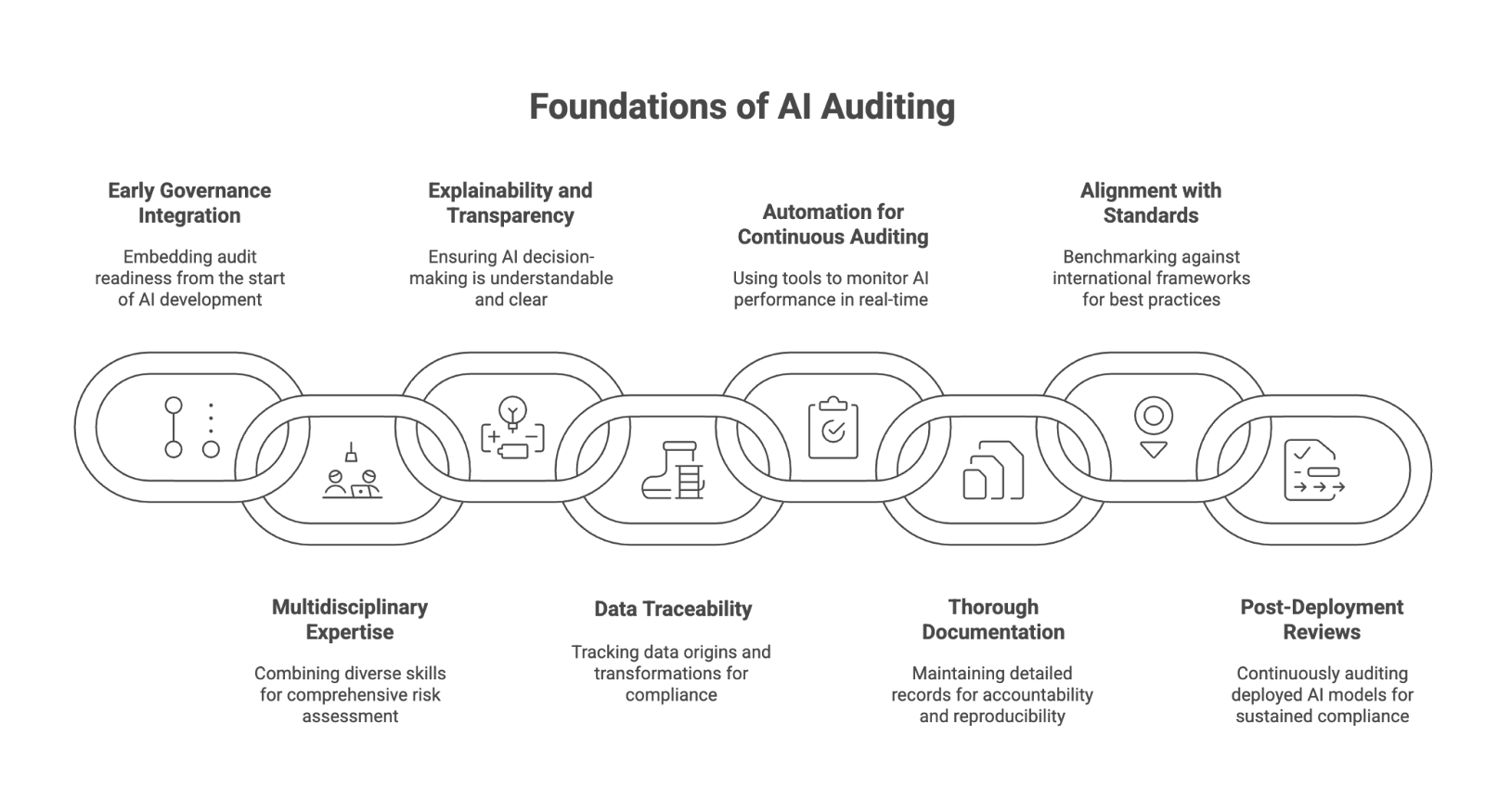

Best Practices for Auditing AI Systems

To ensure effective and reliable auditing, organizations should adopt a set of best practices grounded in technical rigor, ethical principles, and regulatory alignment.

- Integrate AI Governance Early

Embed audit readiness from the outset of AI development, ensuring that governance structures and documentation are established before deployment.

- Adopt a Multidisciplinary Approach

Combine expertise from data science, cybersecurity, ethics, and internal audit to achieve a well-rounded risk perspective.

- Prioritize Explainability and Transparency

Require that models, especially machine learning and generative AI systems, include interpretable mechanisms or documentation explaining how decisions are made.

- Maintain Data Traceability

Track the origin, transformation, and use of all datasets to ensure compliance with data privacy laws and prevent quality degradation.

- Leverage Automation for Continuous Auditing

Use automated monitoring tools to track AI performance metrics, flag anomalies, and support real-time auditing of AI systems in production.

- Document the Audit Trail Thoroughly

Keep detailed logs of methodologies, findings, and decisions to enable reproducibility and accountability for all stakeholders.

- Align with Recognized Standards

Follow international frameworks like NIST AI RMF, ISO/IEC 42001, or COBIT to benchmark performance against best practices and emerging regulations.

- Perform Post-Deployment Reviews

Continuously audit deployed AI models for drift, bias, or unexpected outputs to ensure sustained compliance and performance integrity.
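As one illustration of automated drift monitoring, the sketch below computes a Population Stability Index (PSI) between a baseline sample and a production sample of a single numeric feature. PSI is only one of several possible drift measures. The bin count, the sample data, and the 0.1 / 0.25 cutoffs are common rules of thumb assumed here for illustration, and the function names are hypothetical.

```python
import math


def psi(expected, actual, bins=10, eps=1e-6):
    """Population Stability Index between two samples of a numeric feature."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if the baseline is constant

    def shares(values):
        counts = [0] * bins
        for v in values:
            # Clamp so values outside the baseline range land in the edge bins.
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1
        # Floor empty bins at eps so the logarithm below stays defined.
        return [max(c / len(values), eps) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


def drift_status(score, warn=0.1, alert=0.25):
    """Map a PSI score to a coarse label using assumed rule-of-thumb cutoffs."""
    if score >= alert:
        return "investigate"
    if score >= warn:
        return "review"
    return "stable"


# Hypothetical data: training-time baseline versus a shifted production sample.
baseline = [i / 100 for i in range(100)]
shifted = [0.5 + i / 200 for i in range(100)]

print(drift_status(psi(baseline, baseline)))
print(drift_status(psi(baseline, shifted)))
```

In practice a check like this would run on a schedule for each monitored feature and for the model's output scores, with the results written to the audit record.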

By institutionalizing these practices, organizations can enhance their AI governance posture, improve risk analysis, and build long-term trust in their AI initiatives.
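For the audit-trail practice in particular, one way to make logs harder to alter silently is to chain entries by hash. The following is a minimal sketch under assumed names (`append_entry`, `verify_chain`) and a hypothetical event structure. It is not a scheme prescribed by any of the frameworks discussed here.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(event, prev_hash):
    """SHA-256 over the event and the previous entry's hash."""
    payload = json.dumps({"event": event, "prev": prev_hash}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_entry(log, event):
    """Append an event whose hash also covers the previous entry's hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    entry = {"event": event, "prev": prev_hash, "hash": _digest(event, prev_hash)}
    log.append(entry)
    return entry


def verify_chain(log):
    """Return True if every entry matches its hash and links to its predecessor."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev"] != prev_hash or entry["hash"] != _digest(entry["event"], prev_hash):
            return False
        prev_hash = entry["hash"]
    return True


# Hypothetical audit events.
audit_log = []
append_entry(audit_log, {"step": "data_assessment", "finding": "provenance missing for one dataset"})
append_entry(audit_log, {"step": "model_evaluation", "finding": "validation metrics reproduced"})
intact = verify_chain(audit_log)

# Editing an earlier entry after the fact breaks verification.
audit_log[0]["event"]["finding"] = "no issues"
tampered_ok = verify_chain(audit_log)
print(intact, tampered_ok)
```

This detects edits and reordering within the stored log, but not removal of the most recent entries, so the latest hash would also need to be recorded somewhere the log's owner cannot change.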

Conclusion

As AI systems become more embedded in critical decision-making, AI auditing has evolved into a vital component of responsible technology governance. Through structured methodologies, recognized frameworks, and continuous oversight, organizations can manage AI risk, ensure transparency, and uphold compliance with global standards.

A robust AI audit program not only strengthens trust among stakeholders but also enables businesses to innovate confidently—balancing automation and accountability across the AI lifecycle.
