Many organizations are already running both agentic AI and generative AI, whether or not they’ve sanctioned it. Even without a formal rollout, employees are likely using generative AI tools, agentic AI assistants, or both.

While agentic AI and generative AI share similar origins, they are fundamentally different technologies with distinct risk profiles. The controls that apply to one don’t automatically transfer to the other. That reality means enterprise security architectures must be prepared to handle the distinct vulnerabilities that come from both to protect your organization’s AI footprint.

Key Takeaways

- Generative AI produces content via a request-response model, while Agentic AI operates autonomously with persistent memory and access to tools.

- Agentic AI inherits all the risks of generative AI risk and adds new ones: expanded attack surfaces through MCP servers, cascading multi-agent failures, and more.

- Traditional security solutions can secure infrastructure and data flows, but not intent and autonomous behavior.

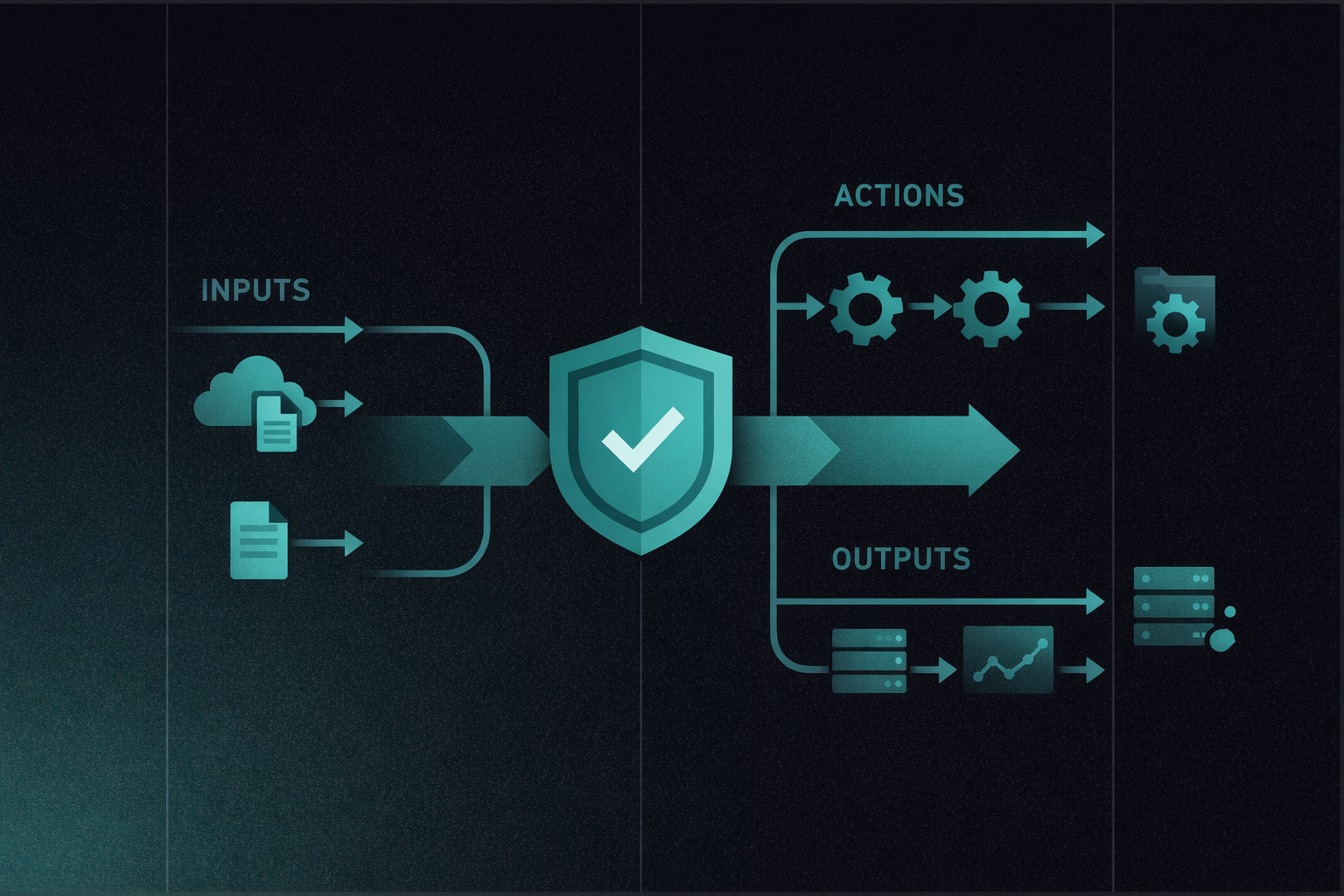

- Enterprise AI security requires three capabilities working together: unified visibility across human employees and AI agents, intelligent intent-based policies that understand context, and runtime defense that inspects prompts, responses, and tool calls before they execute.

Agentic AI vs. Generative AI: Key Differences

Generative AI creates content, while agentic AI takes action, and that distinction determines the risk category your organization faces.

What Is Generative AI?

Generative AI is a category of artificial intelligence that uses LLMs to produce new content (text, images, code, and analysis) based on user inputs. It operates through a reactive request-response pattern: a user submits a prompt, the model generates an output, and a human decides what to do with it.

The risks of using generative AI solutions include hallucinations, data leakage, and potential bias in model training, which may lead to inconsistent business decisions. However, these risks share a common characteristic: a human sits between the AI’s output and any real-world consequence.

What Is Agentic AI?

Agentic AI refers to autonomous systems that perceive, reason, and act in digital environments to achieve goals on behalf of human principals. These systems use tools, conduct economic transactions, and engage in strategic interaction, all with minimal human oversight.

Predictions estimate that by 2028, 33% of enterprise software applications will include agentic AI. The security risk profile shifts from “what did the model say?” to “what did the agent do?”

The Core Differences Between Generative AI and Agentic AI at a Glance

| Dimension | Generative AI | Agentic AI |

| Core function | Content creation (text, images, code) | Action execution and autonomous decision-making |

| Autonomy | Low; requires a prompt for every action | High; operates independently toward goals |

| Human involvement | Human-in-the-loop: reviews output before use | Human-on-the-loop; monitors with audit trails |

| Tool and system access | Minimal; primarily interfaces with users | Extensive; integrates with APIs, databases, and external systems |

| Memory | Stateless or session-temporary | Persistent memory and state across interactions |

| Primary risk type | Content risks (misinformation, hallucinations, bias, IP violations) | Action risks (unauthorized operations, cascading failures, API abuse) |

| Attack surface | The prompt and the response | Tools, context windows, memory, execution environments |

How Generative and Agentic AI Work Together

Agentic AI doesn’t replace generative AI; it’s built on top of it. The LLM serves as the cognitive reasoning engine inside every agent, while tool-calling and the Model Context Protocol (MCP) provide the connective tissue between reasoning and real-world action.

MCP is an open standard for connecting AI systems with data sources and tools, reducing fragmented integrations. MCP servers expose resources (context and data), prompts (templated workflows), and tools (executable functions). Through tool-calling, the model identifies when it needs an external function, returns structured parameters, and continues reasoning with the results. This architecture is powerful, but it means every MCP server connection, every tool call, and every reasoning loop becomes a potential point of compromise.

Security Risks of Generative and Agentic AI

The security risks of generative AI are mostly around what the model produces and what data it ingests. Agentic AI inherits all of those risks and adds a new dimension, action, where the combination of sensitive data access, untrusted content exposure, and external actuation exponentially expands the surface area for potential attacks.

Security Risks Associated With Generative AI

Generative AI risks are predominantly content risks that stem from what the model produces or what it’s exposed to during use.

- Prompt injection: In generative AI, prompt injection targets a single response. An attacker manipulates the input to override the model’s instructions and produce unintended output. The blast radius is limited to that one interaction, but it can still result in data extraction, policy bypass, or misleading outputs.

- Data leakage through prompts: Employees routinely paste sensitive information (source code, customer data, financial details, strategic plans) into generative AI prompts. That data may be stored, logged, or used for model training by third-party providers.

- Hallucinations and misinformation: Generative AI models can produce outputs that are fluent, confident, and wrong. Such hallucinated content can create operational and reputational risk when it enters reports, customer communications, or decision-making workflows.

- Bias and discrimination: Model outputs can reflect and amplify biases present in training data. In hiring, lending, customer service, and other high-stakes contexts.

Security Risks Associated With Agentic AI

Agentic AI inherits all generative AI risks above and introduces entirely new categories tied to autonomous action, tool access, and multi-agent coordination. Four areas stand out.

1. Expanded Attack Surface Through Tool Access and MCP Servers

Every tool an AI agent can invoke is a potential entry point, so agentic AI broadens the attack surface. Worse still, malicious instructions embedded in a tool’s description or metadata can execute alongside legitimate operations. That means a tool can appear to do the right thing while quietly triggering credential theft or data exfiltration.

2. Prompt Injection Becomes Prompt Hijacking

In agentic systems, prompt injection escalates into prompt hijacking, a qualitative shift with fundamentally different consequences. Unlike a one-shot chatbot exploit, agent hijacking persists across sessions through memory systems, requires no continuous attacker interaction, and exploits the legitimate permissions the agent already possesses.

3. Cascading Failures Across Multi-Agent Systems

Cascading failures happen when one agent generates hallucinated output that downstream agents interpret as legitimate input, creating chain reactions that undermine the integrity of the broader multi-agent ecosystem.

The temporal dimension makes this particularly dangerous: in human organizations, cascading failures develop over hours, days, or weeks, giving leadership time to detect and intervene. In multi-agent AI systems, the same cascade can complete in seconds.

4. The Identity Problem: Agents Don’t Have Badges

Agents create IAM challenges that traditional OAuth-based frameworks struggle to resolve for highly autonomous systems.

For example, Agent A authenticates with a user’s credentials, delegates to Agent B, which spawns Agent C. Traditional IAM can tell you who authenticated and what was accessed. However, it cannot answer why Agent C needed access to financial records or whether the original user authorized that downstream action.

How to Mitigate the Security Risks of Generative AI and Agentic AI

Closing the gap between legacy security architectures and these AI risks requires three capabilities that work together as a unified framework.

1. Visibility Across the Entire AI Workforce

Security teams need a single view of every AI interaction, whether it’s an employee prompting ChatGPT, a developer using GitHub Copilot in an IDE, or an autonomous agent making tool calls through MCP servers.

That visibility includes maintaining an internal AI inventory documenting AI models, agents, and data, including any unsanctioned or shadow AI usage. The visibility must extend to native applications, embedded copilots, and agent-to-agent communication, not just browser-based tools.

WitnessAI provides this kind of visibility at enterprise scale: network-level discovery of AI activity across human employees and autonomous agents, automatic detection of agentic plugins and MCP connections, and a continuously updated catalog covering more than 4,000 AI applications, without requiring browser extensions, endpoint clients, or SDK modifications.

2. Intent-Based Policies, Not Pattern Matching

AI policy enforcement works when it understands meaning, not just strings. Pattern matching fails because AI interactions are conversational, context-dependent, and evolve across sessions.

Here’s what intent-based policy enforcement needs to look like:

- Treat AI risk as behavioral, not just data-shaped. A prompt can request sensitive work without containing obvious PII patterns. Security systems need to understand what users and agents are trying to do, not just scan for keywords.

- Use context-aware classification for non-deterministic systems. Generative AI outputs vary, which makes signature-style enforcement inconsistent. Intent-based classification evaluates purpose and context rather than relying on the same strings showing up every time.

- Enforce with nuance, not binaries. Graduated enforcement, proportionate to risk, such as “allow, warn, block, or route with data tokenization and rehydration,” can drive responsible use of AI rather than a blunt allow/block posture that stalls adoption.

When policies reflect intent, you can keep legitimate work moving while still stopping the interactions that create unnecessary exposure.

3. Runtime Defense for Prompts, Responses, and Tool Calls

Once an agent has made a tool call, the impact can be immediate and hard to roll back. That’s why runtime defense needs to operate before execution, not after.

A practical runtime defense model includes:

- Pre-execution protection that scans prompts before agents process them, detects injection attempts, and applies data tokenization so PII and credentials never reach third-party models.

- Response protection that inspects outputs before delivery, filtering harmful or non-compliant content.

- Tool-call defense and attribution that extends inspection to the structured actions agents take through connected tools.

WitnessAI implements this bidirectional architecture with single-tenant deployment, customer-controlled encryption (BYOK), and SOC 2 Type II compliance.

The Security Architecture That Enables the Agent Era

Generative AI and agentic AI aren’t competing categories; agentic AI is built on generative AI, but security designed for one doesn’t cover the other.

Content filtering and prompt monitoring addressed the first wave of enterprise AI adoption. Today, protecting the entire digital workforce demands visibility into tool calls and MCP servers, intelligent policies that understand autonomous behavior, runtime defense that operates bidirectionally at machine speed, and identity attribution that traces every agent action back to its human origin.

We built WitnessAI as a unified AI enablement platform to give security and AI teams a shared framework to move from AI hesitation to AI confidence, with intent-based policies, bidirectional visibility, and runtime guardrails that protect the entire digital workforce at scale.

The organizations that will get AI adoption right won’t choose between innovation and security. They’ll find a way to prove to regulators, boards, and customers that both are possible, and use that proof to move faster than everyone else.