What is AI Runtime Security?

AI runtime security is the discipline of protecting artificial intelligence (AI) models and applications during live execution. Unlike training or deployment security, runtime security focuses on the moment when AI systems are actively running, processing inputs, and generating outputs.

As organizations increasingly adopt large language models (LLMs), AI agents, and generative AI (GenAI) applications, runtime security has become a critical requirement. Attackers now target the live execution environment—where AI interacts with users, APIs, and sensitive data—because this is where the most valuable information and vulnerabilities exist.

Runtime protection ensures that AI workloads, whether in cloud-native environments like AWS or Kubernetes, or on-premises, remain safe against exploitation, malicious inputs, and real-time data leakage.

What Does Runtime Security Mean?

In traditional cybersecurity, runtime security means protecting systems, applications, and workloads as they run. For AI, this concept extends to model behavior and AI-powered workflows.

Key aspects of runtime security for AI include:

- Runtime monitoring: Continuous observability of inputs, outputs, APIs, and workloads.

- Policy enforcement: Applying rules in real-time to block unsafe requests or responses.

- Threat detection: Identifying unusual patterns, adversarial prompts, or anomalous data access.

- Remediation: Taking immediate action to stop or limit the impact of an attack.

Runtime protection ensures that even if vulnerabilities exist in code, datasets, or models, attackers cannot exploit them during execution.

What are Common Threats to AI Runtime Security?

AI runtime environments face unique and evolving threats that traditional defenses cannot fully address:

- Prompt Injection Attacks

Malicious users craft prompts designed to override LLM safeguards and trick models into leaking sensitive data or executing restricted actions. - Data Leakage

Unintended exposure of personally identifiable information (PII), proprietary datasets, or customer data through model outputs. - Adversarial Attacks

Subtle manipulations of input data to cause machine learning models to misclassify, malfunction, or generate harmful outputs. - API Exploitation

Attackers abuse AI endpoints by injecting malicious requests, escalating privileges, or exfiltrating data. - Insider Misuse

Authorized users with excessive permissions misuse access to extract sensitive information or manipulate outcomes. - Malware in Workflows

AI-powered applications integrated with external workflows may execute malicious scripts injected into prompts, plugins, or connected systems.

These threats underscore the importance of embedding runtime monitoring and observability directly into the AI lifecycle.

How Can AI Runtime Security Protect Against Real-Time Threats?

AI runtime security is specifically designed to address real-time attacks that occur during execution. It protects AI apps, LLMs, and endpoints by:

- Monitoring Inputs and Outputs

- Scanning user prompts and model outputs for malicious patterns, policy violations, or sensitive data.

- Dynamic Access Controls

- Enforcing granular permissions and blocking suspicious actions.

- Runtime Observability

- Tracking behavior of AI models, workloads, and connected APIs in real-time.

- Automated Mitigation

- Using AI-powered security solutions to shut down attacks instantly—before they cause damage.

- Cloud-Native Security

- Integrating runtime protection into AWS, Kubernetes, and on-premises deployments for consistent coverage.

This real-time protection ensures that AI threats are neutralized at the point of impact, minimizing business disruption.

How Can AI Runtime Security Help Prevent Data Breaches?

AI systems often handle high-value datasets across industries such as finance, healthcare, and government. Without runtime security, the risk of data leakage is high, whether through malicious prompt injection or unintended outputs.

Runtime protection reduces the likelihood of breaches by:

- Detecting and blocking sensitive data in outputs before they are exposed.

- Monitoring API calls and data flows across cloud-native workloads.

- Applying security policies that comply with frameworks like OWASP AI Security.

- Preventing unauthorized access to datasets, endpoints, and downstream applications.

For enterprises, AI runtime security strengthens data protection initiatives, reduces regulatory risk, and ensures customer trust.

How Can AI Runtime Security Help Prevent Malicious Attacks on Machine Learning Models?

Machine learning models deployed in production are frequent targets for adversaries seeking to compromise accuracy or exploit weaknesses. Runtime protection helps organizations defend against these threats by:

- Adversarial Detection – Identifying inputs designed to trick models into making incorrect predictions.

- Runtime Monitoring of Model Behavior – Detecting unusual drift, hallucinations, or compromised outputs.

- Red Teaming Exercises – Stress-testing AI systems against AI-specific vulnerabilities like prompt injection, backdoors, and data poisoning.

- Automated Remediation – Blocking malicious requests and retraining with sanitized datasets where necessary.

This approach ensures that AI-powered applications remain reliable, safe, and aligned with intended business functions.

AI Runtime Security in Cloud-Native Environments (AWS & Kubernetes)

Most enterprise AI applications today run in cloud-native ecosystems, making runtime security across distributed environments critical.

- AWS Security Integration

AI runtime security tools can be integrated into AWS workloads to monitor AI agents, LLMs, and APIs in real-time. This ensures both network security and data protection across workloads. - Kubernetes and Containerized AI

Many AI apps run in Kubernetes clusters, where microservices and endpoints are exposed. Runtime security here involves monitoring pods, scanning API traffic, and applying runtime protection against threats at the container level. - Hybrid and On-Premises Deployments

For industries requiring on-premises AI systems (e.g., defense, healthcare), runtime monitoring tools enforce security posture across mixed environments.

These integrations ensure that runtime protection scales across all forms of AI deployment.

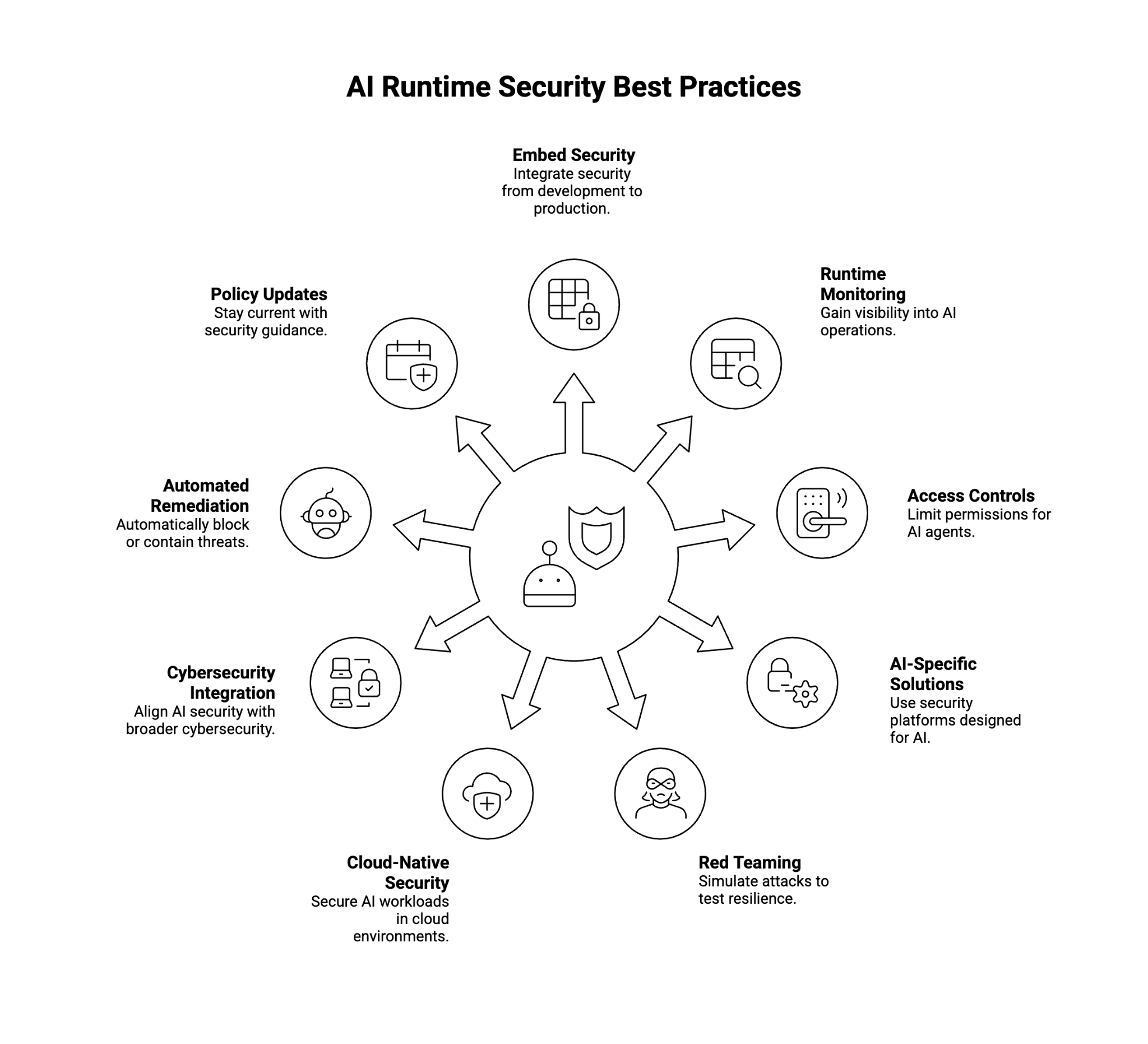

AI Runtime Security Best Practices

To effectively defend against AI threats, organizations should adopt the following runtime security best practices:

- Embed Security Throughout the AI Lifecycle

- Treat runtime protection as part of the model lifecycle, from development to production.

- Use Runtime Monitoring and Observability Tools

- Gain visibility into inputs, outputs, APIs, and workloads.

- Apply Strong Access Controls

- Limit permissions and enforce least-privilege policies for all AI agents and endpoints.

- Deploy AI-Specific Runtime Protection Solutions

- Use security platforms built for AI instead of relying only on traditional firewalls.

- Conduct AI Red Teaming and Stress Testing

- Simulate prompt injection and adversarial attacks to validate resilience.

- Adopt Cloud-Native Runtime Security Practices

- Monitor AWS workloads, secure Kubernetes clusters, and enforce runtime protection across multi-cloud environments.

- Integrate with Broader Cybersecurity Ecosystem

- Align AI runtime security with network security, cloud security, and endpoint protection for defense-in-depth.

- Enable Automated Remediation

- Use AI-powered security solutions that can automatically block or contain runtime threats.

- Regularly Update Security Policies

- Stay aligned with evolving OWASP AI Security guidance and industry regulations.

By following these steps, enterprises can build a robust defense against runtime-specific vulnerabilities in the AI ecosystem.

Conclusion

As enterprises accelerate adoption of AI applications, LLMs, and generative AI use cases, runtime security is becoming a foundational layer of protection. Without it, organizations remain vulnerable to real-time attacks, prompt injection exploits, and data breaches that compromise trust and compliance.

With strong runtime protection, observability, and cloud-native security solutions, organizations can ensure AI systems remain safe, resilient, and aligned with business goals. By embedding AI runtime security best practices into the lifecycle, enterprises gain confidence in deploying AI responsibly at scale.

About WitnessAI

WitnessAI is the confidence layer for enterprise AI, providing the unified platform to observe, control, and protect all AI activity. We govern your entire workforce, human employees and AI agents alike, with network-level visibility and intent-based controls. We deliver runtime security for models, applications, and agents. Our single-tenant architecture ensures data sovereignty and compliance. Learn more at witness.ai.