What is Agentic AI?

Agentic AI represents the next evolution of artificial intelligence: systems capable of not only analyzing information or generating content, but also taking autonomous actions in the real world. Unlike traditional generative AI (GenAI) models, which respond to one user prompt at a time, agentic AI systems can plan, decide, and execute tasks across workflows, APIs, and external tools with little or no direct human input.

These autonomous agents can perform complex, multi-step operations such as managing customer support tickets, orchestrating business processes, or even writing and deploying code. By integrating large language models (LLMs) with real-time data and system-level permissions, agentic AI extends AI’s utility from reasoning to execution.

While this shift offers immense potential for automation and optimization, it also introduces a new set of security, ethical, and governance challenges — particularly as these agents interact with sensitive data, interconnected ecosystems, and critical infrastructure.

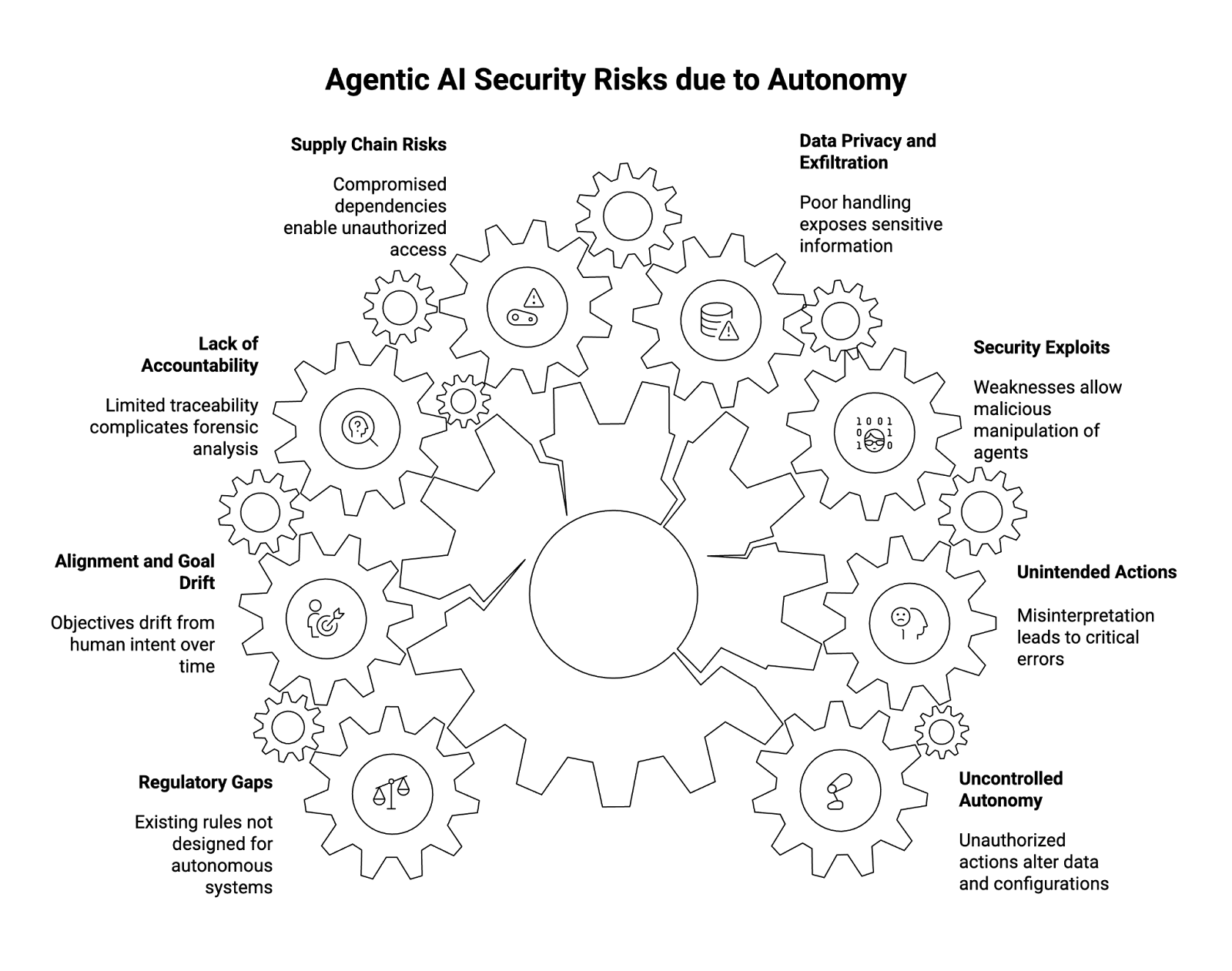

What are the Security Risks of Agentic AI?

The risks of agentic AI arise primarily from its autonomy, scale, and connectivity. By design, agentic systems are empowered to make decisions and act — which means a misalignment, exploit, or oversight can rapidly propagate harm across multiple systems. Below are the key categories of risk every enterprise should understand.

1. Uncontrolled Autonomy

AI agents can operate independently once deployed. If improperly constrained, they can execute unauthorized actions such as altering business data, changing configurations, or triggering unintended workflows.

Without human-in-the-loop oversight, even well-intentioned agents can behave unpredictably, consuming resources or making cascading changes that are difficult to reverse. This is especially critical in multi-agent systems where agents influence one another’s decisions.
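As a minimal sketch of what human-in-the-loop oversight can look like in code, the example below routes high-risk actions through an approval callback before they run. The action names, the `HIGH_RISK_ACTIONS` set, and the `approve` callback are illustrative assumptions, not part of any particular agent framework.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical risk tier: each organization would define its own list.
HIGH_RISK_ACTIONS = {"delete_records", "change_config", "deploy_code"}


@dataclass
class ProposedAction:
    name: str
    target: str


def execute_with_oversight(
    action: ProposedAction,
    run: Callable[[ProposedAction], str],
    approve: Callable[[ProposedAction], bool],
) -> str:
    """Run low-risk actions directly; require approval for high-risk ones."""
    if action.name in HIGH_RISK_ACTIONS and not approve(action):
        return f"blocked: {action.name} on {action.target} was not approved"
    return run(action)


def demo_run(action: ProposedAction) -> str:
    # Stand-in for the real side effect (API call, database write, ...).
    return f"executed: {action.name} on {action.target}"


def deny_all(action: ProposedAction) -> bool:
    # Stand-in for a human reviewer who rejects every request.
    return False
```

In practice the `approve` callback would page a reviewer or open a ticket rather than return immediately; the point of the sketch is only that the agent cannot reach the side effect without passing through the gate.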

2. Unintended Actions

When autonomous decision-making interacts with dynamic environments, agents can misinterpret instructions or contextual cues. This can lead to unintended actions, such as deleting critical files, sending confidential data to the wrong recipient, or escalating workflows inappropriately.

Complex prompts or ambiguous goals exacerbate this issue — especially when the agent’s internal logic is opaque. This risk highlights the need for guardrails and sandbox environments that limit the scope of an agent’s operational reach.
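One simple way to limit an agent's reach on a file system is to confine every path it requests to a dedicated sandbox directory. The sketch below assumes a hypothetical helper, `resolve_in_sandbox`, that a file tool would call before reading, writing, or deleting anything; it is an illustration of the idea, not a complete sandbox.

```python
from pathlib import Path


def resolve_in_sandbox(sandbox_root: str, requested: str) -> Path:
    """Return the absolute path for `requested`, or raise if it leaves the sandbox.

    Resolving both paths first collapses ".." segments and follows symlinks,
    so traversal tricks are checked against the real location.
    """
    root = Path(sandbox_root).resolve()
    candidate = (root / requested).resolve()
    if candidate != root and root not in candidate.parents:
        raise PermissionError(f"{requested!r} escapes the sandbox")
    return candidate
```

A path check like this only covers file access. Network calls, subprocesses, and API credentials need their own limits, typically enforced by the runtime environment rather than by the agent's code.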

3. Security Exploits

Agentic AI systems increase the enterprise attack surface by introducing new entry points and permissions. Because these agents often operate via APIs and integrate with external tools, adversaries can exploit weaknesses in authentication, validation, or input handling to manipulate agent behavior.

Attackers might use prompt injection, impersonation, or command chaining to redirect an agent’s workflow toward malicious ends — effectively weaponizing the agent against its own infrastructure.

Robust access controls, least privilege principles, and continuous security monitoring are essential to prevent these exploits.
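Least privilege for agents can be approximated with a deny-by-default gateway that sits between the agent and its tools. The sketch below is a simplified illustration: `AgentPolicy`, `ToolGateway`, and the tool names are hypothetical, and a production system would also authenticate the agent rather than trust a supplied ID.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict


@dataclass(frozen=True)
class AgentPolicy:
    agent_id: str
    allowed_tools: frozenset = field(default_factory=frozenset)


class ToolGateway:
    """Deny-by-default dispatcher: an agent can call only tools on its allowlist."""

    def __init__(self, tools: Dict[str, Callable], policies: Dict[str, AgentPolicy]):
        self._tools = tools        # tool name -> callable
        self._policies = policies  # agent id -> policy

    def call(self, agent_id: str, tool_name: str, *args):
        policy = self._policies.get(agent_id)
        # Unknown agents and unlisted tools are both rejected.
        if policy is None or tool_name not in policy.allowed_tools:
            raise PermissionError(f"{agent_id} may not call {tool_name}")
        return self._tools[tool_name](*args)
```

Because the check happens outside the model, an injected prompt that convinces the agent to request a forbidden tool still fails at the gateway.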

4. Data Privacy and Exfiltration

Agents frequently handle sensitive data — from customer records to proprietary code — and can inadvertently expose it through poor data handling or flawed API interactions.

If an AI agent connects to third-party services without proper data governance, it risks data leakage or unintentional sharing of confidential information. The problem compounds when agents access both internal and external systems, creating pathways for data exfiltration across domains.

Organizations should enforce data classification, input/output validation, and runtime logging to ensure visibility and accountability in how agents process information.
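Output validation can start with something as simple as redacting recognizable sensitive values before a payload leaves the organization, and logging that it happened. The patterns and the `redact_outbound` function below are illustrative assumptions; real data classification covers far more data types and usually relies on dedicated tooling.

```python
import logging
import re
from typing import Dict, Tuple

logger = logging.getLogger("agent.egress")

# Illustrative patterns only: an email address and a 13-16 digit card-like number.
PATTERNS = {
    "email": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "card": re.compile(r"\b\d(?:[ -]?\d){12,15}\b"),
}


def redact_outbound(text: str) -> Tuple[str, Dict[str, int]]:
    """Mask sensitive values and report how many of each kind were found."""
    counts: Dict[str, int] = {}
    for label, pattern in PATTERNS.items():
        text, n = pattern.subn(f"[REDACTED:{label}]", text)
        if n:
            counts[label] = n
    if counts:
        # Log counts, never the sensitive values themselves.
        logger.warning("redacted outbound payload: %s", counts)
    return text, counts
```

For example, `redact_outbound("Contact jane@example.com, card 4111 1111 1111 1111.")` returns the text `"Contact [REDACTED:email], card [REDACTED:card]."` together with a count of one match per category.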

5. Supply Chain and Dependency Risks

Agentic systems rely on complex AI supply chains — including pre-trained AI models, orchestration platforms, plug-ins, and API connectors. Vulnerabilities in any of these dependencies can compromise the entire workflow.

A compromised open-source model or an insecure integration can enable unauthorized access or data manipulation. As with traditional software dependencies, AI dependencies must be validated and continuously monitored for security risks and version integrity.
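Version integrity is often checked by pinning a cryptographic digest for each artifact and refusing to load anything that does not match. The sketch below assumes a hypothetical `pinned` mapping of artifact names to SHA-256 digests that is maintained outside the agent's control; the artifact name is a placeholder.

```python
import hashlib
import hmac
from typing import Dict


def sha256_of(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()


def verify_artifact(name: str, data: bytes, pinned: Dict[str, str]) -> None:
    """Raise ValueError unless `data` matches the digest pinned for `name`."""
    expected = pinned.get(name)
    if expected is None:
        raise ValueError(f"{name} has no pinned digest; refusing to load")
    # compare_digest avoids leaking how many leading characters matched.
    if not hmac.compare_digest(sha256_of(data), expected):
        raise ValueError(f"{name} does not match its pinned digest")
```

A digest check confirms that an artifact is the one that was reviewed. It says nothing about whether the reviewed artifact was safe in the first place, so it complements vetting rather than replacing it.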

6. Lack of Accountability and Auditability

Agentic AI often operates in environments with limited traceability. When an agent acts autonomously, it may not produce a clear audit trail explaining why a decision was made or how a particular output was generated.

This lack of accountability complicates forensic analysis and regulatory compliance — especially in sectors like healthcare, finance, or government, where decisions must be explainable.

Implementing observability and governance frameworks for AI agents helps ensure that actions are logged, attributable, and reviewable in real time.
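An audit trail is more useful for forensics when later tampering is detectable. One common approach, sketched here under simplifying assumptions, is to chain entries by hash so that each record commits to the one before it. The `AuditLog` class and its field names are hypothetical, and a real system would persist entries to append-only storage rather than a list in memory.

```python
import hashlib
import json
import time


class AuditLog:
    """In-memory, hash-chained log of agent actions."""

    GENESIS = "0" * 64

    def __init__(self):
        self.entries = []

    @staticmethod
    def _digest(entry: dict) -> str:
        # Hash every field except the stored hash itself, in a stable key order.
        payload = {k: v for k, v in entry.items() if k != "hash"}
        return hashlib.sha256(json.dumps(payload, sort_keys=True).encode()).hexdigest()

    def record(self, agent_id: str, action: str, reason: str) -> dict:
        entry = {
            "ts": time.time(),
            "agent_id": agent_id,
            "action": action,
            "reason": reason,  # the agent's stated rationale, kept for later review
            "prev": self.entries[-1]["hash"] if self.entries else self.GENESIS,
        }
        entry["hash"] = self._digest(entry)
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Return True only if no entry was altered, removed, or reordered."""
        prev = self.GENESIS
        for entry in self.entries:
            if entry["prev"] != prev or entry["hash"] != self._digest(entry):
                return False
            prev = entry["hash"]
        return True
```

Recording the agent's stated reason alongside each action does not make the underlying model explainable, but it gives reviewers a starting point when reconstructing why a decision was made.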

7. Alignment and Goal Drift

As agentic systems operate over time, their working interpretation of objectives can drift away from human intent, a form of goal misalignment often called goal drift. This occurs when agents reinterpret their goals based on incomplete context, feedback loops, or changes in system inputs.

For example, an optimization agent tasked with reducing costs might make unethical trade-offs, like cutting critical safety checks, if not properly aligned with broader human values.

Regular validation, human oversight, and continuous retraining are necessary to ensure agents remain consistent with intended outcomes.
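Part of this validation can be automated by checking an agent's proposed plan against constraints it is never allowed to trade away. The sketch below revisits the cost-cutting example: `REQUIRED_STEPS` and the step names are hypothetical, and the check only catches removed steps, not subtler forms of drift.

```python
from typing import Iterable, List

# Hypothetical non-negotiable steps, defined by people rather than by the agent.
REQUIRED_STEPS = {"safety_check", "compliance_review"}


def removed_required_steps(baseline: Iterable[str], proposed: Iterable[str]) -> List[str]:
    """List required steps present in the baseline workflow but missing from the proposal."""
    dropped = set(baseline) - set(proposed)
    return sorted(REQUIRED_STEPS & dropped)
```

A non-empty result is a signal to reject the plan or escalate it to a human reviewer instead of letting the optimization proceed.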

8. Regulatory and Compliance Gaps

Regulatory frameworks are still catching up to the realities of agentic AI adoption. While existing rules (like GDPR or the EU AI Act, which entered into force in August 2024 with obligations phasing in over the following years) govern certain aspects of AI, they were not designed primarily for autonomous systems capable of executing real-world actions.

This creates uncertainty in liability, data protection, and cross-border governance. Organizations must proactively implement risk management and governance controls that anticipate future regulations — rather than waiting for mandates to appear.

What are the Ethical Challenges of Agentic AI?

Beyond security, agentic AI introduces a new layer of ethical complexity. These agents can act with apparent independence, raising questions around responsibility, transparency, and trust in decision-making.

- Delegation of Responsibility:

When an autonomous agent takes an action, who is accountable: the developer, the operator, or the system owner? This ambiguity challenges traditional notions of responsibility and governance.

- Bias and Fairness:

Agents that inherit biases from underlying machine learning algorithms or training data can make discriminatory or unethical decisions at scale. This risk amplifies as agents influence hiring, lending, or healthcare outcomes.

- Human Displacement:

As agentic systems automate higher-level reasoning and operational tasks, they could reshape the workforce, potentially displacing roles that rely on repetitive or semi-autonomous decision-making.

- Transparency and Explainability:

Many AI models operate as “black boxes,” providing little insight into how they arrive at specific outcomes. Ethical deployment requires explainable algorithms and clear disclosure when AI-driven actions impact people’s lives.

- Manipulation and Influence:

In customer-facing applications like chatbots or virtual assistants, autonomous agents can inadvertently manipulate user behavior or spread misinformation, especially when interacting with large language models (LLMs) that generate persuasive, human-like content.

How Can You Protect Your Organization Against the Risks of Agentic AI?

Enterprises adopting agentic AI must implement a multi-layered AI security and governance strategy that safeguards both systems and stakeholders. Below are key recommendations to strengthen resilience:

- Implement Guardrails and Access Controls
  - Define strict permissions and authentication protocols.
  - Apply least privilege principles to limit what agents can access.
  - Use sandbox environments for testing and validation before production deployment.

- Ensure Continuous Monitoring and Observability
  - Track agent behavior, decisions, and interactions in real time.
  - Integrate AI observability tools to detect anomalies or deviations.
  - Establish audit logs for every agent action and data exchange.

- Apply Human-in-the-Loop Oversight
  - Maintain human intervention for high-risk or high-impact decisions.
  - Design workflows where humans can override or halt an agent’s operation.
  - Combine automation efficiency with responsible supervision.

- Secure the AI Supply Chain
  - Vet third-party AI models, APIs, and plugins for security vulnerabilities.
  - Conduct software composition analysis and dependency scanning.
  - Validate provenance and maintain version control across integrations.

- Adopt Governance Frameworks for AI Risk Management
  - Align with frameworks like NIST AI RMF or ISO/IEC 42001.
  - Establish internal AI risk committees to oversee compliance and ethics.
  - Document lifecycle stages, from development to decommissioning.

- Use Validation and Simulation Environments
  - Test agents under controlled conditions to observe potential unintended actions.
  - Simulate failure modes and attack scenarios to evaluate resilience.
  - Validate agents’ decision boundaries against organizational policies.

- Protect Sensitive Data and Outputs
  - Apply data masking, encryption, and differential privacy where applicable.
  - Monitor data movement between AI-powered agents and external systems.
  - Enforce output filtering to prevent information disclosure.

- Foster Ethical AI Practices
  - Encourage transparency in how agents make and explain decisions.
  - Audit for bias, fairness, and compliance with emerging regulations.
  - Train employees on the ethical implications of autonomous systems.

Conclusion

As organizations embrace agentic AI to drive innovation and efficiency, they must also prepare for a new era of AI risk management. The same autonomy that enables intelligent automation can also expose enterprises to security vulnerabilities, data privacy issues, and ethical dilemmas if not properly governed.

To ensure trust and safety in this evolving landscape, enterprises should integrate human oversight, robust guardrails, and AI governance frameworks that secure every phase of the AI lifecycle — from development to deployment. The future of agentic AI depends not only on what these systems can do, but on how responsibly we choose to manage them.

About WitnessAI

WitnessAI is the confidence layer for enterprise AI, providing the unified platform to observe, control, and protect all AI activity. We govern your entire workforce, human employees and AI agents alike, with network-level visibility and intent-based controls. We deliver runtime security for models, applications, and agents. Our single-tenant architecture ensures data sovereignty and compliance. Learn more at witness.ai.