As artificial intelligence (AI) becomes embedded in enterprise workflows, cybersecurity teams must adapt to defend against unique AI-specific risks. AI threat modeling—a structured methodology to identify, evaluate, and mitigate security threats in AI and machine learning (ML) systems—has emerged as a core component of AI security strategies.

This article explains what AI threat modeling is, how it supports risk mitigation, and what strategies and best practices security teams should adopt to protect AI systems across their lifecycle.

What Is AI Threat Modeling?

AI threat modeling is the process of systematically identifying, analyzing, and mitigating potential threats that target artificial intelligence systems. It builds on traditional threat modeling methods used in software development, such as STRIDE and PASTA, and on adversary knowledge bases such as MITRE ATT&CK, and adapts them to the distinct attack surfaces, data flows, and model-specific risks found in machine learning and generative AI.

At its core, AI threat modeling involves:

- Understanding how an AI system operates, including data pipelines, model architecture, and outputs.

- Identifying weaknesses specific to AI components that expose them to attacks such as model inversion, adversarial examples, and data poisoning.

- Mapping the threat landscape associated with AI capabilities like large language models (LLMs) or generative AI tools.

- Creating a risk mitigation plan that is aligned with business goals, compliance requirements, and operational workflows.

It serves as both a proactive defense mechanism and a strategic decision-making tool for AI security.

How Can AI Threat Modeling Help Mitigate Risks in Machine Learning Systems?

Machine learning systems introduce a wide range of security threats not present in traditional software. These include model exploitation, poisoned training data, unauthorized inference, and leakage of sensitive information through outputs.

Threat modeling offers a structured approach to manage these risks:

1. Early Identification of Specific Threats

- Enables teams to prioritize vulnerabilities during development rather than react post-deployment.

- Helps uncover exposure to adversarial examples, information disclosure, and authentication gaps before attackers can exploit them.

2. Tailored Mitigation Strategies

- For instance, a threat model may recommend access controls for LLM APIs to prevent abuse of output capabilities.

- Strategies such as input validation, rate limiting, and differential privacy can be defined in advance.
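As a minimal sketch of two of the controls named above, the following shows input validation and per-client rate limiting in front of an LLM API. The limits (`MAX_PROMPT_CHARS`, `MAX_REQUESTS`, `WINDOW_SECONDS`), the class name, and the validation rules are illustrative assumptions, not recommended values or any particular product's behavior.

```python
from collections import defaultdict, deque

MAX_PROMPT_CHARS = 4000   # assumed limit, tune per application
MAX_REQUESTS = 5          # assumed number of requests allowed per window
WINDOW_SECONDS = 60.0     # assumed window length


class SlidingWindowLimiter:
    """Allow at most `max_requests` per client within `window` seconds."""

    def __init__(self, max_requests=MAX_REQUESTS, window=WINDOW_SECONDS):
        self.max_requests = max_requests
        self.window = window
        # client_id -> timestamps of accepted requests, oldest first
        self._hits = defaultdict(deque)

    def allow(self, client_id, now):
        hits = self._hits[client_id]
        # Drop timestamps that have fallen out of the window.
        while hits and now - hits[0] >= self.window:
            hits.popleft()
        if len(hits) >= self.max_requests:
            return False
        hits.append(now)
        return True


def validate_prompt(prompt):
    """Return a list of problems; an empty list means the prompt passed."""
    problems = []
    if not prompt.strip():
        problems.append("empty prompt")
    if len(prompt) > MAX_PROMPT_CHARS:
        problems.append("prompt too long")
    if any(ord(ch) < 32 and ch not in "\n\t\r" for ch in prompt):
        problems.append("control characters present")
    return problems
```

In a real service, `now` would typically come from `time.monotonic()`. Passing it in explicitly keeps the limiter easy to test. Checks like these reduce abuse but do not by themselves stop prompt injection.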

3. Resilience Across the AI Lifecycle

- From model training to real-time deployment, threat modeling supports ongoing validation of AI systems.

- This is essential to address evolving threats and supply chain dependencies in open-source models.

4. Aligning Security with Business Risk

- AI threat modeling connects technical threats to business impacts—such as denial of service (DoS) affecting critical automation, or model drift impacting decision-making in healthcare or finance.

By aligning threats with remediation plans, organizations can optimize their security posture and minimize long-term risk.

How Can AI Threat Modeling Improve the Security of AI Systems?

While traditional application security focuses on code vulnerabilities, AI threat modeling expands the focus to include:

- Data-centric risks: e.g., poisoning of training data, exposure of datasets, or malicious data flow manipulation.

- Model-centric risks: e.g., fine-tuning attacks, prompt injection, or the generation of harmful content via genAI outputs.

- Infrastructure risks: e.g., exposed APIs, or insecure hosting of models and code on platforms such as AWS, Azure, or GitHub.

In doing so, threat modeling enables:

- Holistic AI security across model inputs, processing, and outputs.

- Better visibility into workflow automation, especially where AI powers decision-making.

- Prevention of real-world breaches, where threat actors exploit AI systems for unauthorized access, misinformation, or data exfiltration.

Incorporating threat intelligence and guidance from sources such as MITRE and OWASP, alongside tooling such as Microsoft’s Threat Modeling Tool, enhances situational awareness and helps teams stay ahead of new threats.

What Strategies Are Effective in AI Threat Modeling?

Effective AI threat modeling requires a multi-pronged approach that balances methodology, tooling, and expertise. Key strategies include:

1. Adapt STRIDE for AI

The STRIDE model (Spoofing, Tampering, Repudiation, Information Disclosure, Denial of Service, Elevation of Privilege) can be applied to AI contexts by:

- Examining tampering of training data (e.g., poisoned datasets).

- Assessing risks of information disclosure through model outputs.

- Preventing denial of service on AI inference endpoints.
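One way to make the STRIDE mapping concrete is to record each threat against its category and then list the categories that have no entry yet. The sketch below encodes only the three examples above. The component names and the `uncovered_categories` helper are hypothetical, chosen for illustration.

```python
from dataclasses import dataclass
from enum import Enum


class Stride(Enum):
    SPOOFING = "Spoofing"
    TAMPERING = "Tampering"
    REPUDIATION = "Repudiation"
    INFORMATION_DISCLOSURE = "Information Disclosure"
    DENIAL_OF_SERVICE = "Denial of Service"
    ELEVATION_OF_PRIVILEGE = "Elevation of Privilege"


@dataclass(frozen=True)
class Threat:
    category: Stride
    component: str      # hypothetical component name
    description: str


# The three AI examples listed above; component names are illustrative.
THREATS = [
    Threat(Stride.TAMPERING, "training-data",
           "Poisoned dataset alters model behavior"),
    Threat(Stride.INFORMATION_DISCLOSURE, "model-output",
           "Outputs leak sensitive information"),
    Threat(Stride.DENIAL_OF_SERVICE, "inference-endpoint",
           "Request floods exhaust inference capacity"),
]


def uncovered_categories(threats):
    """Return STRIDE categories with no recorded threat, in STRIDE order."""
    covered = {t.category for t in threats}
    return [c for c in Stride if c not in covered]


for category in uncovered_categories(THREATS):
    print(f"No threats recorded yet for: {category.value}")
```

An empty category is not evidence of safety. It is a prompt for the team to ask whether that class of threat applies to the system.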

2. Use Attack Trees and AI-Specific Scenarios

- Develop attack trees focused on adversarial attacks, model evasion, or LLM misuse.

- Simulate scenarios like prompt injection in GPT-powered applications to test defenses.
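An attack tree like those described above can be sketched as nested AND/OR nodes, where a goal is achievable if its sub-goals are. The tree below is a hypothetical prompt injection scenario. Its node names and feasibility flags are assumptions for illustration, not findings about any real application.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    name: str
    gate: str = "LEAF"        # "LEAF", "AND", or "OR"
    feasible: bool = False    # only meaningful for leaves
    children: list = field(default_factory=list)


def achievable(node):
    """Leaves use their flag; AND needs all children, OR needs any child."""
    if node.gate == "LEAF":
        return node.feasible
    results = [achievable(child) for child in node.children]
    if node.gate == "AND":
        return all(results)
    if node.gate == "OR":
        return any(results)
    raise ValueError(f"unknown gate: {node.gate}")


def build_tree(untrusted_content_reaches_prompt, output_filter_missing):
    # Hypothetical tree for prompt injection against an LLM-backed app.
    return Node("Exfiltrate data via prompt injection", "AND", children=[
        Node("Deliver injected instructions", "OR", children=[
            Node("Direct user input", feasible=True),
            Node("Injected text in retrieved documents",
                 feasible=untrusted_content_reaches_prompt),
        ]),
        Node("Response leaves the system unfiltered",
             feasible=output_filter_missing),
    ])


# With an output filter in place, the AND gate at the root fails.
print(achievable(build_tree(True, output_filter_missing=False)))
```

Toggling leaf flags shows which single control breaks the attack path, which helps when deciding where to test defenses first.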

3. Focus on Data and Model Flow Mapping

- Map all data flows from ingestion to output.

- Document dependencies across open-source, proprietary, and third-party AI tools.
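A data flow map can start as a plain list of edges between components. The sketch below uses a hypothetical pipeline (all component names and trust labels are assumptions) to show two questions such a map can answer: which components untrusted data can eventually reach, and where it first crosses into trusted components.

```python
# Hypothetical data flow: each edge is (source, destination).
FLOWS = [
    ("user-upload", "ingestion"),
    ("third-party-feed", "ingestion"),
    ("ingestion", "feature-store"),
    ("feature-store", "training"),
    ("training", "model-registry"),
    ("model-registry", "inference-api"),
    ("internal-db", "feature-store"),
]
UNTRUSTED = {"user-upload", "third-party-feed"}  # assumed trust labels


def reachable_from(sources, flows):
    """Return every component that data from `sources` can reach,
    including the sources themselves."""
    graph = {}
    for src, dst in flows:
        graph.setdefault(src, []).append(dst)
    seen = set(sources)
    stack = list(sources)
    while stack:
        node = stack.pop()
        for nxt in graph.get(node, []):
            if nxt not in seen:
                seen.add(nxt)
                stack.append(nxt)
    return seen


def boundary_crossings(untrusted, flows):
    """Edges where an untrusted component hands data to a trusted one."""
    return [(s, d) for s, d in flows if s in untrusted and d not in untrusted]


print(sorted(reachable_from(UNTRUSTED, FLOWS)))
print(boundary_crossings(UNTRUSTED, FLOWS))
```

The crossing edges are natural places for validation and provenance checks, since everything downstream of them inherits whatever they let through.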

4. Integrate Threat Modeling into DevSecOps Workflows

- Incorporate AI threat modeling into CI/CD pipelines and model development lifecycles.

- Leverage automation to identify potential threats during model updates or retraining.
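One simple form of such automation is a pipeline step that fails when a model artifact has changed since the threat model was last reviewed. The sketch below assumes the team records a SHA-256 hash per artifact at review time. The function names and the `reviewed_hashes` mapping are hypothetical and not part of any specific CI product.

```python
import hashlib


def sha256_of(path, chunk_size=1 << 20):
    """Stream a file through SHA-256 so large artifacts are not loaded at once."""
    digest = hashlib.sha256()
    with open(path, "rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def stale_artifacts(reviewed_hashes, artifact_paths):
    """Return paths whose current hash differs from the hash recorded at review.

    `reviewed_hashes` is an assumed mapping of path -> hex digest that the
    team stores alongside the threat model.
    """
    return [
        path for path in artifact_paths
        if reviewed_hashes.get(path) != sha256_of(path)
    ]


def main(reviewed_hashes, artifact_paths):
    stale = stale_artifacts(reviewed_hashes, artifact_paths)
    for path in stale:
        print(f"threat model review needed: {path} changed since last review")
    # A nonzero return value can be used as the exit code to fail the step.
    return 1 if stale else 0
```

In a pipeline this would usually be wired up with `sys.exit(main(...))` after loading the recorded hashes from version control. A hash mismatch only signals that a review is due, not that the new artifact is unsafe.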

5. Evaluate AI Capabilities and Limitations

- Analyze the functionality and outputs of the AI system: What is it allowed to do? What safeguards are missing?

- Determine whether a capability like auto-completion or decision recommendation can be weaponized.

These strategies provide both proactive insight and defensive structure, forming the backbone of a secure AI deployment.

Who Should Be Involved in AI Threat Modeling?

AI threat modeling is a cross-functional discipline. Effective execution involves a variety of stakeholders:

| Role | Responsibilities in Threat Modeling |
| --- | --- |
| Security Teams | Lead the threat modeling process, define mitigation tactics, monitor ongoing threats. |
| AI/ML Engineers | Provide insight into model training, architecture, and data flow. |
| Product Owners | Prioritize risk tolerance and define acceptable behavior for AI functionalities. |
| Compliance Officers | Ensure regulatory alignment with frameworks like NIST, OWASP, and industry-specific standards. |
| Developers | Implement technical controls, authentication protocols, and access management mechanisms. |

Collaboration ensures coverage of AI-specific attack vectors while aligning technical risks with business impact.

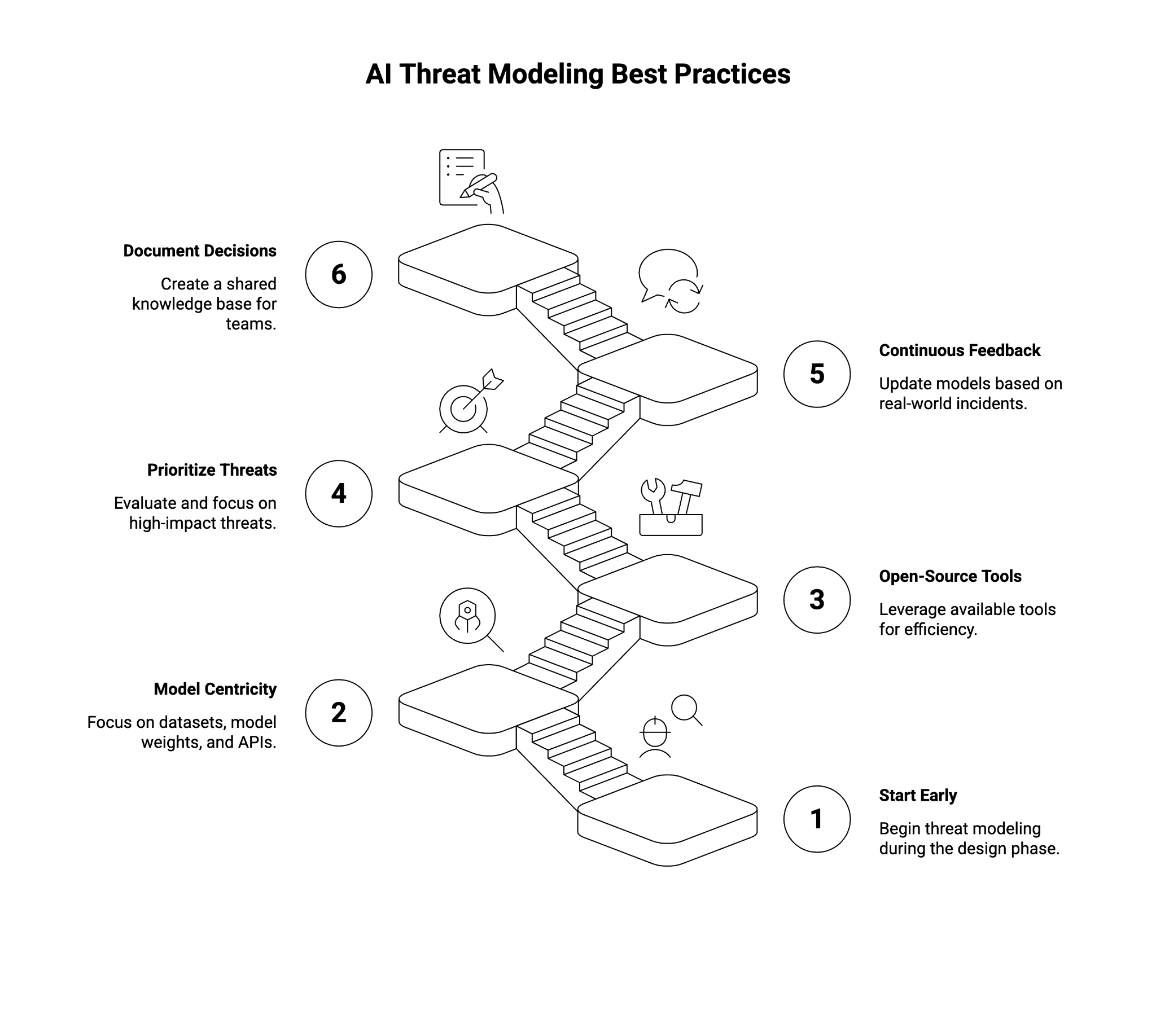

What Are the AI Threat Modeling Best Practices?

To maximize effectiveness, organizations should follow these best practices:

1. Start Early and Repeat Often

- Begin threat modeling during the design phase of AI systems.

- Revisit the threat model regularly as new capabilities or dependencies are introduced.

2. Focus on Model and Data Centricity

- Include datasets, model weights, and inference APIs as first-class entities in the threat model.

- Account for data provenance, integrity, and access patterns.

3. Use Free and Open Resources Where Applicable

- Leverage resources like Microsoft’s Threat Modeling Tool, the OWASP AI Security and Privacy Guide, and MITRE ATLAS, a knowledge base of adversary tactics and techniques against AI systems.

- Integrate GitHub-hosted checklists or templates to streamline evaluations.

4. Prioritize Based on Impact and Likelihood

- Not all threats are equal. Use a risk matrix to evaluate severity and likelihood.

- Focus resources on high-impact, high-likelihood threats such as sensitive data exposure or unauthorized output generation.
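A risk matrix of this kind reduces to a small calculation: score each threat as impact times likelihood, then sort. In the sketch below, the 1 to 5 scales, the rating thresholds, and the example scores are all illustrative assumptions, not measured values.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Risk:
    name: str
    impact: int       # 1 (low) to 5 (severe), assumed scale
    likelihood: int   # 1 (rare) to 5 (almost certain), assumed scale

    @property
    def score(self):
        return self.impact * self.likelihood


def rating(score):
    # Assumed thresholds; adjust to the organization's risk appetite.
    if score >= 15:
        return "high"
    if score >= 8:
        return "medium"
    return "low"


def prioritize(risks):
    """Sort by score, highest first; ties are broken by impact."""
    return sorted(risks, key=lambda r: (r.score, r.impact), reverse=True)


# Example entries with made-up scores, for illustration only.
RISKS = [
    Risk("Inference endpoint flooding", impact=3, likelihood=2),
    Risk("Sensitive data exposure via outputs", impact=5, likelihood=4),
    Risk("Unauthorized output generation", impact=4, likelihood=4),
]

for risk in prioritize(RISKS):
    print(f"{risk.score:>2}  {rating(risk.score):<6}  {risk.name}")
```

A multiplicative score is only one convention. Some teams prefer a lookup table so that rare but catastrophic threats are not ranked below frequent minor ones.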

5. Incorporate Continuous Feedback Loops

- Update threat models based on real-world incidents, user feedback, or threat intelligence.

- Align with adaptive security principles to handle evolving threats.

6. Document Assumptions and Decisions

- Create a shared knowledge base of identified threats, decision-making rationale, and mitigation strategies.

- Ensure that documentation is accessible across software development, AI, and security teams.

When done correctly, AI threat modeling not only reduces security risks but also improves the trustworthiness, robustness, and resilience of AI deployments.

Final Thoughts

As AI-powered systems become critical to business operations, the need for structured AI threat modeling is more urgent than ever. With threats ranging from adversarial attacks to output manipulation in generative AI, traditional application security methods fall short. A dedicated threat modeling approach—grounded in AI functionality, stakeholder collaboration, and proactive mitigation—enables organizations to defend AI assets in a complex, evolving landscape.

From early design to real-time deployment, threat modeling equips enterprises to build secure, reliable, and trustworthy AI systems.

About WitnessAI

WitnessAI is the confidence layer for enterprise AI, providing the unified platform to observe, control, and protect all AI activity. We govern your entire workforce, human employees and AI agents alike, with network-level visibility and intent-based controls. We deliver runtime security for models, applications, and agents. Our single-tenant architecture ensures data sovereignty and compliance. Learn more at witness.ai.