Artificial intelligence has transformed how organizations build software, automate workflows, and analyze data. At the same time, it has fundamentally changed the cyber threat landscape. Today, attackers increasingly use AI to scale cybercrime, automate reconnaissance, and bypass traditional defenses. This evolution has given rise to a new class of threats collectively referred to as AI hacking.

This article provides a structured, security-focused overview of AI hacking, how threat actors use AI in real-world attacks, and what the business implications are for modern enterprises.

What Is AI Hacking?

AI hacking refers to the use of artificial intelligence, machine learning, and generative AI to carry out, enhance, or automate cyberattacks. Unlike traditional hacking, which often relies on manual techniques and static scripts, AI hacking leverages AI models, large language models (LLMs), and adaptive algorithms to operate at scale and in real time.

AI-enabled attacks can:

- Analyze large datasets to identify vulnerabilities

- Automate phishing and social engineering campaigns

- Generate malware variants that evade antivirus tools

- Adapt attack strategies dynamically based on defenses encountered

In practice, AI hacking expands the attack surface of AI systems and traditional IT environments alike, introducing new vulnerabilities across the AI lifecycle—from training data to runtime inference.

What Is an AI Hacker?

An AI hacker is a threat actor who intentionally uses AI tools, AI-based automation, or generative models to conduct cybercrime. These individuals may be highly skilled security researchers turned adversaries, organized cybercriminal groups, or opportunistic scammers leveraging open-source AI tools.

AI hackers are not always building models from scratch. Many rely on:

- Open-source machine learning frameworks

- Publicly available datasets

- AI-powered chatbots and APIs

- Commercial generative AI platforms such as OpenAI and Anthropic

By combining human operators with automated AI agents, AI hackers can dramatically increase the speed, volume, and sophistication of cyberattacks.

How Is AI Used by Cybercriminals?

AI is now embedded across multiple stages of the cybercrime lifecycle—from reconnaissance to exploitation and monetization.

Phishing and Social Engineering

AI-driven phishing campaigns are far more convincing than traditional scams. Using large language models, cybercriminals can generate personalized phishing messages that mimic writing styles, corporate tone, and even internal communications.

Common AI-enabled phishing techniques include:

- Highly targeted spear-phishing emails

- AI chatbots impersonating customer support

- Social media scams tailored using scraped profile data

- Prompt injection attacks against enterprise chatbots

These attacks significantly increase success rates by exploiting human trust and psychological manipulation.

Vulnerability Discovery and Exploitation

Machine learning models can rapidly scan codebases, APIs, and exposed services to identify potential weaknesses. AI-powered tools can:

- Analyze software repositories for insecure functions

- Correlate threat intelligence feeds to predict exploitable flaws

- Automate proof-of-concept exploit generation

This reduces the time between vulnerability disclosure and active exploitation, putting defenders at a disadvantage.

Malware and Ransomware

Generative AI is increasingly used to produce AI-generated malware and ransomware variants. These tools can:

- Rewrite malicious code to evade signature-based antivirus

- Automatically test payloads against detection engines

- Customize ransomware for specific environments

As a result, AI-driven malware adapts faster than traditional defensive controls.

Types of AI Hacking

AI hacking manifests across several distinct but overlapping attack categories.

AI Deepfake

Deepfakes use generative AI to create realistic fake video or audio content. Attackers leverage deepfakes for executive impersonation, financial fraud, and social engineering attacks.

AI-Generated Malware

Malware written or modified by AI models can change structure dynamically, making detection and reverse engineering more difficult for security teams.

AI Social Engineering

AI-enhanced social engineering campaigns analyze social media and public data to craft highly believable scams targeting individuals or enterprises.

AI Brute Force Attacks

Machine learning models optimize credential stuffing and password guessing by prioritizing likely combinations based on historical breach data.

AI Phishing

AI phishing automates message generation, localization, and adaptation, allowing attackers to launch large-scale campaigns with minimal effort.

CAPTCHA Cracking

AI-based computer vision models can defeat CAPTCHA systems designed to block bots, enabling automated account creation and abuse.

Voice Cloning

Voice cloning attacks replicate a person’s voice using short audio samples, enabling fraudulent phone calls that bypass human verification.

Keystroke Listening

AI models can infer keystrokes using audio signals or motion data, posing risks to sensitive data and authentication mechanisms.

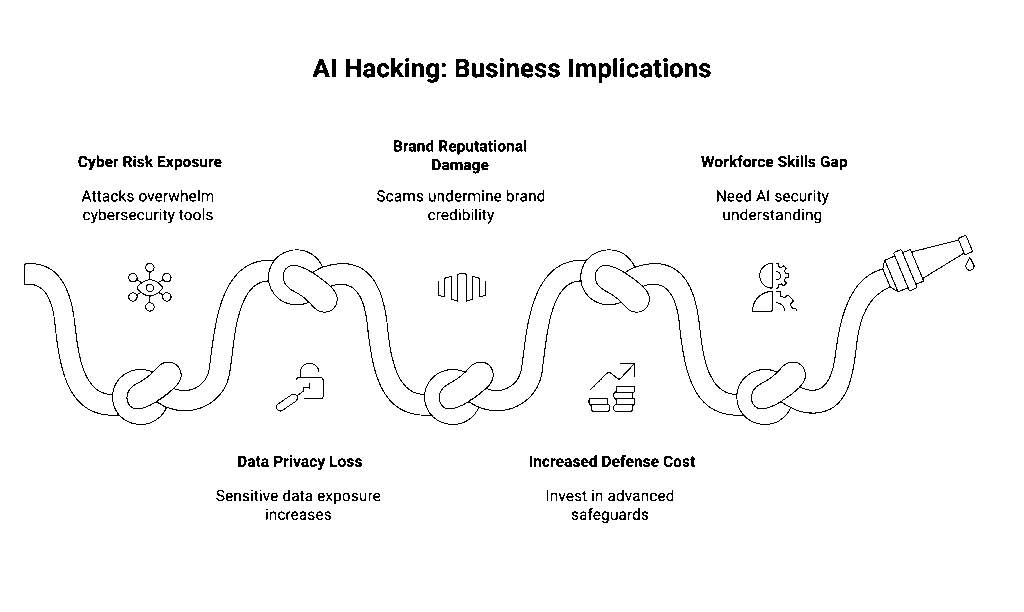

What Are the Business Implications of AI Hacking?

AI hacking introduces material risks across security, compliance, and operational resilience.

Expanded Cyber Risk Exposure

AI-powered attacks increase the frequency and severity of cyberattacks, overwhelming traditional cybersecurity tools that rely on static rules and signatures.

Data Privacy and Sensitive Information Loss

AI-driven phishing and malware increase the likelihood of sensitive data exposure, impacting customer trust and regulatory compliance.

Brand and Reputational Damage

Deepfake-enabled scams and social engineering attacks can directly undermine brand credibility, especially when executives or employees are impersonated.

Increased Cost of Defense

Organizations must invest in advanced safeguards, including AI security controls, real-time monitoring, and continuous threat intelligence to keep pace with AI hackers.

Workforce and Skills Gap

Cybersecurity professionals must now understand both traditional security and AI system risks, including prompt injection, jailbreaking, and model misuse.

Securing Organizations Against AI Hacking

To counter AI-enabled threats, enterprises must evolve their security posture by:

- Implementing AI-specific safeguards and guardrails

- Monitoring AI agent behavior in real time

- Securing APIs, datasets, and training data

- Applying least-privilege access across AI systems

- Integrating AI threat intelligence into incident response workflows

AI hacking is not a future risk—it is a present reality. Organizations that treat AI security as a core part of their cybersecurity strategy will be best positioned to defend against the next generation of cyber threats.

About WitnessAI

WitnessAI is the confidence layer for enterprise AI, providing the unified platform to observe, control, and protect all AI activity. We govern your entire workforce, human employees and AI agents alike, with network-level visibility and intent-based controls. We deliver runtime security for models, applications, and agents. Our single-tenant architecture ensures data sovereignty and compliance. Learn more at witness.ai.