What is AI Agent Security?

AI agent security refers to the practices, frameworks, and controls designed to protect AI agents—autonomous or semi-autonomous systems built on large language models (LLMs) and other AI models—from misuse, vulnerabilities, and adversarial attacks. Unlike standalone AI systems, agents interact with multiple tools, APIs, and workflows, making them a prime target for cyber threats.

Securing AI agents involves protecting their functions, agent actions, data flows, and decision-making processes. This requires not only addressing traditional AI security risks (e.g., data leakage, adversarial inputs) but also extending defenses into areas unique to agentic AI, such tool manipulation and unauthorized access to enterprise endpoints.

Why AI Agents Pose Different Security Challenges

The security landscape for AI agents is significantly broader than for traditional chatbots or single-purpose LLM applications because agents combine reasoning, autonomy, and execution.

Chatbots

Chatbots primarily focus on conversational interaction and content generation. Their attack surface is typically limited to user prompts and model outputs. While still vulnerable to threats like prompt injection, phishing attempts, or accidental data leakage, chatbots usually lack direct access to enterprise systems or the ability to take autonomous action.

AI Agents

Agentic AI systems introduce a fundamentally different risk profile. They can:

- Execute workflows across multiple APIs and enterprise tools

- Make autonomous decisions about agent actions without human approval

- Access sensitive data, credentials, and internal systems

- Interact directly with real-world business processes and endpoints

This expanded functionality dramatically increases the attack surface. A compromised AI agent may not just generate unsafe output—it could trigger unauthorized API calls, modify records, exfiltrate sensitive data, or perform actions that propagate laterally across systems.

As autonomy increases, traditional application security controls become insufficient on their own. AI agents must be treated as active digital actors within the enterprise security model.

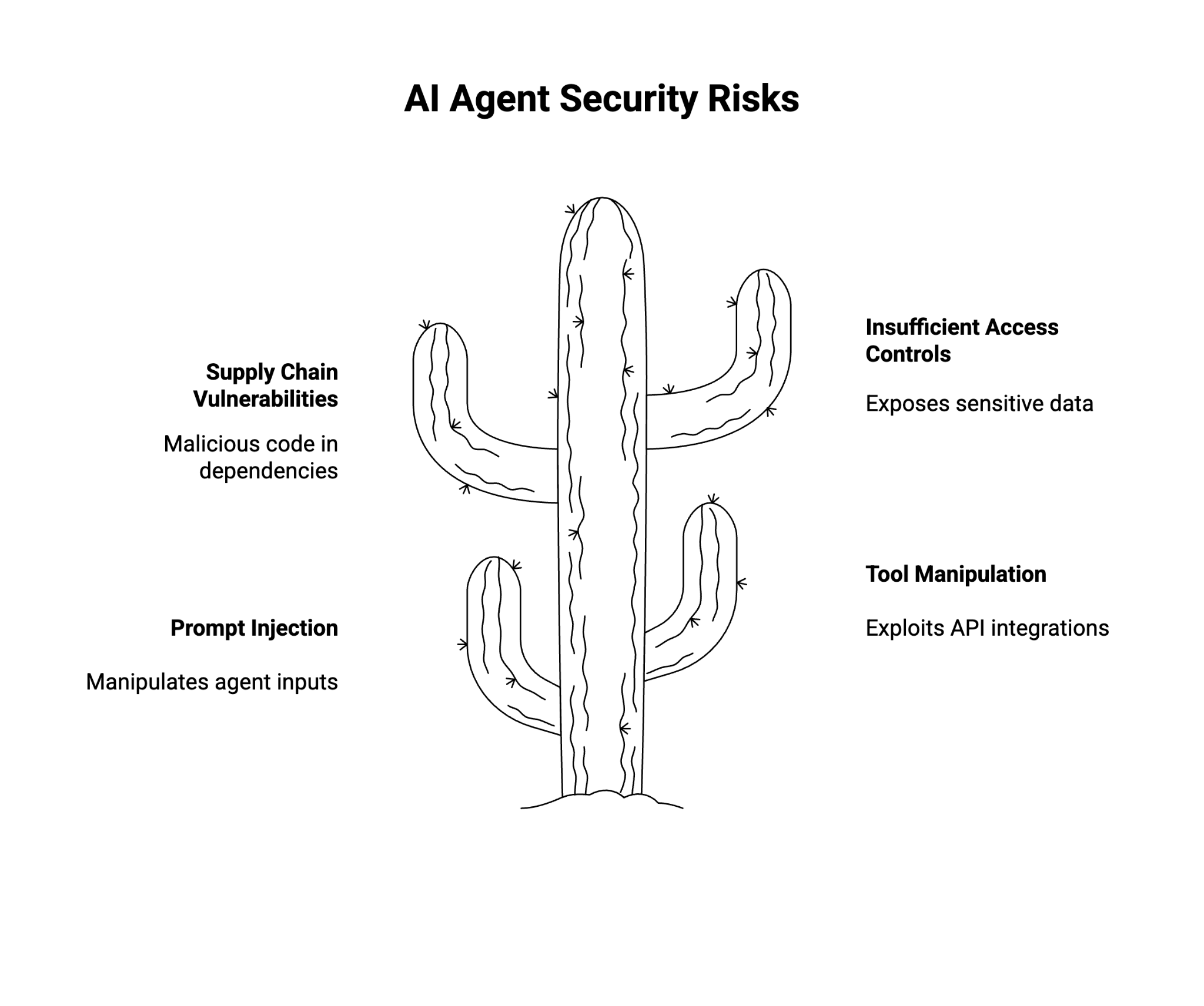

The Top Security Risks Facing AI Agents

1. Prompt Injection and Agent Hijacking

Prompt injection attacks manipulate agent inputs to override intended instructions. Malicious adversarial inputs may cause agents to exfiltrate sensitive information, reveal credentials, or execute unauthorized workflows. In severe cases, attackers can hijack agent behavior and control downstream decisions.

2. Tool Manipulation and Excessive Permissions

AI agents often rely on APIs or plugins to extend functionality. Poorly scoped permissions or weak authentication can allow unauthorized users or malicious actors to exploit these integrations. This creates risks such as unauthorized data access, workflow disruption, or denial of service attacks.

3. Supply Chain Vulnerabilities

Agent frameworks and open-source libraries power much of today’s agentic AI. While these accelerate adoption, they also introduce supply chain vulnerabilities. Attackers can inject malicious code, manipulate dependencies, or exploit unpatched libraries to compromise secure AI agents.

4. Insufficient Access Controls and Monitoring

Without strong access controls and continuous runtime monitoring, AI agents may unintentionally expose sensitive data, fail to enforce zero-trust principles, or become an entry point for broader system compromise. Weak authentication mechanisms also increase the risk of unauthorized user access.

IDC Innovators: Security for Agentic AI, 2025

This IDC Innovators presentation highlights emerging vendors addressing the security challenges of agentic AI

Download NowHow Can I Detect Risky AI Agent Behavior in Real Time?

Detecting agent behavior anomalies requires proactive, real-time monitoring. Enterprises should deploy:

- Runtime observability tools to track agent actions, API calls, and outputs.

- Threat detection frameworks that flag unusual behavior such as privilege escalation, data leakage, or abnormal automation loops.

- Guardrails around agent decision-making to prevent unauthorized workflows or excessive credential use.

- Incident response integration that can immediately quarantine compromised AI agents before risks spread laterally.

By treating AI agents as active workloads within the cybersecurity ecosystem, enterprises can build visibility into real-world agent workflows and respond before risks escalate.

How Can I Ensure the Security and Privacy of Data Processed by AI Agents?

Because AI agents often process sensitive information, data security and privacy controls must be embedded throughout the agent lifecycle.

Key safeguards include:

- Encryption at rest and in transit to protect agent inputs, outputs, and intermediate data

- Granular access controls that limit exposure to only required data fields and services

- Zero-trust authentication to verify every user, agent, and endpoint interaction

- Data minimization to prevent unnecessary collection, retention, or propagation of sensitive information

- Auditable logging and metadata tracking to support compliance, monitoring, and forensic analysis

These controls reduce the likelihood of data leaks, unauthorized disclosure, and regulatory violations while improving accountability across agent workflows.

What Is AI Agent Governance?

AI agent governance defines the policies, oversight mechanisms, and accountability structures that ensure AI agents operate safely, ethically, and in compliance with organizational and regulatory requirements.

Effective AI agent governance includes:

- Clear ownership of agent behavior and outcomes

- Policy enforcement across agent actions and tool usage

- Auditability of decisions, inputs, and outputs

- Alignment with enterprise AI risk, privacy, and compliance programs

Without governance, even well-secured agents can drift into unsafe or non-compliant behavior over time.

Learn More: AI Agent Governance: Managing the Next Generation of Intelligent Automation

How to Secure AI Agents

Securing AI agents requires a layered approach that extends beyond traditional application security.

Core controls include:

- Strong agent identity and authentication mechanisms

- Role-based and attribute-based access controls for tools and data

- Guardrails that validate inputs, outputs, and decisions

- Continuous monitoring and automated response workflows

Security should be enforced both before execution (policy checks, permissions) and during runtime (behavioral monitoring, anomaly detection).

Learn More: Securing AI Agents: How to Protect Autonomous Systems from Emerging Cyber Threats

Agentic AI Threat Modeling

Agentic AI threat modeling adapts traditional threat modeling methodologies to account for agent autonomy, reasoning, and tool execution.

This involves:

- Mapping agent workflows, tools, and decision paths

- Identifying abuse scenarios such as prompt injection or tool misuse

- Assessing impact across connected systems and APIs

- Defining mitigations at design time and runtime

By threat modeling agents early, organizations can proactively reduce risk rather than reacting to incidents post-deployment.

Learn More: Agentic AI Threat Modeling: Securing Autonomous AI Systems in Practice

AI Agent Orchestration and Security

AI agent orchestration coordinates how multiple agents interact, share context, and execute tasks. While orchestration improves scalability and efficiency, it also introduces new security considerations.

Key risks include:

- Over-privileged agents inheriting excessive access

- Unintended data sharing between agents

- Cascading failures across multi-agent workflows

Secure orchestration requires clear separation of duties, scoped permissions, and centralized monitoring across agent interactions.

Learn More: AI Agent Orchestration: Coordinating Intelligent Systems for Complex Workflows

AI Agent Vulnerabilities

AI agent vulnerabilities extend beyond model-level weaknesses and include:

- Prompt injection and indirect prompt manipulation

- Insecure API integrations

- Weak authentication and service account misuse

- Inadequate validation of agent actions and outputs

Understanding these vulnerabilities is critical to designing resilient, enterprise-grade agentic AI systems.

Learn More: AI Agent Vulnerabilities: Understanding and Mitigating Emerging Security Risks

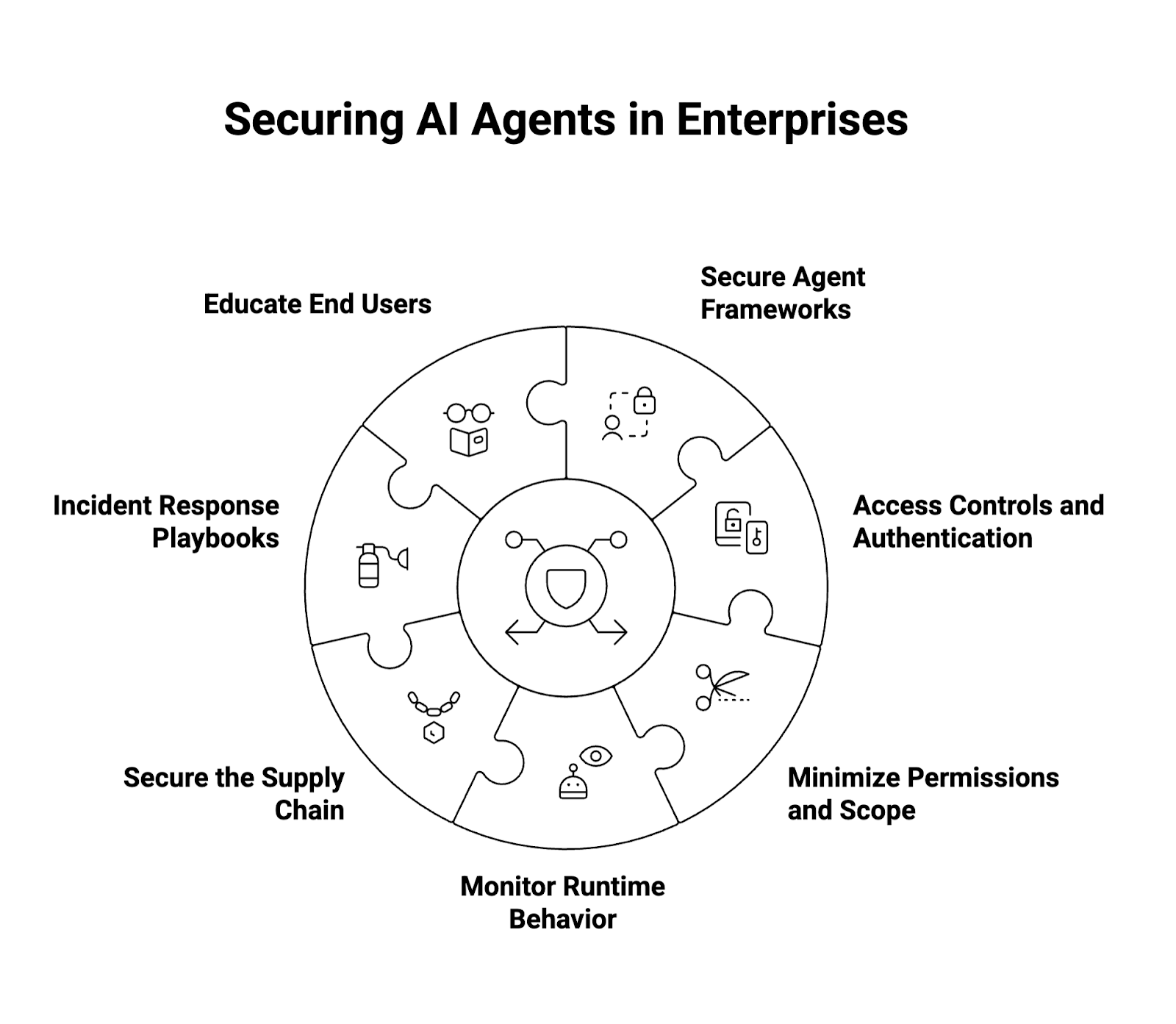

Best Practices for Ensuring the Security of AI Agents in Enterprise Environments

Enterprises adopting agentic AI should implement a defense-in-depth strategy that addresses security across agent workflows, tools, and user interactions. Recommended best practices include:

- Adopt Secure Agent Frameworks

- Choose agent frameworks with built-in security guardrails.

- Favor frameworks with strong community support and frequent security patches.

- Enforce Strong Access Controls and Authentication

- Implement role-based permissions for tools, APIs, and sensitive data.

- Require multi-factor authentication (MFA) for both users and agent services.

- Minimize Permissions and Scope

- Follow least privilege principles for APIs and endpoints.

- Regularly audit permissions to prevent privilege escalation.

- Monitor Runtime Behavior Continuously

- Deploy observability solutions to track real-time agent actions.

- Detect anomalies such as unusual credential use or automation loops.

- Secure the Supply Chain

- Vet all open-source dependencies used in agent frameworks.

- Maintain patch management workflows to address known vulnerabilities.

- Establish Incident Response Playbooks

- Prepare automated mitigation for compromised agent actions.

- Integrate AI agent security into broader enterprise cybersecurity response frameworks.

- Educate End Users

- Train employees to recognize phishing attempts, prompt injection risks, and risky outputs.

- Reinforce responsible use of agentic AI systems.

Conclusion

AI agents are reshaping enterprise automation, workflows, and decision-making, but they also expand the attack surface in ways that traditional chatbots and AI systems do not. As organizations integrate these tools into business-critical processes, AI agent security must be prioritized.

By combining strong access controls, runtime monitoring, zero-trust principles, and secure frameworks, enterprises can build secure AI agents capable of driving innovation while protecting sensitive information and mitigating real-world risks.

About WitnessAI

WitnessAI is the confidence layer for enterprise AI, providing the unified platform to observe, control, and protect all AI activity. We govern your entire workforce, human employees and AI agents alike, with network-level visibility and intent-based controls. We deliver runtime security for models, applications, and agents. Our single-tenant architecture ensures data sovereignty and compliance. Learn more at witness.ai.