What is AI Agent Governance?

AI agent governance refers to the set of policies, processes, and technical controls that ensure AI agents—autonomous or semi-autonomous systems powered by large language models (LLMs)—operate safely, ethically, and in alignment with business objectives. As enterprises adopt agentic AI systems that can make decisions, trigger APIs, and execute real-time actions, effective governance becomes essential for maintaining control over agent behavior, outputs, and permissions.

Unlike static AI models, AI agents are dynamic—they reason, plan, and act across multiple workflows and data sources. This autonomy creates powerful use cases in industries such as healthcare, finance, and customer support, but also introduces new risks around authentication, access controls, and compliance. Governing AI agents therefore involves establishing a governance framework that defines their lifecycle, risk management, and observability requirements from development through deployment.

Why is AI Agent Governance Important?

As organizations integrate AI-powered systems into critical infrastructure, governance ensures that autonomous agents act responsibly, securely, and transparently. According to Gartner, by 2026, more than half of enterprises will deploy AI agents to automate functions like support, analysis, and content generation. Without proper oversight, these agents could expose systems to vulnerabilities, make biased decisions, or misuse sensitive data.

Key reasons why AI agent governance is vital include:

- Risk reduction: Reduces exposure to AI risk scenarios such as data exfiltration, security breaches, and prompt-based manipulation (prompt injection).

- Compliance: Aligns AI systems with emerging regulations and governance frameworks (e.g., the EU AI Act and the NIST AI Risk Management Framework, or AI RMF).

- Accountability: Enables stakeholders to trace agent decisions and assign responsibility for actions.

- Optimization: Ensures automation and decision-making are efficient, consistent, and aligned with corporate values.

Without clear governance, enterprises risk creating “runaway automation” where agents act beyond their intended functions, damaging trust and operational integrity.

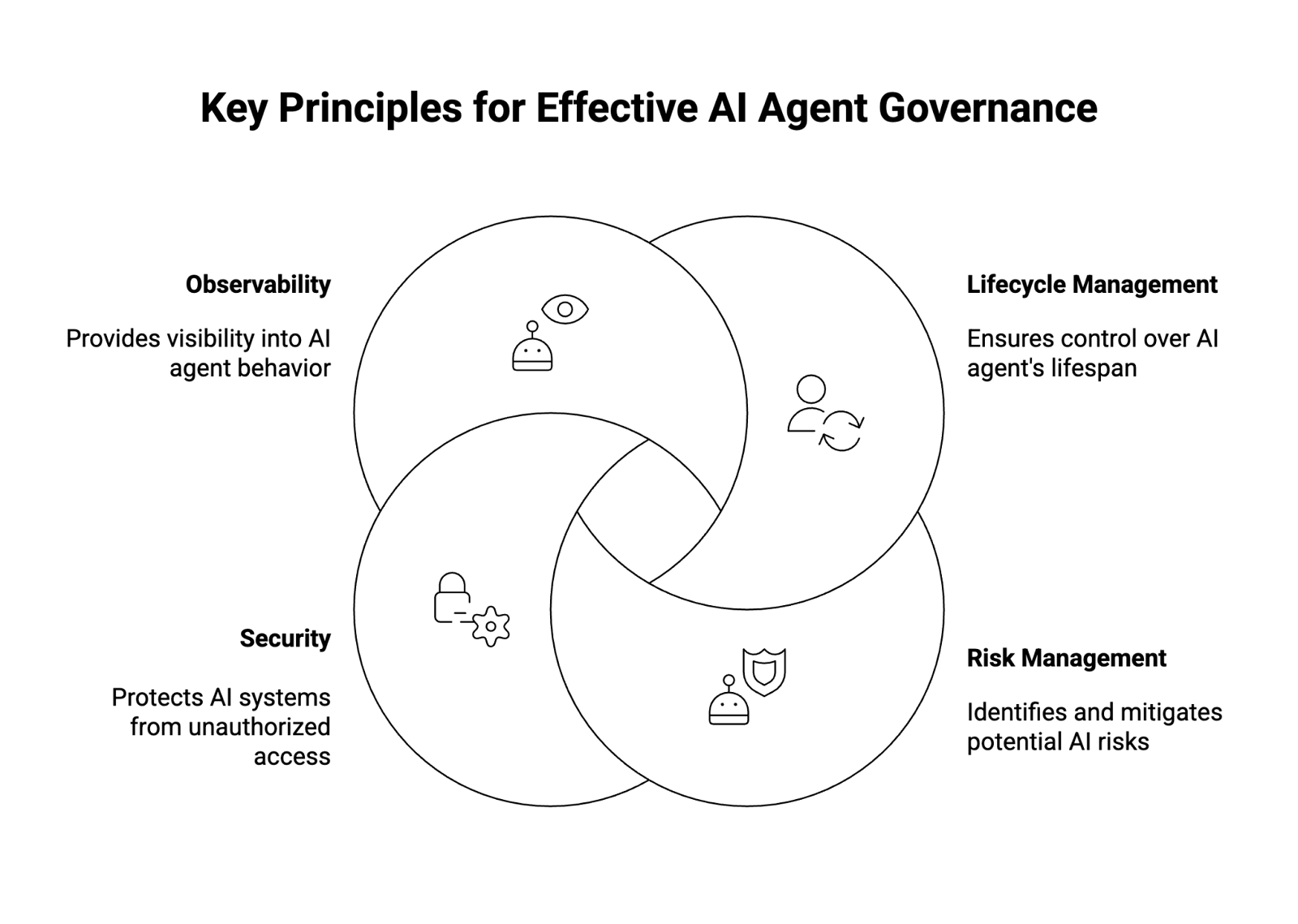

Key Principles for Effective AI Agent Governance

1. Lifecycle Management

Lifecycle management establishes control over the entire lifespan of an AI agent—from development to decommissioning. It includes documenting use cases, defining role-based access controls (RBAC), validating outputs, and setting rules for continuous monitoring.

An effective lifecycle management process includes:

- Development oversight: Ensure design choices are reviewed for bias and security.

- Testing and validation: Simulate real-world conditions to test agent decisions before deployment.

- Version control: Maintain audit logs of changes to agent functions and AI models.

- Retirement protocols: Decommission outdated or vulnerable agents securely.

This structured approach mirrors software lifecycle practices but tailors them to AI systems whose behavior can keep changing after deployment, for example when the underlying model, tools, or data are updated.
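The lifecycle stages above can be sketched as a small state machine that rejects invalid stage changes and records every change in an audit log. This is a minimal illustration, not a reference implementation: the stage names, the `ALLOWED` transition table, and the `AgentRecord` class are all assumptions made for this example.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from enum import Enum


class Stage(Enum):
    DEVELOPMENT = "development"
    TESTING = "testing"
    DEPLOYED = "deployed"
    RETIRED = "retired"


# Hypothetical transition table: which stage changes the policy permits.
ALLOWED = {
    Stage.DEVELOPMENT: {Stage.TESTING, Stage.RETIRED},
    Stage.TESTING: {Stage.DEVELOPMENT, Stage.DEPLOYED, Stage.RETIRED},
    Stage.DEPLOYED: {Stage.TESTING, Stage.RETIRED},
    Stage.RETIRED: set(),  # a retired agent cannot be revived
}


@dataclass
class AgentRecord:
    name: str
    version: str
    stage: Stage = Stage.DEVELOPMENT
    audit_log: list = field(default_factory=list)

    def transition(self, new_stage: Stage, approved_by: str) -> None:
        """Move the agent to a new stage, or raise if the policy forbids it."""
        if new_stage not in ALLOWED[self.stage]:
            raise ValueError(
                f"{self.stage.value} -> {new_stage.value} is not allowed"
            )
        # Record who approved the change and when, before applying it.
        self.audit_log.append({
            "at": datetime.now(timezone.utc).isoformat(),
            "from": self.stage.value,
            "to": new_stage.value,
            "version": self.version,
            "approved_by": approved_by,
        })
        self.stage = new_stage
```

For example, `AgentRecord("invoice-bot", "1.0.0").transition(Stage.DEPLOYED, "alice")` raises `ValueError`, because in this sketch an agent has to pass through testing before it can be deployed.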

2. Risk Management

Risk management within AI agent governance involves identifying, evaluating, and mitigating risks associated with autonomous agents and generative AI outputs. As AI models become increasingly complex, organizations must map potential failure points across data pipelines, APIs, and decision workflows.

Key strategies include:

- Defining risk metrics: Establish measurable KPIs for accuracy, bias, and vulnerabilities.

- Human-in-the-loop review: Require human oversight for high-impact agent decisions.

- Scenario planning: Model worst-case events (e.g., unauthorized transactions or harmful content generation).

- Real-time monitoring: Detect and mitigate anomalous agent behavior through continuous tracking.

A mature governance platform should incorporate automated alerts for deviations from normal operational parameters, enabling early intervention.
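Two of the strategies above, human-in-the-loop review and alerts on deviations from normal behavior, can be sketched in a few lines. The action kinds, the amount limit, and the z-score threshold below are hypothetical values chosen for illustration. A real deployment would derive them from its own risk metrics and would likely use a more robust anomaly detector.

```python
from dataclasses import dataclass
from statistics import mean, pstdev


@dataclass
class ProposedAction:
    agent: str
    kind: str
    amount: float


# Hypothetical policy values for this sketch.
HIGH_IMPACT_KINDS = {"payment", "delete_records"}
AMOUNT_LIMIT = 1_000.0


def requires_human_review(action: ProposedAction) -> bool:
    """Route high-impact or high-value actions to a human approver."""
    return action.kind in HIGH_IMPACT_KINDS or action.amount > AMOUNT_LIMIT


def is_anomalous(history: list, value: float, z_threshold: float = 3.0) -> bool:
    """Flag a value that lies far from the agent's historical baseline.

    Uses a simple z-score against past observations.
    """
    if len(history) < 2:
        return False  # not enough data to define "normal"
    sigma = pstdev(history)
    if sigma == 0:
        return value != history[0]
    return abs(value - mean(history)) / sigma > z_threshold
```

Under these assumptions, a 5,000-unit refund would be held for approval even though `refund` is not in the high-impact set, and a sudden jump from roughly 10 API calls per minute to 50 would trigger an alert.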

3. Security

Security is the backbone of AI agent governance. Because AI agents often integrate with sensitive data sources, third-party providers, and internal systems via APIs, weak access controls can expose the enterprise to significant threats.

Core security measures include:

- Authentication and authorization: Use established standards such as OAuth 2.0 (delegated authorization) and SAML (federated authentication) to verify identity and enforce permissions.

- Guardrails for generative outputs: Implement policy filters to block data leakage or malicious code generation.

- Zero-trust architecture: Treat every AI agent as untrusted by default, verifying each request and enforcing strict, least-privilege role-based access controls.

- Runtime protection: Deploy monitoring tools that secure real-time interactions between AI systems and external interfaces.

In sensitive sectors like healthcare or finance, these security layers prevent autonomous agents from taking unauthorized or harmful actions that could violate privacy or compliance regulations.
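As a rough illustration of two of these measures, the sketch below combines a deny-by-default permission check on agent tool calls with a simple output filter. The role names, permission strings, and regular expressions are invented for this example. Pattern-based redaction in particular is only a first line of defense and will miss many forms of sensitive data.

```python
import re

# Hypothetical role-to-permission mapping; anything not listed is denied.
ROLE_PERMISSIONS = {
    "support_agent": {"tickets:read", "tickets:comment"},
    "billing_agent": {"invoices:read", "refunds:create"},
}


def is_allowed(role: str, permission: str) -> bool:
    """Deny by default: unknown roles and unlisted permissions both fail."""
    return permission in ROLE_PERMISSIONS.get(role, set())


def call_tool(role: str, permission: str, tool, *args):
    """Check the permission on every call, then invoke the tool."""
    if not is_allowed(role, permission):
        raise PermissionError(f"role {role!r} lacks {permission!r}")
    return tool(*args)


# Illustrative patterns only: US SSN-like numbers and email addresses.
SENSITIVE_PATTERNS = [
    re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
]


def redact(text: str) -> str:
    """Replace matches of the sensitive patterns before output leaves the agent."""
    for pattern in SENSITIVE_PATTERNS:
        text = pattern.sub("[REDACTED]", text)
    return text
```

Because the check runs on every call rather than once per session, a role whose permissions are revoked loses access on its next tool invocation.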

4. Observability

Observability provides visibility into what AI agents are doing and why. In complex ecosystems where multiple agents orchestrate workflows, tracing actions and outcomes is crucial for maintaining accountability.

Effective observability requires:

- Logging and auditing: Record every agent decision, API call, and output for forensic analysis.

- Metrics dashboards: Track performance indicators like latency, success rate, and anomaly rate.

- Behavioral analytics: Detect drift or unexpected agent behavior.

- Feedback loops: Continuously refine AI models based on observed errors or bias.

By combining observability with continuous monitoring, organizations can ensure that AI-powered operations remain explainable, reliable, and compliant over time.
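One way to approach the logging and metrics requirements above is to wrap every agent action in a decorator that emits a structured audit record. The sketch below is illustrative only: `AUDIT_LOG` is an in-memory stand-in for an append-only store, and `audited`, `success_rate`, and `lookup_order` are hypothetical names, not part of any particular product or library.

```python
import json
import time
import uuid
from functools import wraps

AUDIT_LOG = []  # stand-in for a durable, append-only audit store


def audited(agent_id: str):
    """Decorator that records each call's inputs, outcome, and latency."""
    def decorator(fn):
        @wraps(fn)
        def wrapper(*args, **kwargs):
            record = {
                "id": str(uuid.uuid4()),
                "agent": agent_id,
                "action": fn.__name__,
                "args": repr(args),
                "kwargs": repr(kwargs),
            }
            start = time.perf_counter()
            try:
                result = fn(*args, **kwargs)
                record["status"] = "ok"
                return result
            except Exception as exc:
                record["status"] = "error"
                record["error"] = repr(exc)
                raise
            finally:
                # Runs on success and failure, so every call is logged.
                elapsed_ms = (time.perf_counter() - start) * 1000
                record["latency_ms"] = round(elapsed_ms, 3)
                AUDIT_LOG.append(json.dumps(record))
        return wrapper
    return decorator


def success_rate(log: list) -> float:
    """Share of logged actions that completed without an exception."""
    records = [json.loads(line) for line in log]
    if not records:
        return 0.0
    return sum(r["status"] == "ok" for r in records) / len(records)


@audited("support-agent-1")
def lookup_order(order_id: str) -> dict:
    """Hypothetical agent action used to demonstrate the decorator."""
    if not order_id:
        raise ValueError("order_id is required")
    return {"order_id": order_id, "status": "shipped"}
```

Each entry is a JSON line, so the same records can feed both forensic review and a metrics dashboard, with `success_rate(AUDIT_LOG)` as one example of a derived indicator.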

Key Challenges in Implementing AI Agent Governance

Implementing AI agent governance is not without challenges. Enterprises face both technical and organizational barriers, including:

- Complexity of agent ecosystems: Large organizations often deploy hundreds of AI agents across departments, each interacting with unique data sources and APIs, making centralized control difficult.

- Lack of standard frameworks: While traditional IT governance models exist, few are designed for the adaptive and probabilistic nature of agentic AI.

- Data privacy concerns: Managing permissions and access controls becomes complicated when agents must read sensitive data to make decisions.

- Interoperability: Ensuring agents from different providers (e.g., Microsoft, OpenAI) follow consistent governance rules can be technically challenging.

- Skill gaps: Many teams lack expertise in AI ethics, risk management, and cybersecurity, which are foundational to building robust governance programs.

- Resistance to oversight: Teams eager to adopt automation may view governance as a barrier to innovation rather than an enabler of sustainable growth.

Overcoming these challenges requires a balance between innovation and control—establishing guardrails that empower responsible use without hindering productivity.

Benefits of Implementing AI Agent Governance

Organizations that adopt structured AI agent governance gain multiple advantages:

- Improved security posture: Strong authentication, access control, and runtime protection reduce exposure to vulnerabilities.

- Transparency and trust: With detailed observability and auditing, stakeholders can trust AI-driven decision-making.

- Operational efficiency: Governance frameworks streamline workflows, reduce redundant automation, and optimize performance metrics.

- Regulatory compliance: Proactive governance aligns with international AI governance frameworks, easing audit and compliance readiness.

- Innovation with safety: Developers can confidently deploy AI-powered chatbots, copilots, and autonomous agents knowing they operate within approved guardrails.

Ultimately, AI agent governance transforms reactive oversight into proactive assurance—helping organizations scale automation responsibly while safeguarding users, data, and brand reputation.

Conclusion

As artificial intelligence evolves toward autonomy, AI agent governance is becoming the new frontier of responsible enterprise AI. It bridges the gap between innovation and oversight, providing the tools and policies necessary to orchestrate autonomous agents safely and effectively. By embedding governance principles like lifecycle management, risk management, security, and observability into every stage of AI agent development, enterprises can unlock automation’s full potential—without losing control.

About WitnessAI

WitnessAI is the confidence layer for enterprise AI, providing the unified platform to observe, control, and protect all AI activity. We govern your entire workforce, human employees and AI agents alike, with network-level visibility and intent-based controls. We deliver runtime security for models, applications, and agents. Our single-tenant architecture ensures data sovereignty and compliance. Learn more at witness.ai.