As organizations deploy increasingly autonomous AI agents across business-critical workflows, the question of what an AI agent can access, and what it can do with that access, becomes a core security concern. Unlike traditional applications, AI agents can make decisions, trigger API calls, and interact with sensitive data sources in real time, often without continuous human oversight.

This article explores AI agent access control, why it is essential for enterprise security, how it works in practice, the key challenges organizations face, and best practices for implementing robust access controls to protect modern agentic AI systems.

What Is AI Agent Access Control?

AI agent access control refers to the policies, mechanisms, and enforcement layers that govern how AI agents authenticate, what resources they can access, and which actions they are permitted to perform within AI systems.

At a high level, AI agent access control determines:

- Agent identity – How an AI agent is uniquely identified

- Authentication – How an agent proves its identity (e.g., OAuth, API keys, access tokens)

- Authorization – What permissions the agent has (e.g., read-only vs. write access)

- Scope of access – Which datasets, APIs, endpoints, and apps the agent can interact with

- Context-aware enforcement – How permissions change based on real-time context

Unlike human users, AI agents often operate continuously, invoke functions, and act autonomously—making access control a foundational component of AI agent security.
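
As a rough illustration, the five elements above can be captured in a single record per agent. The sketch below is a minimal example, not a reference design: `AgentAccessProfile`, `is_permitted`, and all field names and values are hypothetical and chosen only for this article.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class AgentAccessProfile:
    """Hypothetical record covering the five elements listed above."""
    agent_id: str                       # agent identity
    auth_method: str                    # authentication, e.g. "oauth" or "api_key"
    permissions: frozenset[str]         # authorization, e.g. {"read"}
    allowed_resources: frozenset[str]   # scope of access
    allowed_environments: frozenset[str] = frozenset({"dev"})  # context


def is_permitted(profile: AgentAccessProfile, action: str,
                 resource: str, environment: str) -> bool:
    """Allow a request only if every dimension of the profile matches."""
    return (
        action in profile.permissions
        and resource in profile.allowed_resources
        and environment in profile.allowed_environments
    )


profile = AgentAccessProfile(
    agent_id="support-bot-01",
    auth_method="oauth",
    permissions=frozenset({"read"}),
    allowed_resources=frozenset({"tickets-api"}),
)
print(is_permitted(profile, "read", "tickets-api", "dev"))   # True
print(is_permitted(profile, "write", "tickets-api", "dev"))  # False
```

Keeping all five dimensions in one immutable record makes it easier to review what a given agent is allowed to do in a single place.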

Why Do AI Agents Need Access Control?

Without strong access controls, AI agents dramatically expand an organization’s attack surface. Because agents can automate decisions and execute actions, misconfigured permissions can quickly lead to data leakage, abuse, or compliance violations.

Key Reasons Access Control Is Critical

- Protect Sensitive Data

  AI agents frequently interact with sensitive information, including customer data, internal documents, metadata, and regulated datasets. Unrestricted access increases the risk of data breaches.

- Prevent Unauthorized Agent Actions

  An agent with excessive permissions can:

  - Modify production systems

  - Call high-risk APIs

  - Trigger downstream automation unintentionally

- Reduce Security Risks from Prompt Injection

  Attacks such as prompt injection can manipulate agent behavior. Access controls limit what an agent can do even if its reasoning is compromised.

- Support Regulatory Compliance

  Regulations such as GDPR require strict controls over data access, along with auditability and accountability. These requirements extend to AI-powered agents.

- Enable Safe Automation at Scale

  As enterprises deploy more bots, chatbots, and AI-driven assistants, access control ensures automation does not outpace governance.

How Does AI Agent Access Control Work?

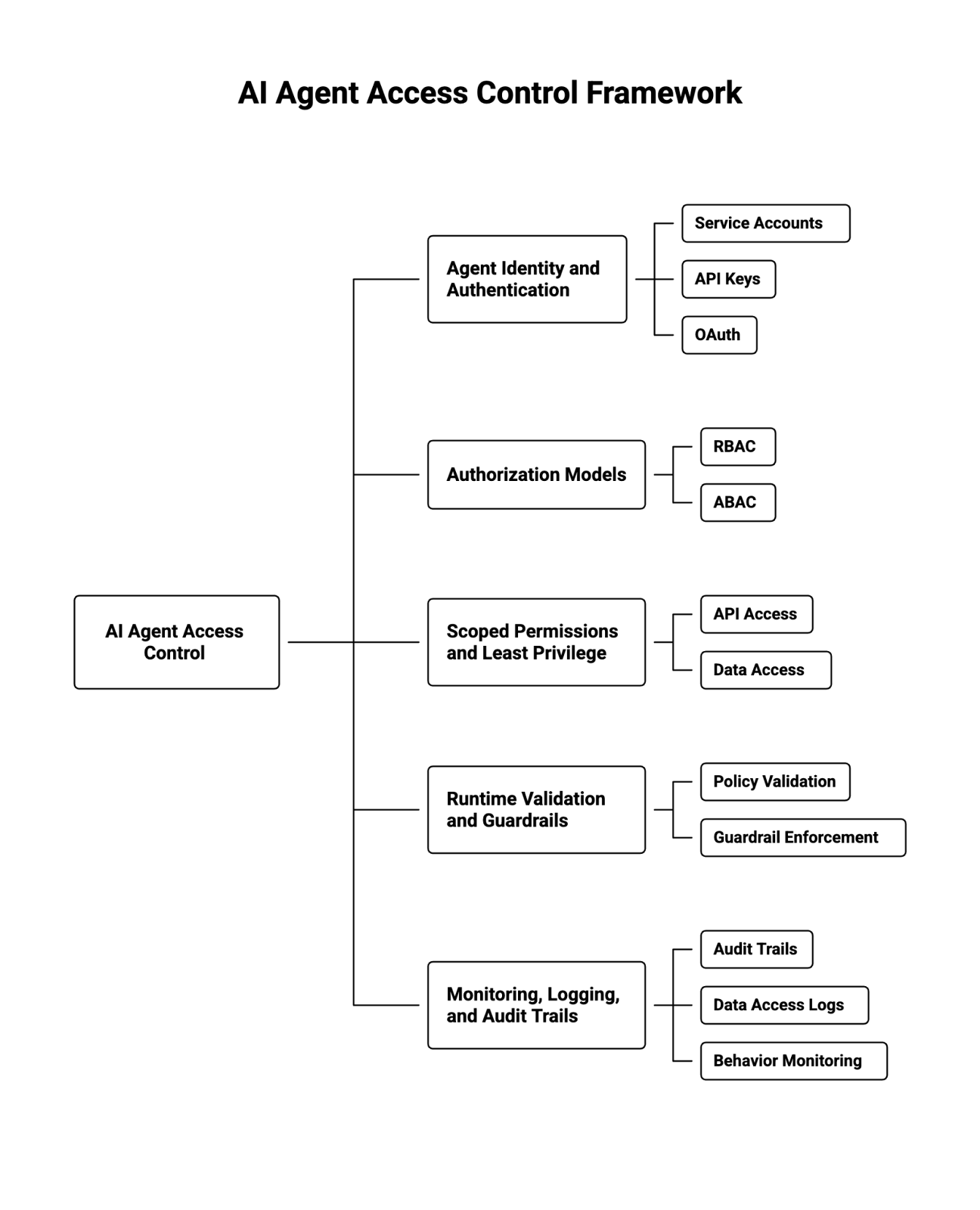

AI agent access control combines identity management, policy enforcement, and runtime validation to govern agent access across systems.

1. Agent Identity and Authentication

Every AI agent must have a distinct identity, separate from human users. Common approaches include:

- Service accounts tied to specific agents

- API keys and access tokens

- OAuth 2.0 flows, such as the client credentials grant for machine-to-machine access

- Integration with enterprise identity management systems

This ensures each agent can be uniquely tracked and constrained.
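
To make the idea of a per-agent credential concrete, here is a minimal sketch of issuing and verifying a short-lived, HMAC-signed token using only the Python standard library. It is a simplified stand-in, not OAuth and not a production scheme: `SECRET_KEY`, `issue_token`, `verify_token`, and the token layout are all assumptions made for illustration, and a real deployment would more likely rely on an established identity provider or token library.

```python
from __future__ import annotations

import base64
import hashlib
import hmac
import json
import time

# Assumption: a real system would load this from a secrets manager.
SECRET_KEY = b"demo-only-secret"


def issue_token(agent_id: str, ttl_seconds: int = 300,
                now: float | None = None) -> str:
    """Create a signed token that names one agent and expires quickly."""
    issued = time.time() if now is None else now
    payload = json.dumps({"sub": agent_id, "exp": issued + ttl_seconds}).encode()
    body = base64.urlsafe_b64encode(payload).decode()
    signature = hmac.new(SECRET_KEY, body.encode(), hashlib.sha256).hexdigest()
    return f"{body}.{signature}"


def verify_token(token: str, now: float | None = None) -> str | None:
    """Return the agent id if the token is authentic and unexpired, else None."""
    body, _, signature = token.rpartition(".")
    expected = hmac.new(SECRET_KEY, body.encode(), hashlib.sha256).hexdigest()
    # Constant-time comparison avoids leaking signature bytes via timing.
    if not hmac.compare_digest(signature, expected):
        return None
    claims = json.loads(base64.urlsafe_b64decode(body))
    current = time.time() if now is None else now
    return claims["sub"] if current < claims["exp"] else None


token = issue_token("billing-agent", ttl_seconds=60)
print(verify_token(token))  # "billing-agent" while the token is still valid
```

Because the agent id is embedded in the signed payload, every verified request can be attributed to exactly one agent, and a short expiry limits how long a leaked token remains useful.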

2. Authorization Models: RBAC and ABAC

Once authenticated, agents are authorized using formal access control models:

- Role-Based Access Control (RBAC)

  Agents are assigned roles (e.g., “support-agent,” “data-reader”), each with predefined permissions.

- Attribute-Based Access Control (ABAC)

  Access decisions are made dynamically based on attributes such as:

  - Agent type

  - Data sensitivity

  - Environment (prod vs. dev)

  - Risk level or use case

ABAC is particularly valuable for context-aware, real-time AI systems.
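
The two models can be combined in a few lines. The sketch below is illustrative only: the role names come from the examples above, while the permission strings, the attribute keys, and the single ABAC rule are hypothetical and would be replaced by an organization's own policy.

```python
# RBAC: each role maps to a fixed set of permissions (hypothetical values).
ROLE_PERMISSIONS = {
    "support-agent": {"tickets:read", "tickets:write"},
    "data-reader": {"reports:read"},
}


def rbac_allows(role: str, permission: str) -> bool:
    """Static check: does the role include this permission?"""
    return permission in ROLE_PERMISSIONS.get(role, set())


def abac_allows(attrs: dict) -> bool:
    """Dynamic check on request attributes.

    Example rule (an assumption for this sketch): highly sensitive data
    in production is only reachable when the request is rated low risk.
    """
    if attrs.get("environment") == "prod" and attrs.get("data_sensitivity") == "high":
        return attrs.get("risk_level") == "low"
    return True


def authorize(role: str, permission: str, attrs: dict) -> bool:
    """Grant access only when both the role and the context allow it."""
    return rbac_allows(role, permission) and abac_allows(attrs)


request = {"environment": "prod", "data_sensitivity": "high", "risk_level": "medium"}
print(authorize("data-reader", "reports:read", request))  # False: context too risky
```

Here RBAC answers "is this kind of agent ever allowed to do this?", and ABAC answers "is it allowed right now, given the context?".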

3. Scoped Permissions and Least Privilege

Effective AI agent access control enforces the principle of least privilege:

- Grant only the minimum permissions required

- Limit access to specific APIs, endpoints, and datasets

- Restrict write or delete actions unless absolutely necessary

This limits blast radius if an agent is compromised.
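
One way to picture least privilege is a deny-by-default policy in which every permitted method and endpoint must be granted explicitly. The following is a minimal sketch under that assumption; `Grant`, `ScopedPolicy`, and the example paths are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Grant:
    method: str       # HTTP method, e.g. "GET"
    path_prefix: str  # endpoint prefix the grant covers


class ScopedPolicy:
    """Deny by default; allow only requests that match an explicit grant."""

    def __init__(self) -> None:
        self._grants: set[Grant] = set()

    def grant(self, method: str, path_prefix: str) -> None:
        self._grants.add(Grant(method.upper(), path_prefix.rstrip("/")))

    def allows(self, method: str, path: str) -> bool:
        for g in self._grants:
            same_method = g.method == method.upper()
            # Match the exact path or a sub-path, not a lookalike prefix
            # such as "/v1/tickets-admin".
            in_scope = path == g.path_prefix or path.startswith(g.path_prefix + "/")
            if same_method and in_scope:
                return True
        return False


policy = ScopedPolicy()
policy.grant("GET", "/v1/tickets")
print(policy.allows("GET", "/v1/tickets/42"))     # True
print(policy.allows("DELETE", "/v1/tickets/42"))  # False: never granted
```

Because nothing is allowed until it is granted, a compromised agent can only reach the endpoints and methods it was explicitly given.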

4. Runtime Validation and Guardrails

Modern AI agent platforms apply access checks at runtime:

- Validate each API call against security policies

- Enforce guardrails on agent actions

- Block unauthorized requests before execution

This is especially important for large language models (LLMs) that dynamically generate actions or API calls.
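
A runtime check of this kind can be sketched as a thin wrapper that sits between the model and the tools it proposes to call. This is a simplified illustration, not any particular platform's API: the call format (`{"tool": ..., "args": ...}`), `TOOL_POLICY`, `get_ticket`, and `UnauthorizedAction` are all hypothetical.

```python
class UnauthorizedAction(Exception):
    """Raised when a proposed tool call violates the policy."""


def get_ticket(ticket_id: str) -> dict:
    # Stand-in for a real backend call.
    return {"ticket_id": ticket_id, "status": "open"}


# Hypothetical registry: tool name -> (implementation, allowed argument names).
TOOL_POLICY = {
    "get_ticket": (get_ticket, {"ticket_id"}),
}


def execute_tool_call(call: dict):
    """Validate a model-proposed call against policy, then run it."""
    name = call.get("tool")
    args = call.get("args", {})
    if name not in TOOL_POLICY:
        raise UnauthorizedAction(f"tool not allowed: {name!r}")
    func, allowed_args = TOOL_POLICY[name]
    unexpected = set(args) - allowed_args
    if unexpected:
        raise UnauthorizedAction(f"unexpected arguments: {sorted(unexpected)}")
    return func(**args)


print(execute_tool_call({"tool": "get_ticket", "args": {"ticket_id": "T-1"}}))

try:
    execute_tool_call({"tool": "delete_user", "args": {"user_id": "42"}})
except UnauthorizedAction as exc:
    print("blocked:", exc)
```

The key design choice is that the check runs outside the model: even if a prompt injection convinces the model to request `delete_user`, the call is rejected before anything executes.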

5. Monitoring, Logging, and Audit Trails

Access control is incomplete without visibility. Enterprises should maintain:

- Detailed audit trails of agent actions

- Logs of data access and API usage

- Monitoring for anomalous agent behavior

These controls support incident response and compliance audits.
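
As a minimal sketch of what an audit trail entry might contain, the example below writes one JSON line per access decision. The field names and the `log_agent_action` helper are assumptions for illustration; real deployments would typically ship these records to a central, tamper-resistant log store.

```python
import io
import json
from datetime import datetime, timezone


def log_agent_action(stream, agent_id, action, resource, allowed, when=None):
    """Append one JSON line describing an access decision."""
    when = when or datetime.now(timezone.utc)
    record = {
        "timestamp": when.isoformat(),
        "agent_id": agent_id,
        "action": action,
        "resource": resource,
        "allowed": allowed,
    }
    stream.write(json.dumps(record, sort_keys=True) + "\n")
    return record


# An in-memory buffer stands in for a log file or log pipeline.
buffer = io.StringIO()
log_agent_action(buffer, "support-bot-01", "read", "tickets-api", True)
log_agent_action(buffer, "support-bot-01", "delete", "tickets-api", False)

records = [json.loads(line) for line in buffer.getvalue().splitlines()]
denied = [r for r in records if not r["allowed"]]
print(len(denied))  # 1
```

Recording denied requests as well as allowed ones matters: repeated denials are often the earliest signal that an agent is misconfigured or being manipulated.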

Key Challenges in Securing AI Agents

While access control is well understood in traditional IT systems, AI agents introduce unique security challenges.

1. Dynamic and Non-Deterministic Behavior

AI agents do not follow static code paths. Their behavior can change based on prompts, context, or model updates—making it harder to predict access patterns.

2. Over-Permissioned Agents

To “make things work,” teams often grant broad access during development. These permissions frequently persist into production, increasing security risks.

3. Complex Supply Chain Dependencies

AI agents rely on:

- External APIs

- Third-party tools

- Model providers

- Plugins and integrations

Each dependency introduces additional access and supply chain risk.

4. Limited Visibility into Agent Actions

Without proper logging, security teams may not know:

- Which data an agent accessed

- Why a decision was made

- Whether actions were authorized

This undermines accountability.

5. Scaling Access Management

As organizations deploy dozens or hundreds of agents across use cases, assigning and reviewing permissions by hand stops scaling without centralized controls.

Best Practices for Implementing Access Control in AI Agents

To secure AI agents effectively, organizations should adopt the following best practices.

1. Establish Strong Agent Identity Management

- Use unique service accounts per agent

- Avoid shared credentials

- Rotate API keys and access tokens regularly

- Integrate agents into enterprise IAM systems
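
Regular rotation is easier to enforce when stale credentials are flagged automatically. The sketch below assumes a simple inventory mapping key ids to creation times; the function name, the inventory format, and the 90-day limit are illustrative choices, not a recommendation from any specific standard.

```python
from __future__ import annotations

from datetime import datetime, timedelta, timezone


def keys_due_for_rotation(created_at: dict[str, datetime],
                          max_age: timedelta,
                          now: datetime | None = None) -> list[str]:
    """Return the ids of keys older than max_age, sorted for stable output."""
    now = now or datetime.now(timezone.utc)
    return sorted(
        key_id for key_id, created in created_at.items()
        if now - created > max_age
    )


# Hypothetical inventory: one key per agent, with its creation time.
inventory = {
    "support-bot-01": datetime(2024, 1, 1, tzinfo=timezone.utc),
    "billing-agent": datetime(2024, 5, 1, tzinfo=timezone.utc),
}
check_time = datetime(2024, 6, 1, tzinfo=timezone.utc)
print(keys_due_for_rotation(inventory, timedelta(days=90), now=check_time))
# ['support-bot-01']
```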

2. Apply the Principle of Least Privilege

- Start with no access by default

- Grant permissions incrementally

- Separate read, write, and execute privileges

- Revoke unused permissions proactively
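
Unused permissions can be found by comparing what each agent has been granted with what it has actually exercised, for example as derived from audit logs. The sketch below assumes both are available as simple mappings; `unused_permissions` and the permission strings are hypothetical.

```python
from __future__ import annotations


def unused_permissions(granted: dict[str, set[str]],
                       used: dict[str, set[str]]) -> dict[str, set[str]]:
    """Return, per agent, the granted permissions that were never exercised."""
    report = {}
    for agent_id, perms in granted.items():
        unused = perms - used.get(agent_id, set())
        if unused:
            report[agent_id] = unused
    return report


granted = {
    "support-bot-01": {"tickets:read", "tickets:write", "tickets:delete"},
    "data-reader-02": {"reports:read"},
}
# Assumption: "used" is built from audit logs over a review period.
used = {
    "support-bot-01": {"tickets:read", "tickets:write"},
    "data-reader-02": {"reports:read"},
}
print(unused_permissions(granted, used))
# {'support-bot-01': {'tickets:delete'}}
```

A report like this gives reviewers a concrete list of candidates for revocation instead of relying on someone remembering why access was granted.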

3. Use RBAC for Simplicity, ABAC for Precision

- Apply RBAC for baseline access control

- Layer ABAC for high-risk or sensitive workflows

- Use attributes like data sensitivity, user context, or environment

4. Enforce Runtime Guardrails

- Validate every agent action before execution

- Restrict high-risk operations

- Block unauthorized API calls in real time

- Apply output validation to reduce misuse

5. Monitor, Log, and Audit Agent Behavior

- Maintain comprehensive audit trails

- Track agent permissions and actions

- Alert on anomalous or unexpected behavior

- Support forensic analysis after incidents
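
Alerting on anomalous behavior can start very simply. The sketch below flags an agent whose call rate exceeds a fixed threshold within a sliding time window. It is a deliberately basic heuristic, and the class name, threshold, and window size are assumptions; production systems usually combine several signals.

```python
from collections import defaultdict, deque


class RateAnomalyDetector:
    """Flag an agent that makes more than max_calls within window_seconds."""

    def __init__(self, max_calls: int, window_seconds: float) -> None:
        self.max_calls = max_calls
        self.window_seconds = window_seconds
        self._calls = defaultdict(deque)  # agent_id -> recent call timestamps

    def record(self, agent_id: str, timestamp: float) -> bool:
        """Record one call; return True if the agent is now over the limit."""
        calls = self._calls[agent_id]
        calls.append(timestamp)
        # Drop timestamps that have fallen out of the sliding window.
        while calls and calls[0] <= timestamp - self.window_seconds:
            calls.popleft()
        return len(calls) > self.max_calls


detector = RateAnomalyDetector(max_calls=3, window_seconds=60)
flags = [detector.record("support-bot-01", t) for t in (0, 10, 20, 30)]
print(flags)  # [False, False, False, True]
```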

6. Align Access Control with Security Policies

- Map agent permissions to existing security policies

- Involve security teams early in agent design

- Treat AI agents as first-class identities within your security posture

7. Design for Compliance and Data Protection

- Limit access to regulated data

- Support data minimization

- Enable traceability for compliance reviews

- Prepare for regulatory scrutiny as AI governance evolves

Final Thoughts: Access Control as a Foundation for Secure AI Agents

As enterprises move toward more AI-driven, autonomous systems, AI agent access control is no longer optional. It is a foundational security capability that protects sensitive data, reduces operational risk, and enables safe automation at scale.

Organizations that treat AI agents like traditional applications—or worse, like anonymous tools—will struggle with visibility, compliance, and trust. By implementing strong identity management, least-privilege permissions, and real-time guardrails, security teams can confidently deploy and secure AI agents across the enterprise.

About WitnessAI

WitnessAI is the confidence layer for enterprise AI, providing the unified platform to observe, control, and protect all AI activity. We govern your entire workforce, human employees and AI agents alike, with network-level visibility and intent-based controls. We deliver runtime security for models, applications, and agents. Our single-tenant architecture ensures data sovereignty and compliance. Learn more at witness.ai.