As organizations move beyond single-purpose models toward autonomous and adaptive AI systems, governance requirements are changing rapidly. Traditional AI governance frameworks—designed for static models and offline decision-making—are increasingly insufficient for agentic AI systems that plan, act, and interact across complex environments in real time.

This article provides a structured, enterprise-ready overview of the agentic AI governance framework: what it is, why it matters, and how it enables responsible, auditable, and scalable AI adoption in high-stakes environments.

What Is an Agentic AI Governance Framework?

An agentic AI governance framework is a set of governance models, controls, and safeguards designed specifically for AI agents—systems capable of autonomous decision-making, tool use, and multi-step execution.

Unlike traditional AI governance, which focuses primarily on model development and deployment, agentic AI governance spans the entire AI lifecycle, including:

- Agent design and intent definition

- Runtime decision-making and agent actions

- Human oversight and human-in-the-loop controls

- Continuous monitoring and risk assessment

- Attribution, explainability, and auditability of outputs

The goal is to ensure that autonomous AI systems remain aligned with organizational objectives, regulatory compliance requirements, and responsible AI principles—even as they adapt in real time.

What Is an AI Agent?

An AI agent is a software system that uses AI models, most often large language models (LLMs), to perform complex tasks autonomously.

Unlike traditional AI, which typically produces a single output in response to an input, AI agents can:

- Plan and sequence actions across workflows

- Call APIs and interact with multiple data sources

- Make decisions based on real-time context

- Execute actions without direct human intervention

Examples of AI agents in real-world enterprise use cases include:

- Autonomous cybersecurity triage agents

- Healthcare workflow automation agents

- AI-driven customer support and virtual assistants

- Data analysis agents operating across enterprise ecosystems

Because these systems act independently, their actions introduce new risks that require governance strategies purpose-built for autonomy.

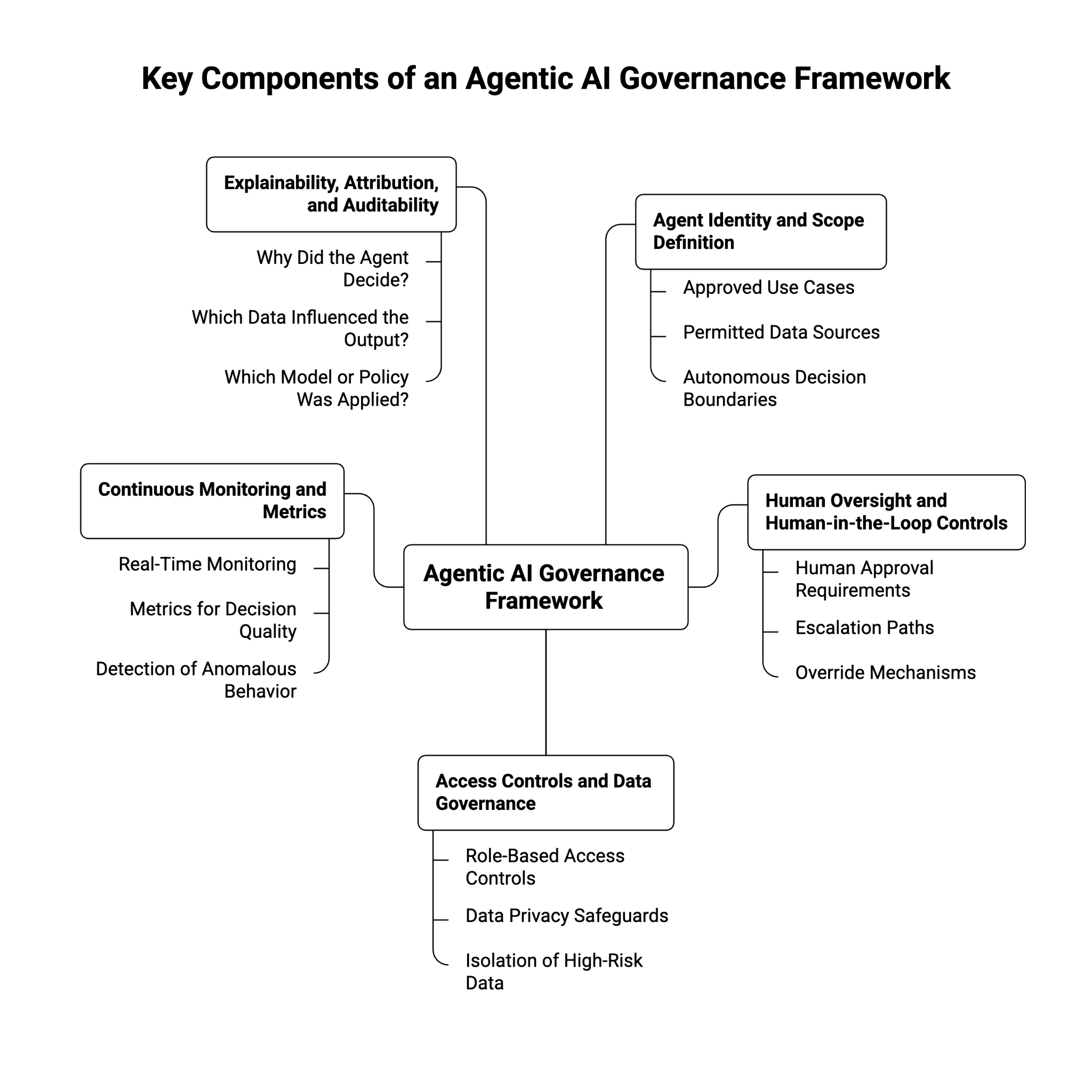

What Are the Key Components of an Agentic AI Governance Framework?

A robust agentic AI governance framework is end-to-end, covering both design-time and runtime controls. Key components include:

1. Agent Identity and Scope Definition

Governance begins by defining what each AI agent is allowed to do:

- Approved use cases and workflows

- Permitted data sources and APIs

- Boundaries for autonomous decision-making

Clear scope definition reduces each agent's attack surface and limits unintended behavior.
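To make scope definition concrete, here is a minimal sketch of a deny-by-default scope check that runs before each agent action. The names `AgentScope`, `ProposedAction`, and `check_scope`, and the example tool and data-source identifiers, are hypothetical illustrations rather than any specific product's or library's API. The sketch assumes every action declares the tool it calls, the data source it reads (if any), and its step number within the current run.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class AgentScope:
    """Hypothetical declarative scope for a single agent (deny by default)."""

    agent_id: str
    allowed_tools: frozenset[str]         # approved APIs / tools
    allowed_data_sources: frozenset[str]  # permitted data sources
    max_autonomous_steps: int             # boundary on unattended multi-step runs


@dataclass(frozen=True)
class ProposedAction:
    """An action the agent wants to take, checked before execution."""

    tool: str
    data_source: Optional[str]
    step_number: int


def check_scope(scope: AgentScope, action: ProposedAction) -> tuple[bool, str]:
    """Return (allowed, reason); anything not explicitly listed is denied."""
    if action.tool not in scope.allowed_tools:
        return False, f"tool '{action.tool}' is outside the agent's scope"
    if (
        action.data_source is not None
        and action.data_source not in scope.allowed_data_sources
    ):
        return False, f"data source '{action.data_source}' is not approved"
    if action.step_number > scope.max_autonomous_steps:
        return False, "autonomous step budget exceeded"
    return True, "within scope"


if __name__ == "__main__":
    triage = AgentScope(
        agent_id="soc-triage-01",
        allowed_tools=frozenset({"search_alerts", "enrich_ip"}),
        allowed_data_sources=frozenset({"siem_alerts"}),
        max_autonomous_steps=10,
    )
    print(check_scope(triage, ProposedAction("enrich_ip", "siem_alerts", 3)))
    print(check_scope(triage, ProposedAction("delete_user", None, 4)))
```

Keeping the scope as data rather than code means it can be reviewed, versioned, and audited like any other policy artifact.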

2. Human Oversight and Human-in-the-Loop Controls

Not all decisions should be fully autonomous. Effective governance frameworks define:

- When human approval is required

- Escalation paths for high-risk or high-stakes decisions

- Override mechanisms for unsafe outputs

This balance enables automation while preserving accountability.
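One common way to encode "when human approval is required" is a risk-tiered router: low-risk actions run automatically, mid-risk actions wait for a reviewer, and high-risk actions are blocked outright. The sketch below assumes a risk score in [0, 1] is already available for each action (how it is computed is out of scope here); the thresholds, `route_action`, and `execute_with_oversight` are hypothetical placeholders, not a prescribed policy.

```python
from __future__ import annotations

from enum import Enum
from typing import Callable


class Decision(Enum):
    AUTO_APPROVE = "auto_approve"
    NEEDS_HUMAN = "needs_human"
    BLOCK = "block"


# Hypothetical thresholds; real values would come from the organization's risk policy.
AUTO_APPROVE_BELOW = 0.3
BLOCK_AT_OR_ABOVE = 0.9


def route_action(risk_score: float) -> Decision:
    """Map a risk score in [0, 1] to an oversight decision."""
    if not 0.0 <= risk_score <= 1.0:
        raise ValueError("risk_score must be in [0, 1]")
    if risk_score >= BLOCK_AT_OR_ABOVE:
        return Decision.BLOCK
    if risk_score < AUTO_APPROVE_BELOW:
        return Decision.AUTO_APPROVE
    return Decision.NEEDS_HUMAN


def execute_with_oversight(
    action_name: str,
    risk_score: float,
    run: Callable[[], str],
    ask_human: Callable[[str, float], bool],
) -> str:
    """Run the action only if policy, and a reviewer when required, allow it."""
    decision = route_action(risk_score)
    if decision is Decision.BLOCK:
        return f"blocked: {action_name}"
    if decision is Decision.NEEDS_HUMAN and not ask_human(action_name, risk_score):
        return f"rejected by reviewer: {action_name}"
    return run()


if __name__ == "__main__":
    def reviewer_denies(name: str, score: float) -> bool:
        """Stand-in for a real approval queue; always says no."""
        return False

    print(execute_with_oversight("refund_order", 0.5, lambda: "refunded", reviewer_denies))
```

Passing the reviewer in as a callback keeps the escalation path pluggable: the same gate can route to a ticket queue, a chat approval, or an on-call engineer.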

3. Access Controls and Data Governance

Agentic AI systems often interact with sensitive data. Governance frameworks must enforce:

- Role-based and attribute-based access controls

- Data privacy safeguards for regulated environments

- Isolation of high-risk data sources

This is especially critical in healthcare, cybersecurity, and enterprise AI deployments.
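Role-based and attribute-based controls are often layered: the role decides whether an agent may perform an operation at all, and attributes of the request (here, the data's classification and the declared purpose) narrow that further. The sketch below is illustrative only; the roles, permission strings, classification labels such as `"phi"`, and the purpose list are all assumed for the example.

```python
from __future__ import annotations

from dataclasses import dataclass

# Hypothetical role -> permission mapping (the role-based layer).
ROLE_PERMISSIONS: dict[str, frozenset[str]] = {
    "support_agent": frozenset({"read:tickets", "read:customer_profile"}),
    "clinical_scheduler": frozenset({"read:appointments", "write:appointments"}),
}

# Data classes isolated behind an extra purpose check (assumed labels).
HIGH_RISK_CLASSES = frozenset({"phi", "pci"})
APPROVED_HIGH_RISK_PURPOSES = frozenset({"treatment", "fraud_investigation"})


@dataclass(frozen=True)
class AccessRequest:
    agent_role: str
    permission: str
    data_classification: str         # e.g. "public", "internal", "phi"
    agent_clearance: frozenset[str]  # classifications this agent may touch
    purpose: str                     # declared purpose of the access


def is_allowed(req: AccessRequest) -> bool:
    """Deny unless both the role-based and attribute-based checks pass."""
    # RBAC: the agent's role must grant the requested permission.
    if req.permission not in ROLE_PERMISSIONS.get(req.agent_role, frozenset()):
        return False
    # ABAC: the data's classification must be within the agent's clearance.
    if req.data_classification not in req.agent_clearance:
        return False
    # Isolation: high-risk data additionally requires an approved purpose.
    if (
        req.data_classification in HIGH_RISK_CLASSES
        and req.purpose not in APPROVED_HIGH_RISK_PURPOSES
    ):
        return False
    return True
```

For example, a scheduling agent cleared for `"phi"` could read appointments for `"treatment"`, but the same request with a `"marketing"` purpose would be refused by the isolation check.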

4. Continuous Monitoring and Metrics

Static compliance checks are insufficient. Agentic AI governance requires:

- Real-time monitoring of agent actions

- Metrics for decision quality, risk exposure, and drift

- Detection of anomalous or unauthorized behavior

Continuous monitoring ensures governance adapts as agents evolve.
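As a small illustration of runtime monitoring, the sketch below watches a stream of agent actions for two signals only: calls to tools the agent is not authorized to use, and bursts of activity above a per-agent rate within a sliding time window. Decision-quality and drift metrics would need additional inputs and are not covered. `ActionMonitor` and its parameters are hypothetical, and the code assumes each agent's timestamps arrive in non-decreasing order.

```python
from __future__ import annotations

from collections import defaultdict, deque
from dataclasses import dataclass, field


@dataclass
class ActionMonitor:
    """Hypothetical runtime monitor for two signals: unauthorized tools and bursts."""

    max_actions: int                          # actions allowed per window
    window_seconds: float                     # sliding-window length
    allowed_tools: dict[str, frozenset[str]]  # agent_id -> permitted tools
    _history: defaultdict[str, deque[float]] = field(
        default_factory=lambda: defaultdict(deque), init=False, repr=False
    )

    def observe(self, agent_id: str, tool: str, timestamp: float) -> list[str]:
        """Record one action (timestamps non-decreasing) and return any alerts."""
        alerts: list[str] = []
        if tool not in self.allowed_tools.get(agent_id, frozenset()):
            alerts.append(f"unauthorized tool '{tool}' used by {agent_id}")
        window = self._history[agent_id]
        window.append(timestamp)
        # Evict timestamps that have slid out of the window.
        while timestamp - window[0] > self.window_seconds:
            window.popleft()
        if len(window) > self.max_actions:
            alerts.append(
                f"rate anomaly: {len(window)} actions in "
                f"{self.window_seconds}s by {agent_id}"
            )
        return alerts
```

Returning alerts instead of raising lets the caller decide the response: log, page a human, or pause the agent.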

5. Explainability, Attribution, and Auditability

For regulatory compliance and trust, organizations must be able to answer:

- Why did the agent make this decision?

- Which data sources influenced the output?

- Which AI model or policy was applied?

Agentic AI governance frameworks emphasize auditable decision-making across the AI lifecycle.
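The three audit questions above map naturally onto fields of a per-action audit record. The sketch below stores those fields in an append-only log in which each record includes the SHA-256 digest of the previous one, so editing any stored record causes verification to fail. The field names, `AuditRecord`, and `AuditLog` are assumptions for illustration, not a standard schema.

```python
from __future__ import annotations

import hashlib
import json
from dataclasses import asdict, dataclass, replace


@dataclass(frozen=True)
class AuditRecord:
    agent_id: str
    action: str
    model_version: str             # which AI model was used
    policy_id: str                 # which governance policy was applied
    data_sources: tuple[str, ...]  # which sources influenced the output
    rationale: str                 # recorded explanation for the decision
    prev_hash: str                 # digest of the previous record


class AuditLog:
    """Append-only, hash-chained log: altering any stored record fails verify()."""

    GENESIS = "0" * 64

    def __init__(self) -> None:
        self.entries: list[tuple[AuditRecord, str]] = []

    @staticmethod
    def digest(record: AuditRecord) -> str:
        payload = json.dumps(asdict(record), sort_keys=True).encode("utf-8")
        return hashlib.sha256(payload).hexdigest()

    def append(
        self,
        agent_id: str,
        action: str,
        model_version: str,
        policy_id: str,
        data_sources: list[str],
        rationale: str,
    ) -> str:
        prev = self.entries[-1][1] if self.entries else self.GENESIS
        record = AuditRecord(
            agent_id, action, model_version, policy_id,
            tuple(data_sources), rationale, prev,
        )
        record_hash = self.digest(record)
        self.entries.append((record, record_hash))
        return record_hash

    def verify(self) -> bool:
        """Recompute every digest and check each link to its predecessor."""
        prev = self.GENESIS
        for record, record_hash in self.entries:
            if record.prev_hash != prev or self.digest(record) != record_hash:
                return False
            prev = record_hash
        return True


if __name__ == "__main__":
    log = AuditLog()
    log.append("support-bot", "issue_refund", "model-v3", "refund-policy-7",
               ["orders_db"], "order arrived damaged; under refund limit")
    log.append("support-bot", "send_email", "model-v3", "comms-policy-2",
               ["crm"], "notify customer of refund")
    print(log.verify())  # True
    # Simulate tampering with the first record's rationale.
    original, stored_hash = log.entries[0]
    log.entries[0] = (replace(original, rationale="edited later"), stored_hash)
    print(log.verify())  # False
```

A hash chain only reveals tampering if the latest digest is also kept somewhere the person editing the log cannot rewrite, such as a separate system or write-once storage.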

Why Existing Governance Frameworks Fall Short

Traditional AI governance frameworks were built for non-autonomous systems. They often assume:

- Static models deployed in isolation

- Predictable, one-off outputs

- Limited interaction with external systems

Agentic AI systems break these assumptions.

Key gaps include:

- Lack of runtime governance for real-time decision-making

- Insufficient controls for autonomous agents executing workflows

- Poor attribution across multi-step agent actions

- Limited coverage of emergent behaviors and adaptive logic

As a result, organizations relying solely on traditional AI governance face elevated AI risk when deploying autonomous agents.

How Does an Agentic AI Governance Framework Enhance AI Systems?

Implementing an agentic AI governance framework strengthens AI systems across multiple dimensions:

Improved Risk Management

By embedding risk assessment into the AI lifecycle, organizations can:

- Identify high-risk use cases early

- Apply safeguards proportionate to impact

- Reduce exposure from uncontrolled automation

Scalable and Adaptive Governance

Agentic governance frameworks are designed to scale across:

- Multiple AI agents

- Diverse ecosystems and data sources

- Rapidly evolving generative AI capabilities

This supports enterprise-wide AI adoption without sacrificing control.

Enhanced Trust and Stakeholder Confidence

Clear governance models increase confidence among:

- Internal stakeholders and leadership

- Regulators and auditors

- Customers impacted by AI-driven decisions

Trust becomes a competitive advantage, not a blocker.

Optimized Decision-Making

With explainability and attribution built in, organizations can:

- Continuously improve agent performance

- Optimize workflows based on metrics

- Align AI-driven outputs with business objectives

How Does the Agentic AI Governance Framework Address Ethical Concerns?

Ethical risks increase as AI systems gain autonomy. Agentic AI governance directly addresses these concerns through structured controls.

Responsible AI by Design

Ethical considerations are embedded into governance strategies, not added later. This includes:

- Bias evaluation across agent decision-making

- Safeguards against harmful or unsafe outputs

- Alignment with responsible AI initiatives

Regulatory Compliance for High-Risk AI

Agentic AI governance frameworks help organizations align with emerging regulations such as the EU AI Act, as well as with voluntary guidance such as the NIST AI Risk Management Framework (AI RMF).

This is particularly important for:

- High-risk AI systems

- Autonomous AI systems impacting individuals

- AI used in regulated sectors like healthcare and cybersecurity

Human Accountability and Oversight

By maintaining human-in-the-loop mechanisms, governance frameworks ensure:

- Humans remain accountable for AI outcomes

- Ethical judgment is preserved in high-stakes scenarios

- AI agents augment—not replace—human decision-making

Why Agentic AI Governance Is Becoming a Business Imperative

As AI-driven automation expands, organizations without strong governance frameworks face:

- Increased regulatory and compliance risk

- Privacy violations involving sensitive data

- Loss of control over autonomous agent behavior

Conversely, enterprises that invest in agentic AI governance gain:

- Safer and more predictable AI systems

- Faster, more confident AI adoption

- A durable competitive advantage in AI-driven markets

Agentic AI governance is no longer optional—it is foundational to responsible, scalable AI deployment.

About WitnessAI

WitnessAI is the confidence layer for enterprise AI, providing the unified platform to observe, control, and protect all AI activity. We govern your entire workforce, human employees and AI agents alike, with network-level visibility and intent-based controls. We deliver runtime security for models, applications, and agents. Our single-tenant architecture ensures data sovereignty and compliance. Learn more at witness.ai.