What is Agentic AI Security?

Agentic AI security refers to the discipline of safeguarding autonomous agents—AI systems capable of independent decision-making and action—against cybersecurity threats, misuse, and unintended outcomes. Unlike traditional software, AI agents interact dynamically with APIs, data sources, and users in real time, executing functions that can impact business operations, data integrity, and security posture.

An AI agent may use large language models (LLMs) or generative AI (GenAI) systems to automate workflows, interpret user intent, and act across software environments. For instance, an agent might analyze customer requests, pull data from SaaS applications, and execute tasks across connected systems. While this autonomy improves efficiency, it also expands the attack surface—creating new vectors for prompt injection attacks, data leaks, and privilege escalation.

Agentic AI security focuses on embedding security measures, guardrails, and continuous monitoring into the full lifecycle of autonomous agents. This ensures that AI systems remain safe, compliant, and aligned with organizational objectives.

What are the Security Risks with AI Agents?

AI agents operate within complex, interconnected ecosystems—accessing APIs, processing sensitive information, and triggering automated actions. This creates multiple security risks that traditional cybersecurity controls often fail to address.

1. Prompt Injection Attacks

Prompt injection attacks manipulate the agent’s instructions or LLM responses to override intended behavior. Malicious actors can exploit natural language prompts to force unauthorized actions—such as exfiltrating data or altering outputs.

2. Unrestricted Permissions

Many agent systems are granted excessive access to internal APIs or datasets. Without proper role-based access control (RBAC) or least privilege enforcement, attackers can leverage a single compromised agent to gain control over multiple connected services.

3. Sensitive Data Exposure

AI agents frequently process sensitive data—including intellectual property, credentials, or customer information. Poor validation or insufficient masking of inputs and outputs can lead to data leaks or unintentional disclosure.

4. Supply Chain and Dependency Risks

Autonomous agents often rely on open-source frameworks, external LLM APIs, or third-party integrations. Each dependency introduces a potential point of compromise—especially if the providers lack robust AI agent security practices.

5. Agent Identity and Authentication Gaps

Without clear agent identity management or authentication protocols, it becomes difficult to verify which agent performed a given action—weakening audit trails and incident response capabilities.

6. Model Manipulation and Drift

Malicious data poisoning or unauthorized fine-tuning can alter agent behavior, leading to unpredictable or harmful outputs. These risks highlight the need for continuous validation and runtime monitoring.

What Makes Securing AI Agents Difficult?

Securing AI agents is inherently complex due to their dynamic, adaptive, and distributed nature. Several structural challenges make traditional cybersecurity models insufficient for this new paradigm.

1. Unpredictable Decision-Making

Autonomous systems powered by LLMs exhibit probabilistic behavior—making their decision pathways difficult to predict or fully audit. This increases the difficulty of enforcing deterministic security controls.

2. Expanded Attack Surface

Each API call, plugin, or external dataset increases the attack surface. Agents that integrate across SaaS platforms or perform real-time automation must be protected against lateral movement and chained exploits.

3. Opaque Workflows

AI agent workflows often operate as black boxes, where internal reasoning and decision processes are not transparent. This limits observability, complicating efforts to detect anomalies or malicious agent actions.

4. Rapid Evolution of the Threat Landscape

The threat landscape surrounding GenAI and LLM agents evolves faster than most organizations can adapt. Attackers continuously discover new prompt injection methods, jailbreaks, and model manipulation strategies.

5. Lack of Standards and Governance

Few official frameworks exist to define agentic AI security best practices. This leaves enterprises to develop their own ad hoc controls, resulting in inconsistent security postures across AI deployments.

How Can Businesses Start Securing AI Agents?

Implementing security for AI agents requires a layered, proactive approach—combining governance, technical controls, and human oversight. The following steps provide a roadmap for businesses beginning their AI agent security journey.

1. Identify All Active and Planned AI Agents

Start with an AI inventory—document all autonomous agents, their functions, and their data connections. This visibility forms the foundation for risk assessment and policy enforcement.

2. Assess Permissions and Access Levels

Apply the principle of least privilege (PoLP) and establish RBAC across APIs, datasets, and services. Each agent should have only the permissions required to perform its assigned functions—nothing more.

3. Implement Strong Authentication and Identity Controls

Use agent identity management to authenticate agents as distinct entities. Multi-factor authentication, signed API requests, and secure token rotation should be enforced across all systems.

4. Integrate Runtime Monitoring

Deploy runtime protection mechanisms to monitor agent behavior in real time. This helps detect deviations, malicious commands, or policy violations before they cause damage.

5. Establish an Incident Response Playbook

Develop an incident response playbook specific to AI agents. Include procedures for isolating compromised agents, revoking tokens, and performing post-incident forensic validation.

6. Conduct Red Teaming Exercises

AI-specific red teaming allows security teams to simulate adversarial conditions—testing the resilience of agentic systems against prompt injections, data poisoning, and misconfiguration exploits.

What Are the Best Practices for Securing AI Agents Against Cyber Threats?

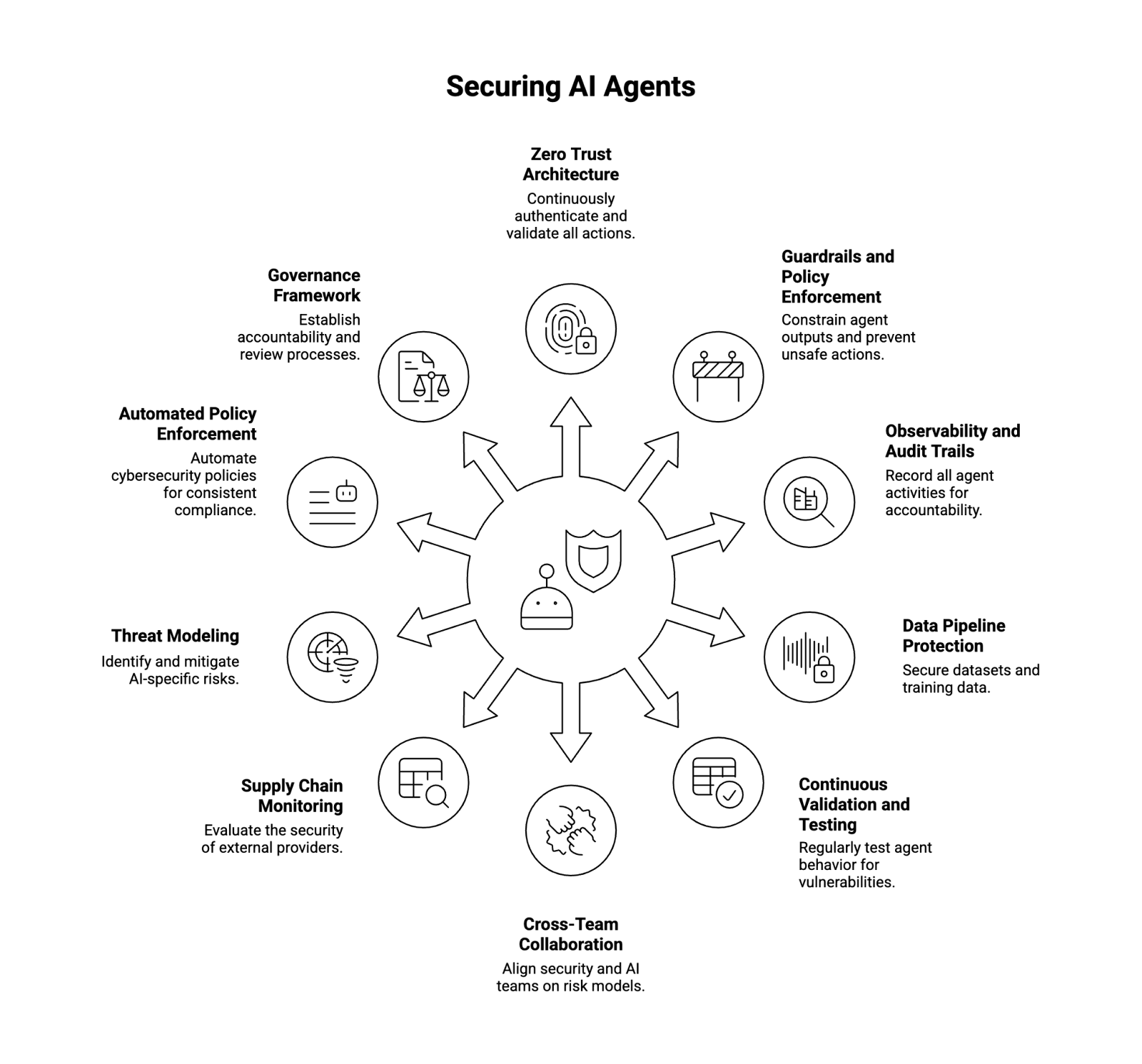

Securing AI agents requires a holistic framework that spans people, processes, and technology. Below are key best practices to maintain strong AI agent security in enterprise environments.

1. Adopt a Zero Trust Architecture

Implement Zero Trust principles—never assume trust based on network location or prior behavior. Continuously authenticate and validate every agent action, user request, and data transaction.

2. Implement Guardrails and Policy Enforcement

Deploy guardrails that constrain agent outputs and prevent access to restricted domains or sensitive data. Use contextual filters to block unsafe or noncompliant actions in real time.

3. Enhance Observability and Audit Trails

Record every agent request, decision, and output. Comprehensive audit trails are essential for accountability, compliance, and forensic analysis.

4. Protect the Data Pipeline

Secure datasets and training data used by AI models. Implement encryption, differential privacy, and validation checks to mitigate data poisoning and data leak risks.

5. Continuously Validate and Test Agents

Perform continuous validation of agent behavior under controlled environments. Regression testing, fuzzing, and adversarial input simulation can help identify vulnerabilities early.

6. Collaborate Across Security and AI Teams

AI agent security is a cross-disciplinary initiative. Security teams, data scientists, and developers should align on risk models, monitoring strategies, and incident escalation workflows.

7. Monitor Supply Chain Dependencies

Evaluate the security maturity of external providers, especially open-source frameworks or hosted LLM APIs. Establish contractual security standards and perform ongoing audits.

8. Integrate Threat Modeling

Adopt threat modeling for AI-specific risks. Map agent data flows, identify sensitive interaction points, and design controls that reduce opportunities for misuse or compromise.

9. Automate Policy Enforcement

Leverage automation to enforce cybersecurity policies across all agent systems—ensuring consistent compliance at scale and reducing human error in sensitive workflows.

10. Develop a Governance Framework

Establish an internal AI governance initiative that defines accountability, metrics, and review processes for all autonomous systems and AI agents.

The Path Forward: Building Trustworthy Agentic AI Systems

As autonomous agents become core components of enterprise automation, the challenge of securing AI agents is shifting from theoretical to operational. Organizations must evolve beyond traditional endpoint security and apply new methods tailored to AI-driven systems—including dynamic validation, runtime protection, and policy-driven access control.

By embracing a defense-in-depth strategy—spanning threat modeling, real-time monitoring, and continuous red teaming—enterprises can reduce vulnerabilities while maintaining innovation. The goal is not just to make AI agents functional, but trustworthy, auditable, and secure by design.

About WitnessAI

WitnessAI is the confidence layer for enterprise AI, providing the unified platform to observe, control, and protect all AI activity. We govern your entire workforce, human employees and AI agents alike, with network-level visibility and intent-based controls. We deliver runtime security for models, applications, and agents. Our single-tenant architecture ensures data sovereignty and compliance. Learn more at witness.ai.