Artificial intelligence (AI) is not a one-time implementation—it’s an ongoing process of development, optimization, and refinement. This process, known as the AI lifecycle, defines how AI systems evolve from concept to real-world deployment and continuous improvement. Understanding this lifecycle is crucial for organizations building AI-powered applications, from predictive models to generative AI solutions.

What Is the AI Lifecycle?

The AI lifecycle refers to the structured process of designing, developing, deploying, and maintaining AI systems. Much like the software development lifecycle (SDLC), it provides a methodology for managing each stage of AI model creation, helping systems remain reliable, ethical, and effective over time.

Each phase of the lifecycle, from problem definition to monitoring, plays a critical role in improving model performance, safeguarding data privacy, and enabling adaptability as new data and use cases emerge. The process is iterative: models must be continuously refined and retrained as conditions and datasets evolve.

What Are the Stages of the AI Lifecycle?

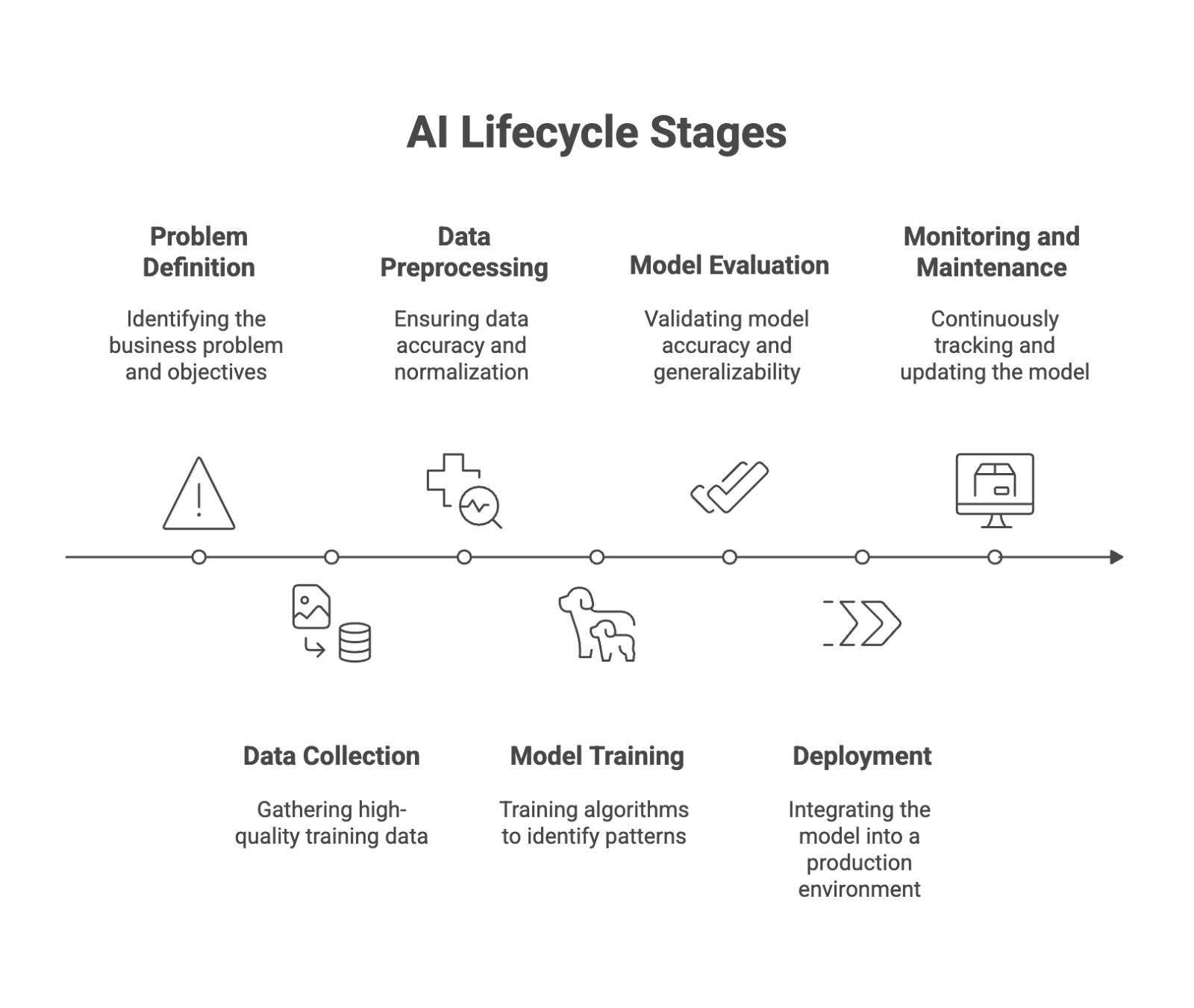

The AI lifecycle can be broken down into seven key stages. Each stage contributes to developing high-quality, explainable, and production-ready AI models that perform consistently in real-world applications.

1. Problem Definition

The first step in the lifecycle is identifying the business problem the AI system will solve. Teams define objectives, success metrics, and scope while engaging key stakeholders to align on expectations and compliance requirements such as GDPR. A clear understanding of goals ensures the chosen algorithms and datasets are relevant to the problem domain.

Key considerations:

- Define measurable performance metrics (e.g., accuracy, precision, recall).

- Identify risks and mitigation strategies for data or ethical concerns.

- Outline success criteria for deployment and maintenance.

2. Data Collection

High-quality training data is the foundation of any AI project. This stage involves gathering data from diverse data sources, including APIs, sensors, and existing systems. Incomplete or biased data can significantly impact model accuracy and trustworthiness.

Best practices:

- Use representative datasets that reflect real-world diversity.

- Manage sensitive information with strict data privacy controls.

- Establish a workflow for collecting real-time and historical data.

3. Data Preprocessing

Raw data often contains missing values, inconsistencies, or noise that can distort model performance. Data preprocessing ensures that input data is accurate, normalized, and ready for model training.

Steps include:

- Handling missing or duplicate records.

- Normalizing and scaling numeric features.

- Encoding categorical data for algorithm compatibility.

- Automating these preparation steps in pipelines so they run consistently at scale.

This phase often consumes a significant portion of the AI development timeline, but it’s critical to building robust and interpretable models.
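
The steps listed above can be sketched in a few lines of standard-library Python. This is a minimal illustration, not a production pipeline: the field names `age` and `plan`, the mean-imputation strategy, and the `preprocess` helper are all assumptions made for the example.

```python
from statistics import mean, pstdev

def preprocess(rows):
    """rows: list of dicts with hypothetical keys 'age' (number or None) and 'plan' (str)."""
    # 1. Drop exact duplicate records while preserving order.
    seen, unique = set(), []
    for row in rows:
        key = (row["age"], row["plan"])
        if key not in seen:
            seen.add(key)
            unique.append(row)

    # 2. Impute missing ages with the mean of the observed ages
    #    (assumes at least one age is present).
    observed = [r["age"] for r in unique if r["age"] is not None]
    fill = mean(observed)
    ages = [r["age"] if r["age"] is not None else fill for r in unique]

    # 3. Standardize the numeric feature to zero mean and unit variance.
    mu, sigma = mean(ages), pstdev(ages)
    scaled = [(a - mu) / sigma if sigma else 0.0 for a in ages]

    # 4. One-hot encode the categorical feature in a stable (sorted) order.
    categories = sorted({r["plan"] for r in unique})
    encoded = [[1.0 if r["plan"] == c else 0.0 for c in categories] for r in unique]

    # Each output row is [scaled_age, one_hot...].
    return [[s] + e for s, e in zip(scaled, encoded)], categories

rows = [
    {"age": 30, "plan": "basic"},
    {"age": None, "plan": "pro"},
    {"age": 30, "plan": "basic"},  # duplicate of the first record
    {"age": 50, "plan": "pro"},
]
features, categories = preprocess(rows)
```

In practice, the imputation value and scaling statistics would normally be computed on the training split only and then reused for validation and production data, so that no information leaks from the evaluation data into the model.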

4. Model Training

During model training, machine learning algorithms learn from prepared datasets to identify patterns and relationships. The choice of model type, ranging from decision trees and neural networks to large language models (LLMs), depends on the use case and data complexity.

Key actions:

- Select and train suitable algorithms.

- Use fine-tuning techniques for pre-trained or generative models.

- Tune hyperparameters against validation data to improve evaluation metrics.

This stage often involves extensive experimentation and iteration to identify the model architecture that delivers the best balance between accuracy, interpretability, and computational efficiency.
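
To make the train-and-tune loop concrete, here is a minimal sketch that fits a one-feature logistic regression by gradient descent and picks a learning rate by validation loss. The toy data, the candidate learning rates, and the function names are assumptions for illustration; real projects would typically rely on an established ML library.

```python
import math

def sigmoid(z):
    # Numerically stable logistic function.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)

def train_logreg(xs, ys, lr, epochs=500):
    """Fit w, b for p(y=1|x) = sigmoid(w*x + b) with batch gradient descent."""
    w, b, n = 0.0, 0.0, len(xs)
    for _ in range(epochs):
        errors = [sigmoid(w * x + b) - y for x, y in zip(xs, ys)]
        w -= lr * sum(e * x for e, x in zip(errors, xs)) / n
        b -= lr * sum(errors) / n
    return w, b

def log_loss(xs, ys, w, b):
    """Mean binary cross-entropy, with probabilities clipped away from 0 and 1."""
    eps = 1e-12
    total = 0.0
    for x, y in zip(xs, ys):
        p = min(max(sigmoid(w * x + b), eps), 1.0 - eps)
        total -= y * math.log(p) + (1 - y) * math.log(1.0 - p)
    return total / len(xs)

def select_learning_rate(train, val, candidates=(0.01, 0.1, 1.0)):
    """Train once per candidate and keep the model with the lowest validation loss."""
    best = None
    for lr in candidates:
        w, b = train_logreg(*train, lr=lr)
        loss = log_loss(*val, w, b)
        if best is None or loss < best[0]:
            best = (loss, lr, w, b)
    return best

# Toy data: negative inputs are class 0, positive inputs are class 1.
train = ([-2.0, -1.0, -0.5, 0.5, 1.0, 2.0], [0, 0, 0, 1, 1, 1])
val = ([-1.5, 1.5], [0, 1])
val_loss, chosen_lr, w, b = select_learning_rate(train, val)
```

The key design point is that the learning rate is chosen on validation data rather than on the training data itself, which keeps the final test set untouched for the evaluation stage.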

5. Model Evaluation

Once trained, the model must be evaluated on held-out test data to assess its accuracy, generalizability, and resilience to real-world data variations.

Common evaluation metrics include:

- Accuracy – How often predictions match true outcomes.

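- Precision and Recall – Precision is the share of predicted positives that are correct; recall is the share of actual positives the model finds.

- F1 Score and ROC-AUC – F1 is the harmonic mean of precision and recall, and ROC-AUC summarizes how well the model ranks positives above negatives across thresholds; both are more informative than accuracy on imbalanced datasets.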

Evaluating on held-out data helps confirm that the model has not overfit and performs well on unseen data. For regulated industries like healthcare or finance, explainability and fairness testing are also critical.
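
As a small worked example of the metrics above, the sketch below computes accuracy, precision, recall, and F1 for binary labels from the confusion-matrix counts. The function name and the sample labels are illustrative assumptions.

```python
def classification_metrics(y_true, y_pred):
    """Compute basic binary-classification metrics (1 = positive, 0 = negative)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)  # true positives
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)  # false positives
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)  # false negatives
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)  # true negatives

    accuracy = (tp + tn) / len(pairs)
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    denom = precision + recall
    f1 = 2 * precision * recall / denom if denom else 0.0
    return {"accuracy": accuracy, "precision": precision, "recall": recall, "f1": f1}

# Imbalanced sample: 2 positives out of 10 records.
y_true = [1, 1, 0, 0, 0, 0, 0, 0, 0, 0]
y_pred = [1, 0, 1, 0, 0, 0, 0, 0, 0, 0]
print(classification_metrics(y_true, y_pred))
# accuracy is 0.8, while precision, recall, and F1 are each 0.5
```

The sample shows why accuracy alone can mislead on imbalanced data: the model is right 80% of the time overall, yet it finds only half of the positive cases.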

6. Deployment

After successful validation, the model is integrated into a production environment, becoming part of a real-world AI application. Deployment can take many forms—such as embedding into apps, connecting via APIs, or integrating into automated workflows.

Deployment considerations:

- Use version control for model and dataset tracking.

- Integrate with existing systems through secure APIs.

- Monitor model output for anomalies or drift.

Automated deployment pipelines can streamline model rollout and reduce downtime when retraining or updating models.
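
One simple way to track which model and dataset went into a release is to record a content hash of each artifact. The sketch below assumes the artifacts are available as bytes; the `build_release_record` helper and its field names are hypothetical, and dedicated model registries offer far more than this.

```python
import hashlib

def fingerprint(data: bytes) -> str:
    """Return the SHA-256 hex digest of an artifact's bytes."""
    return hashlib.sha256(data).hexdigest()

def build_release_record(model_bytes: bytes, dataset_bytes: bytes, version: str) -> dict:
    """Bundle a human-readable version label with content hashes of both artifacts."""
    return {
        "version": version,
        "model_sha256": fingerprint(model_bytes),
        "dataset_sha256": fingerprint(dataset_bytes),
    }

# Placeholder bytes stand in for a serialized model and a training data file.
record = build_release_record(b"serialized-model", b"training-data", "1.0.0")
```

Because the hashes depend only on content, any silent change to the model file or training data produces a different record, which makes it easier to tell exactly what is running in production.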

7. Monitoring and Maintenance

The lifecycle doesn’t end after deployment. Continuous monitoring and maintenance ensure that the model remains accurate, compliant, and aligned with changing business conditions.

Over time, models can degrade as input data drifts or user behavior changes. Continuous model performance tracking helps detect these changes early.

Maintenance actions include:

- Setting up continuous monitoring for key metrics.

- Retraining with new data to prevent performance decay.

- Implementing feedback loops for real-time optimization.
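
Drift monitoring can be illustrated with the Population Stability Index (PSI), one common way to compare a feature's distribution at training time with its distribution in production. This is a sketch under stated assumptions: the bin edges, the sample values, and the `psi` helper are made up for the example, and the frequently quoted alert thresholds (around 0.1 and 0.25) are rules of thumb rather than standards.

```python
import math

def psi(expected, actual, edges):
    """Population Stability Index between two samples, using shared ascending bin edges."""
    def proportions(values):
        counts = [0] * (len(edges) + 1)
        for v in values:
            # The bin index is the number of edges at or below the value.
            counts[sum(1 for e in edges if v >= e)] += 1
        # Floor each proportion so empty bins do not cause log(0).
        return [max(c / len(values), 1e-6) for c in counts]

    p, q = proportions(expected), proportions(actual)
    return sum((qi - pi) * math.log(qi / pi) for pi, qi in zip(p, q))

baseline = [0.1, 0.2, 0.3, 0.4, 0.5, 0.6, 0.7, 0.8, 0.9, 1.0]    # training-time values
production = [0.5, 0.6, 0.7, 0.8, 0.9, 1.0, 1.1, 1.2, 1.3, 1.4]  # shifted upward
edges = [0.25, 0.5, 0.75]

drift_score = psi(baseline, production, edges)
needs_review = drift_score > 0.25  # rule-of-thumb threshold, an assumption
```

A check like this can run on a schedule for each important input feature, with scores above the chosen threshold triggering a review or a retraining job.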

Why Is the AI Lifecycle Important to Manage?

Effective management of the AI lifecycle ensures that organizations gain long-term value from their AI systems while reducing operational, ethical, and regulatory risks.

Improved Efficiency

By streamlining workflows, teams can automate repetitive tasks like data preprocessing or deployment—freeing up resources for innovation.

Enhanced Accuracy

Consistent retraining and validation help maintain high model performance, reduce bias, and improve prediction reliability.

Cost Savings

Automation and proactive monitoring reduce resource waste from underperforming models or redundant manual processes.

Personalization

Iterative improvement allows AI to adapt to user feedback and deliver tailored experiences across applications—from recommendation engines to healthcare analytics.

Scalability

A well-defined lifecycle supports scalable AI development, enabling the integration of multiple models and use cases within enterprise systems.

How Do You Manage the AI Lifecycle Effectively?

Managing the AI lifecycle effectively requires a structured, iterative development process supported by the right tools and governance.

1. Establish Governance and Documentation

Maintain transparency across all lifecycle stages, documenting datasets, algorithms, and model assumptions for compliance and auditability.
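
Documentation is easier to audit when it is structured and stored alongside the model. The sketch below shows one possible shape for such a record; the `ModelCard` class, its fields, and the sample values are assumptions for illustration, not a standard schema.

```python
import json
from dataclasses import dataclass, field, asdict

@dataclass
class ModelCard:
    """A lightweight, machine-readable record of what a model is and how it was built."""
    name: str
    version: str
    intended_use: str
    algorithm: str
    training_datasets: list
    assumptions: list = field(default_factory=list)
    known_limitations: list = field(default_factory=list)

    def to_json(self) -> str:
        # Sorted keys keep the output stable, which makes diffs in version control readable.
        return json.dumps(asdict(self), indent=2, sort_keys=True)

card = ModelCard(
    name="churn-predictor",            # hypothetical model name
    version="1.0.0",
    intended_use="Rank accounts by churn risk for the retention team.",
    algorithm="logistic regression",
    training_datasets=["accounts_2023_q4"],  # hypothetical dataset identifier
    assumptions=["Labels reflect cancellations within 90 days."],
)
print(card.to_json())
```

Committing this JSON next to the model artifact gives reviewers and auditors a single place to see the datasets, algorithm, and assumptions behind each version.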

2. Automate Repetitive Tasks

Leverage automated pipelines for data ingestion, model training, and deployment. This minimizes human error and ensures repeatability.
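
At its simplest, an automated pipeline is an ordered list of named steps that always run the same way. The sketch below illustrates that idea with placeholder steps; the step functions and the `run_pipeline` helper are hypothetical, and workflow orchestration tools add scheduling, retries, and logging on top of this pattern.

```python
def run_pipeline(steps, data=None):
    """Apply each (name, function) step in order and record which steps ran."""
    completed = []
    for name, step in steps:
        data = step(data)
        completed.append(name)
    return data, completed

# Placeholder steps standing in for real ingestion, cleaning, and training code.
def ingest(_):
    return [3.0, None, 1.0, 2.0]

def clean(values):
    return [v for v in values if v is not None]

def train(values):
    # A stand-in "model": just the mean of the cleaned values.
    return {"mean": sum(values) / len(values), "n": len(values)}

steps = [("ingest", ingest), ("clean", clean), ("train", train)]
model, completed = run_pipeline(steps)
```

Keeping the step list in code means every run follows the same sequence, which is what makes results repeatable and failures easier to trace to a specific stage.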

3. Integrate Continuous Monitoring

Deploy tools for real-time tracking of performance metrics and model drift. Continuous monitoring ensures models remain accurate as environments change.

4. Implement Robust Data Privacy Controls

Apply encryption, anonymization, and GDPR-compliant policies when handling sensitive information.
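
As one concrete example, direct identifiers can be replaced with keyed hashes before data reaches a training pipeline. The sketch below uses HMAC-SHA256 from the Python standard library; the field names, the key handling, and the `scrub_record` helper are assumptions for illustration. Note that this is pseudonymization rather than full anonymization, and pseudonymized data is generally still treated as personal data under GDPR.

```python
import hashlib
import hmac

def pseudonymize(value: str, secret_key: bytes) -> str:
    """Replace a value with a keyed hash so it stays joinable but not directly readable."""
    return hmac.new(secret_key, value.encode("utf-8"), hashlib.sha256).hexdigest()

def scrub_record(record: dict, sensitive_fields: set, secret_key: bytes) -> dict:
    """Return a copy of the record with sensitive fields pseudonymized."""
    return {
        key: pseudonymize(str(value), secret_key) if key in sensitive_fields else value
        for key, value in record.items()
    }

# In practice the key would come from a secrets manager, never from source code.
key = b"example-key-for-illustration-only"
record = {"email": "user@example.com", "age": 41}
safe_record = scrub_record(record, {"email"}, key)
```

Using a keyed hash rather than a plain hash means someone without the key cannot simply hash a list of known emails to reverse the mapping, while the same input still maps to the same token for joins across datasets.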

5. Encourage Stakeholder Collaboration

Engage data scientists, engineers, domain experts, and compliance teams throughout the development lifecycle to ensure models align with business objectives and ethical standards.

6. Prioritize Explainability and Trust

Build transparent models that stakeholders can understand and trust. Explainability supports regulatory compliance and responsible AI adoption.

Conclusion

The AI lifecycle is more than a technical roadmap: it is a strategic framework that helps AI models remain effective, ethical, and adaptable across their entire lifespan. By understanding and managing each phase, from problem definition to continuous monitoring, organizations can develop AI systems that scale reliably, optimize operations, and deliver measurable value.

About WitnessAI

WitnessAI is the confidence layer for enterprise AI, providing the unified platform to observe, control, and protect all AI activity. We govern your entire workforce, human employees and AI agents alike, with network-level visibility and intent-based controls. We deliver runtime security for models, applications, and agents. Our single-tenant architecture ensures data sovereignty and compliance. Learn more at witness.ai.